- cross-posted to:

- [email protected]

- [email protected]

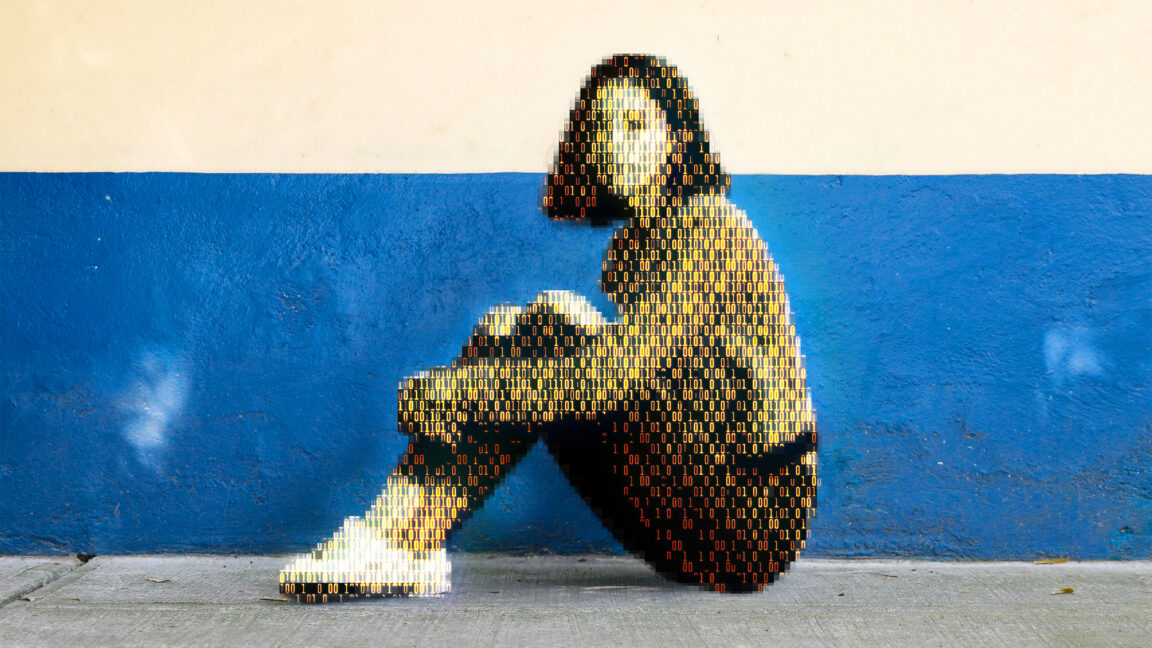

Today, a prominent child safety organization, Thorn, in partnership with a leading cloud-based AI solutions provider, Hive, announced the release of an AI model designed to flag unknown CSAM at upload. It’s the earliest AI technology striving to expose unreported CSAM at scale.

At this point, how does this differ from generating AI-powered CP? Morons.

Uh, well, this one tells you whether an image looks like it or not. It doesn’t generate images.

If it knows whether an image looks like it, then it’s only one step further to generate something like it.

Correct, this kind of software is trained on CP data. So such models can be easily used to generate CP instead of recognizing it, which makes them very dangerous indeed.

Same idea as the current models that are trained to recognize cars: these models can also be used to generate a car from noise as a starting point.

I’m pretty sure you can’t just run it in reverse like that. There’s a whole different training and operation methodology you have to use to support generating images rather than simple classification.

It differs in basically being something completely different. This is a classification model; it doesn’t have generative capabilities. Even if you were to get the model and its weights, and you tried to reverse engineer an “input” that it would classify as CP, it would most likely look like pure noise to you.
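To make the "can't just run it in reverse" point a bit more concrete, here is a rough sketch with a toy linear classifier. Everything in it is made up for illustration (random `weights`, an 8x8 flattened "image", the `score` and `project_out` helpers); it says nothing about how the Thorn/Hive model is actually built. It only shows that a classifier squashes a whole image down to one number, so very different inputs can land on exactly the same score, and the score alone can't tell you which image produced it.

```python
import math
import random


def score(weights, bias, pixels):
    """Toy linear classifier: flattened image in, a single probability out."""
    logit = sum(w * p for w, p in zip(weights, pixels)) + bias
    return 1.0 / (1.0 + math.exp(-logit))


def project_out(weights, vec):
    """Remove the part of vec that points along weights.

    Adding the result to an image leaves the classifier's logit unchanged.
    """
    scale = sum(w * v for w, v in zip(weights, vec)) / sum(w * w for w in weights)
    return [v - scale * w for w, v in zip(weights, vec)]


rng = random.Random(0)
n_pixels = 64  # an 8x8 "image", flattened (hypothetical size)
weights = [rng.gauss(0, 1) for _ in range(n_pixels)]  # hypothetical weights
bias = 0.0

# Two inputs that differ a lot in pixel space...
image_a = [rng.random() for _ in range(n_pixels)]
offset = project_out(weights, [rng.gauss(0, 1) for _ in range(n_pixels)])
image_b = [a + o for a, o in zip(image_a, offset)]

# ...but that the classifier cannot tell apart.
score_a = score(weights, bias, image_a)
score_b = score(weights, bias, image_b)
pixel_gap = math.sqrt(sum((a - b) ** 2 for a, b in zip(image_a, image_b)))

print(f"score_a={score_a:.6f} score_b={score_b:.6f} pixel_gap={pixel_gap:.2f}")
```

A real image classifier is a deep network rather than one linear layer, but the same many-to-one squeeze applies: going from a score back to a picture is a separate problem that a classifier isn't trained to solve.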

Moron