the writer Nina Illingworth, whose work has been a constant source of inspiration, posted this excellent analysis of the reality of the AI bubble on Mastodon (featuring a shout-out to the recent articles on the subject from Amy Castor and @[email protected]):

Naw, I figured it out; they absolutely don’t care if AI doesn’t work.

They really don’t. They’re pot-committed; these dudes aren’t tech pioneers, they’re money muppets playing the bubble game. They are invested in increasing the valuation of their investments and cashing out, it’s literally a massive scam. Reading a bunch of stuff by Amy Castor and David Gerard finally got me there in terms of understanding it’s not real and they don’t care. From there it was pretty easy to apply a historical analysis of the last 10 bubbles, who profited, at which point in the cycle, and where the real money was made.

The plan is more or less to foist AI on establishment actors who don’t know their ass from their elbow, causing investment valuations to soar, and then cash the fuck out before anyone really realizes it’s total gibberish and unlikely to get better at the rate and speed they were promised.

Particularly in the media, it’s all about adoption and cashing out, not actually replacing media. Nobody making decisions and investments here particularly wants an informed populace, after all.

the linked mastodon thread also has a very interesting post from an AI skeptic who used to work at Microsoft and seems to have gotten laid off for their skepticism

I’ve got this absolutely massive draft document where I’ve tried to articulate what this person explains in a few sentences. The gradual removal of immediate purpose from products has become deliberate. This combination of conceptual solutions to conceptual problems gives the business a free pass from any kind of distinct accountability. It is a product that has potential to have potential. AI seems to achieve this better than anything ever before. Crypto is good at it but it stumbles at the cash-out point so it has to keep cycling through suckers. AI can just keep chugging along on being “powerful” for everything and nothing in particular, and keep becoming more powerful, without any clear benchmark of progress.

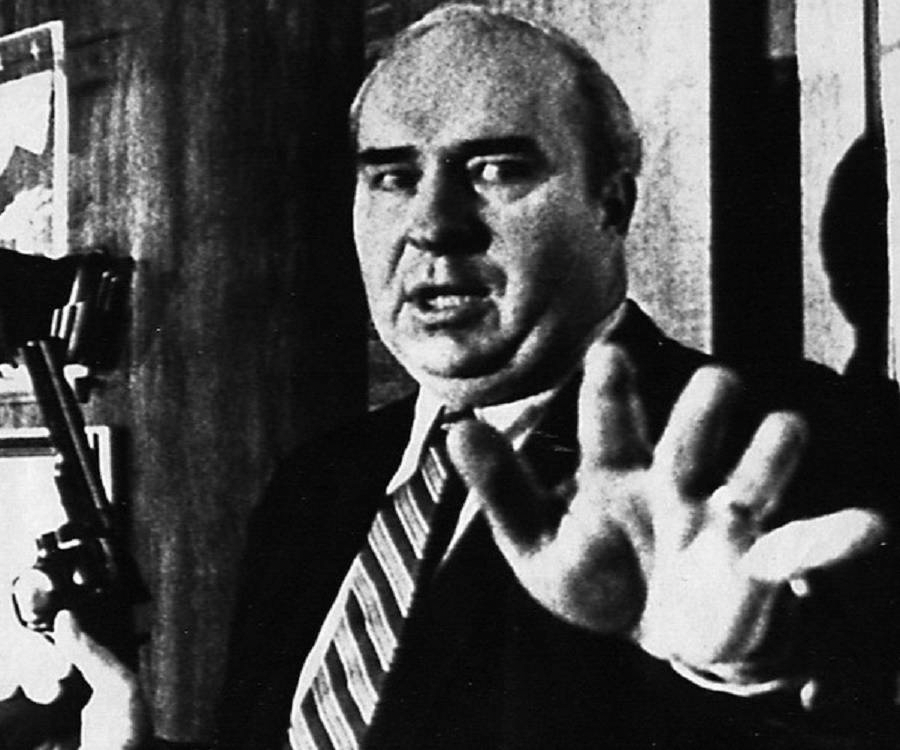

Edit: just uploaded this clip of Ralph Nader in 1971 talking about the frustration of being told of benefits that you can’t really grasp https://youtu.be/CimXZJLW_KI

this is also the marketing for quantum computing. Yes, there is a big money market for quantum computers in 2023. They still can’t reliably factor 35.

shit, I forgot about quantum computing. If you don’t game, do video production or render 3d models, you’re upgrading your computer to keep up with the demands of client-side rendered web apps and the operating system that loads up the same Excel that has existed for 30 years.

Lust for computing power is a great match for AI

i’ve literally upgraded computers in the past decade purely to get ones that can take more RAM because the web now sends 1000 characters of text as a virtual machine written in javascript rather than anything so tawdry as HTML and CSS

the death of server-side templating and the lie of server-side rendering (which practically just ships the same virtual machine to you but with a bunch more shit tacked on that doesn’t do anything) really has done fucked up things to the web

as someone who never really understood The Big Deal With SPAs (aside from, like, google docs or whatever) i’m at least taking solace in the fact that like a decade later people seem to be coming around to the idea that, wait, this actually kind of sucks

the worst part is I really despise this exact thing too, but have also implemented it multiple times across the last few years cause under certain very popular tech stacks you aren’t given any other reasonable choice

this is why my tech stack for personal work has almost no commonality with the tech I get paid to work with

React doesn’t have to suck for the user (lemmy is fast) but …

this is the thing.

6 degrees of transpiler separation.

The internet document transfer protocol needs a separation of page and app

The comp.basilisk.faq answers that question!

Every day, we pay the price for embracing a homophobe’s 10-day hack comprising a shittier version of Lisp.

@[email protected] @self @trisweb I didn’t know masto picked up lemmy posts like this

@[email protected] @[email protected] @self Yep, it’s all the same protocol. It’s pretty weird though; no indication of what platform the post really came from or how it was intended to be viewed. I could see that being useful first-class information for the reader on whatever platform they’re reading from.

Trying to remember how I even got this post. Did you boost it from your masto account?

@trisweb @[email protected] @self yeah I figured the activitypub protocol used some kind of content type definition to control where stuff was appropriately published… I never got around to actually reading the docs.

I have no idea how it came to your feed. I found it because you boosted it!

as an open source federated protocol, ActivityPub and all the apps built on top of it are required to have a layer of jank hiding just under the surface

ActivityPub is a protocol for software to fail to talk to each other

@self has tapped Lemmy with carefully aimed hammers in a few places so that we federate both ways with Mastodon, which has been pretty cool actually

seems to be on circumstances.run, which i’m on. that’s treehouse/glitch with authfetch

so not very? If your Mastodon has authfetch enabled then it doesn’t work properly. If it doesn’t, then it does. I’m on circumstances.run which has authfetch on: it receives comments from awful.systems but doesn’t seem to pass them back.

@fasterandworse @self Fediverse problems. This could… use improvement. But it’s cool that it works!

100% on point. More people must remember that everything we know about large companies’ operations is still completely valid. Leadership doesn’t understand any of the technologies at play, even at a high level. They don’t think in terms of black boxes; they think in black, amorphous miasmas of supposed function, or vibes for short. They are concerned with a few metrics going up or down every quarter. As long as the number goes up, they get paid, the dopamine hits, and everyone stays happy.

The AI miasma (mAIasma? miasmAI?) in particular is near perfect. Other technologies only held a finite amount of potential to be hyped, meaning execs had to keep looking for their next stock price bump. AI is infinitely hypeable since you can promise anything with it, and people will believe you thanks to the smoke and mirrors it procedurally pumps out today.

I have friends who have worked in plenty of large corporations and have experience/understanding of the worthlessness of executive leadership, but they don’t connect that to AI investment and thus don’t see the grift. It’s sometimes exasperating.

what i’m trying to understand is the bridge between the quite damning counter-“a.i.” works (Artificial Intelligence: A Modern Myth by John Kelly, R. Scha elsewhere, G. Ryle at the advent of the Cognitive Revolution, deriving many of the same points as L. Wittgenstein, and then P. M. S. Hacker, a daunting read indeed) and the easy think substance that seems to re-emerge every other decade. how is it that there are so many resolutely powerful indictments, and they are all being lost to what seems like a digital dark age? is it that the kool-aid is too good, that the sauce is too powerful, that the propaganda is too well funded? or is this all merely par for the course in the development of a planet that becomes conscious of all its “hyperobjects”?

I don’t claim to know any better than you, but my intuition says it’s the funding, combined with the fact that even understanding what the claims are takes a fair bit of technical sophistication, let alone understanding why they’re bullshit. The ever soaring levels of inequality (constant record highs in a couple generations at least) make it hard to realize just how much power the technocrats hold over public perception, and it can take a full lecture to explain to even an educated and intelligent person how exactly the sentences the computer man utters are a crock of shit.

And more cynically, for some people it’s the old saw about not understanding things when your paycheck depends on it.

It’s a self-repairing problem, since you can’t fool most people forever, but the sooner fewer people buy into it, the better.

Sometimes it seems like wilful ignorance

Hilarious.

Only five years ago no one in the computer science industry would have taken a bet that AI would be able to explain why a joke was funny or perform creative tasks.

Today that’s become so normalized that people are calling things thought to be literally impossible a speculative bubble because advancement that surprised everyone in the industry initially and then again with the next model a year later hasn’t moved fast enough?

The industry is still learning how to even use the tech.

This is like TV being invented in 1927 and then people in 1930 saying that it’s a bubble because it hasn’t grown as fast as they expected it to.

Did OP consider the work going on at literally every single tech college’s VC groups in optoelectronic neural networks and how that’s going to impact decoupling AI training and operation from Moore’s Law? I’m guessing no.

Near-perfect analysis, eh? By someone who read and regurgitated analysis by a journalist who writes for a living and may just have an inherent bias towards evaluating information on the future prospects of a technology positioned to replace writers?

We haven’t even had a public release of multimodal models yet.

This is about as near perfect of an analysis as smearing paint on oneself and rolling down a canvas on a hill.

> The industry is still learning how to even use the tech.

Just like blockchain, right? That killer app’s coming any day now!

> This is like TV being invented in 1927 and then people in 1930 saying that it’s a bubble because it hasn’t grown as fast as they expected it to.

That’s the exact opposite of a bubble, then. A bubble is when the valuation of some thing grows much faster than the utility it provides.

Yea sure maybe we’re still in the early stages with this stuff. We have gotten quite a bit further from back when the funny neural network was seeing and generating dog noses everywhere.

The reason it’s a bubble is because hypemongers like yourself are treating this tech like a literal miracle and serial grifters are shoehorning it into everything like it’s the new money. Who wants shoelaces when you can have AI shoelaces, the shoelaces with AI! Formerly known as the blockchain shoelaces.

holy christ shut the fuck up

Teach me!

> Did OP consider the work going on at literally every single tech college’s VC groups in optoelectronic neural networks and how that’s going to impact decoupling AI training and operation from Moore’s Law? I’m guessing no.

uhh did OP consider my hopes and dreams, powered by the happiness of literally every single American child? im guessing no. what a buffoon

> Only five years ago no one in the computer science industry would have taken a bet that AI would be able to explain why a joke was funny

Iirc it still can’t do that: if you create variants of a joke, it pattern-matches them to the OG joke and fails.

> or perform creative tasks.

Euh what, various creative tasks have been done by AI for a while now. Deepdream is almost a decade old now, and before that there were all kinds of procedural generation tools etc etc. Which could do the same as now: create a very limited set of creative things out of previous data. Same as AI now. This chatgpt cannot create a truly unique new sentence for example (A thing any of us here could easily do).

> This chatgpt cannot create a truly unique new sentence for example (A thing any of us here could easily do).

What?

Of course it can, it’s randomly generating sentences. It’s probably better than humans at that. If you want more randomness at the cost of text coherence just increase the temperature.

People tried this and it just generated the same chatgpt trite.

> Of course it can, it’s randomly generating sentences. It’s probably better than humans at that. If you want more randomness at the cost of text coherence just increase the temperature.

you mean like a Markov chain?

These models are Markov chains yes. But many things are Markov chains, I’m not sure that describing these as Markov chains helps gain understanding.

The way these models generate text is iterative. They do it word by word. Every time they need to generate a word they will randomly select one from their vocabulary. The trick to generating coherent text is that different words are more likely to happen depending on the previous words.

For example for the sentence “that is a huge grey” the word elephant is more likely than flamingo.

The temperature controls how you select your word. If it is very low you will almost always select the most likely word. Increasing the temperature will make the random choice more random, giving each word a more equal chance.

Seeing as these models function randomly there is nothing preventing them from producing unique text. After all, something like jsbHsbe d dhebsUd is unique but not very interesting.
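A minimal sketch of the temperature sampling described above, in plain Python. The candidate words and their scores are made up for illustration, and the function names `apply_temperature` and `sample_next_word` are hypothetical; a real model scores tens of thousands of tokens with a neural network, which this does not attempt to reproduce.

```python
import math
import random

# Made-up scores for the word following "that is a huge grey".
# These numbers are illustrative assumptions, not output from any real model.
NEXT_WORD_SCORES = {"elephant": 5.0, "building": 3.0, "flamingo": 0.5}


def apply_temperature(scores, temperature):
    """Convert raw scores to probabilities (a softmax) at the given temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [score / temperature for score in scores.values()]
    peak = max(scaled)  # subtract the max so exp() cannot overflow
    weights = [math.exp(value - peak) for value in scaled]
    total = sum(weights)
    return {word: weight / total for word, weight in zip(scores, weights)}


def sample_next_word(scores, temperature, rng):
    """Randomly pick one word, weighted by its temperature-adjusted probability."""
    probabilities = apply_temperature(scores, temperature)
    words = list(probabilities)
    weights = [probabilities[word] for word in words]
    return rng.choices(words, weights=weights, k=1)[0]


if __name__ == "__main__":
    rng = random.Random(0)
    for temperature in (0.1, 1.0, 100.0):
        picks = [sample_next_word(NEXT_WORD_SCORES, temperature, rng) for _ in range(10)]
        print(temperature, picks)
```

At a temperature near zero the highest-scoring word takes almost all of the probability, and at a very high temperature the three words end up close to equally likely, which is the trade-off between coherence and randomness the comment describes.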

> But many things are Markov chains

I don’t get particularly excited for algorithms from 1972 that come included with emacs, alongside Tetris and a janky text adventure but that is indeed the algorithm you’re rather excitedly describing

snore I guess

Have a browse through some threads on this instance before you talk about what the “computer science industry” was thinking 5 years ago as if this is a group of infants.

If you feel open to it, consider why people who obviously enjoy computing, and know a lot about it, don’t share your enthusiasm for a particular group of tech products. Find the factors that make these things different.

You might still disagree, you might change your mind. Whatever the fuck happens, you’ll write more compelling posts than whatever the fuck this is.

You might even provoke constructive, grown-up, discussions.

I must note that the poster in question earned the fastest ever ban from this instance, as their post was a perfect storm of greasy smarmy bullshit that felt gross to read, and judging by their post history that’s unfortunately just how they engage with information

Oh good. Their history was why I relented and wrote something. A typical king shit.

Did OP consider the work going on at literally every single tech college’s VC groups in ~~optoelectronic~~ neural networks built on optical components to improve minimisation and how ~~that’s going to impact the decoupling of AI training and operation from Moore’s Law~~ that’s one hope for making processing power gains so that the banner headlines about “Moore’s Law” are pushed back a little further? I’m guessing no.

You have the insider clout of a 15 year old with a search engine

> You have the insider clout of a 15 year old with a search engine

my god

> This is about as near perfect of an analysis as smearing paint on oneself and rolling down a canvas on a hill.

That sounds perfect to me dawg

you fucking idiot