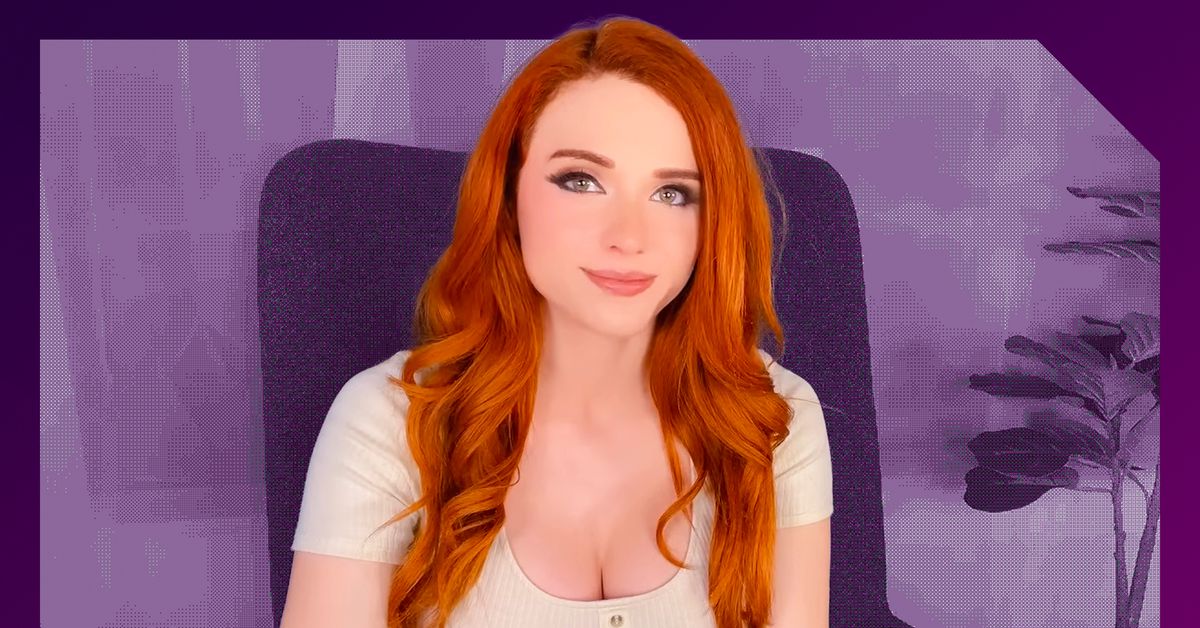

there is… a lot going on here, and it’s part of a broader trend which is probably not for the better and speaks to some deeper-seated issues we currently have in society. a choice moment from the article, on another influencer doing a similar thing earlier this year and how that went:

> Siragusa isn’t the first influencer to create a voice-prompted AI chatbot using her likeness. The first would be Caryn Marjorie, a 23-year-old Snapchat creator, who has more than 1.8 million followers. CarynAI is trained on a combination of OpenAI’s ChatGPT-4 and some 2,000 hours of her now-deleted YouTube content, according to Fortune. On May 11, when CarynAI launched, Marjorie tweeted that the app would “cure loneliness.” She’d also told Fortune that the AI chatbot was not meant to make sexual advances. But on the day of its release, users commented on the AI’s tendency to bring up sexually explicit content. “The AI was not programmed to do this and has seemed to go rogue,” Marjorie told Insider, adding that her team was “working around the clock to prevent this from happening again.”

The AI does exactly what it was programmed to do. It’s a machine. They fucked up making it, that’s the reality here.

The cynic in me says they didn’t actually fuck up. The AI being horny brought more attention to it and playing it off like “oh no! it went rogue!” gives them even more headlines. All a strategic play.

Eh, all chatbots trained on internet data become horny in the same way that virtually all AI is racist. It’s merely a reflection of the data it’s trained on, but a lot of people think they can magically control it by putting ‘safeguards’ in place after it’s been trained. The constant game of cat and mouse between GPT-3/4’s safeguards and the prompts that get it to ignore them is proof this doesn’t work.

You can fix this through additional weighted layers and more complex upstream training tweaks, but then you have to pay to retrain, which is by far the most expensive part of OpenAI’s model.

You can also curate the training data so that it’s not problematic, but then you are biasing the model in other ways.

Yeah, I laughed at that part. The bot does what it interprets from the training data. Her entire schtick is leaning into sexual overtones to keep the guys subscribed. The fact that the bot started replicating that is entirely expected.

The trick there is that there is a fine line she walks between keeping it overt enough to keep the guys interested, but not so forward as to cross the terms of service boundaries on Twitch/YouTube. I think the problem is really that the bot isn’t adhering to the “tone it down so we don’t get banned from the service” unwritten rule. I’m not sure if I’d call that “rogue” though.

But this is a great use case for an LLM, and if she can capitalise on it then good on her.

@alyaza @maynarkh This story of LLMs gone rogue is just a cover to avoid being held accountable for the failures of the machines.