- cross-posted to:

- [email protected]

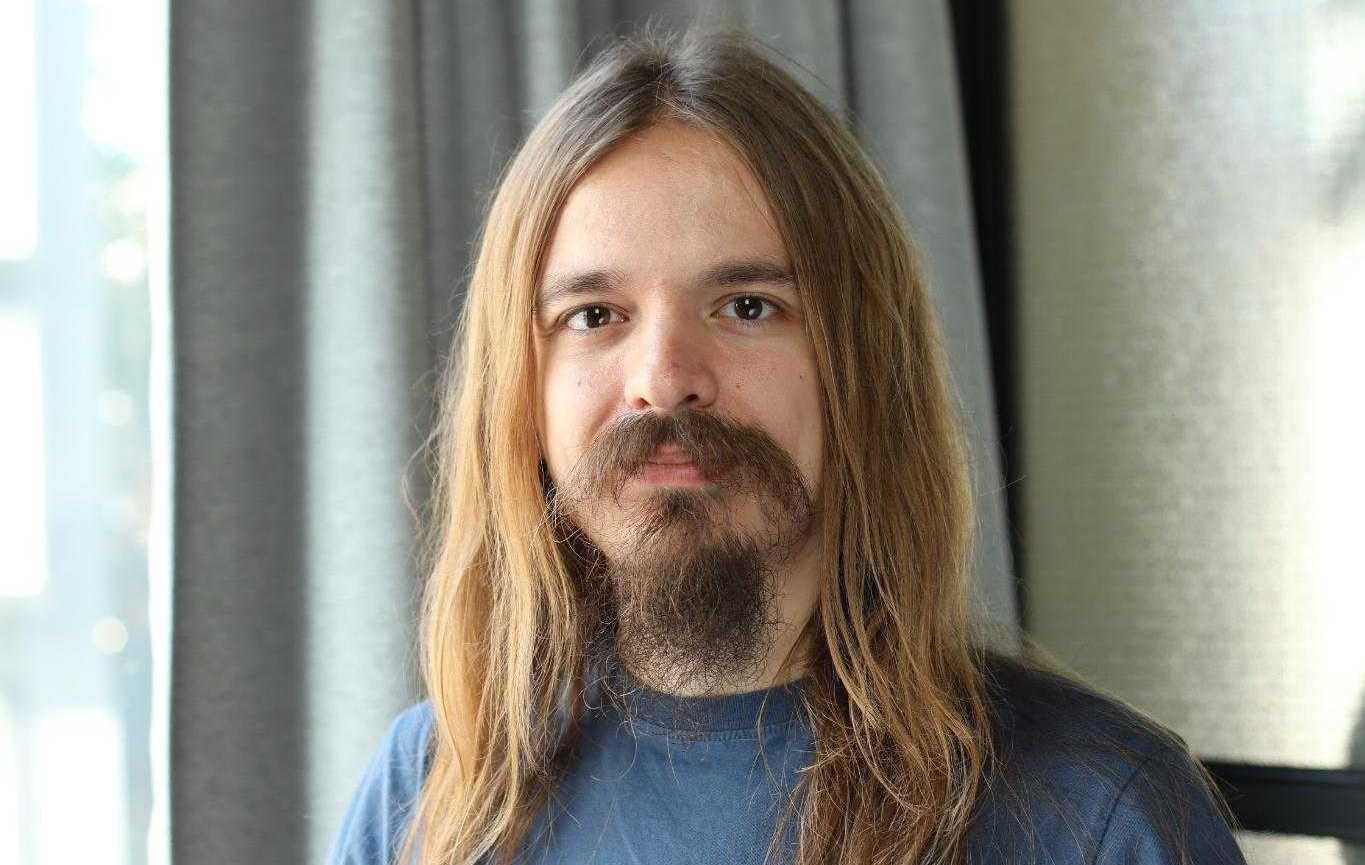

To Stop AI Killing Us All, First Regulate Deepfakes, Says Researcher Connor Leahy::AI researcher Connor Leahy says regulating deepfakes is the first step to avert AI wiping out humanity

It’s almost hard to imagine getting through this election cycle without at least one deepfake crisis.

It does seem like reacting to a deepfake event is the only way anything will change there.

Roger Stone already alleged that an audio clip was AI-generated.

The clip said:

It’s time to do it. Let’s go find Swalwell. It’s time to do it. Then we’ll see how brave the rest of them are. It’s time to do it. It’s either Swalwell or Nadler has to die before the election. They need to get the message. Let’s go find Swalwell and get this over with. I’m just not putting up with this shit anymore.

Some day soon hackers will break into some national broadcast and play a deepfake of the president announcing a terrorist attack or nuclear war. The question is, will the world respond before verifying it’s real?

Hmm, I wonder what he greased these slopes with… butter? Lard? Margarine?

AI safety is not a slippery slope argument. It’s a serious area of academic research. Check out some Robert Miles videos or something.

As the interview says, the head-in-the-sand-style rebuttal is akin to early climate change denial.

Regulating AI will drive it underground, and corporations will still develop it in secret, because the military doesn’t care which regulations its weapons might breach.

If we develop AI that works, then no one will resort to AI that eats your face off.

ETA: Corporations developing AI in secret will go full Stockton Rush, since launching with dangerous AI risks less profit loss than playing it safe. We’ve already had this conversation.

However, extinction by AI takeover is way cooler than extinction by overpollution, in my opinion.

Regulate does not equal stop, or even really slow for that matter. There are a number of measures we can mandate that wouldn’t slow any real research, but that would curtail malicious activity, like mandating some form of detection research to go alongside models, or pushing for better watermarking technology for genuine content.

Regulating means defining and proscribing activity the state asserts is harmful, for example requiring packing and sell-by dates on meat to prevent the sale of hazardously old meat.

And yes, having to mind regulations absolutely cuts into profits and increases development time, especially once you consider how your regulations are going to be enforced. Since there are already markets for unethical AI applications, for instance, autonomous weapons platforms, some research and development programs are already clandestine so as to avoid close scrutiny. Since the US state is interested in some of them, it’s already motivated not to look too closely.

Besides which, the whole federal regulatory sector is already captured and interested not in serving the public, but in serving stakeholders, which is why we’re still waiting on net neutrality and antitrust action on ISP regional monopolies.

…Or does he?

!!DUN DUN DUUUUUUUHN!!

Or did his deepfake say this?

You gotta bring the needle down in a stabbing motion to pierce the breastplate

Never going to happen. They found money in AI. It’s only going to get worse.

Ban photoshop, I dare ya.

Cause prohibition will totally stop AI girlfriend weebs

Pretty frustrating interview, I didn’t grasp what his actual issues are with AI. I guess I’ll look for other articles somewhere else.

I read the article and I have no idea what you are referring to. I think the author laid out their reasoning pretty…

Oh wait. You didn’t put the /s at the end, but it was implied?

Did that whoosh over my head, or are you not making sense? (no offense)

You also have to target the people who are building this technology

WTF is this nonsense take? So he’s essentially trying to ban AI research entirely.