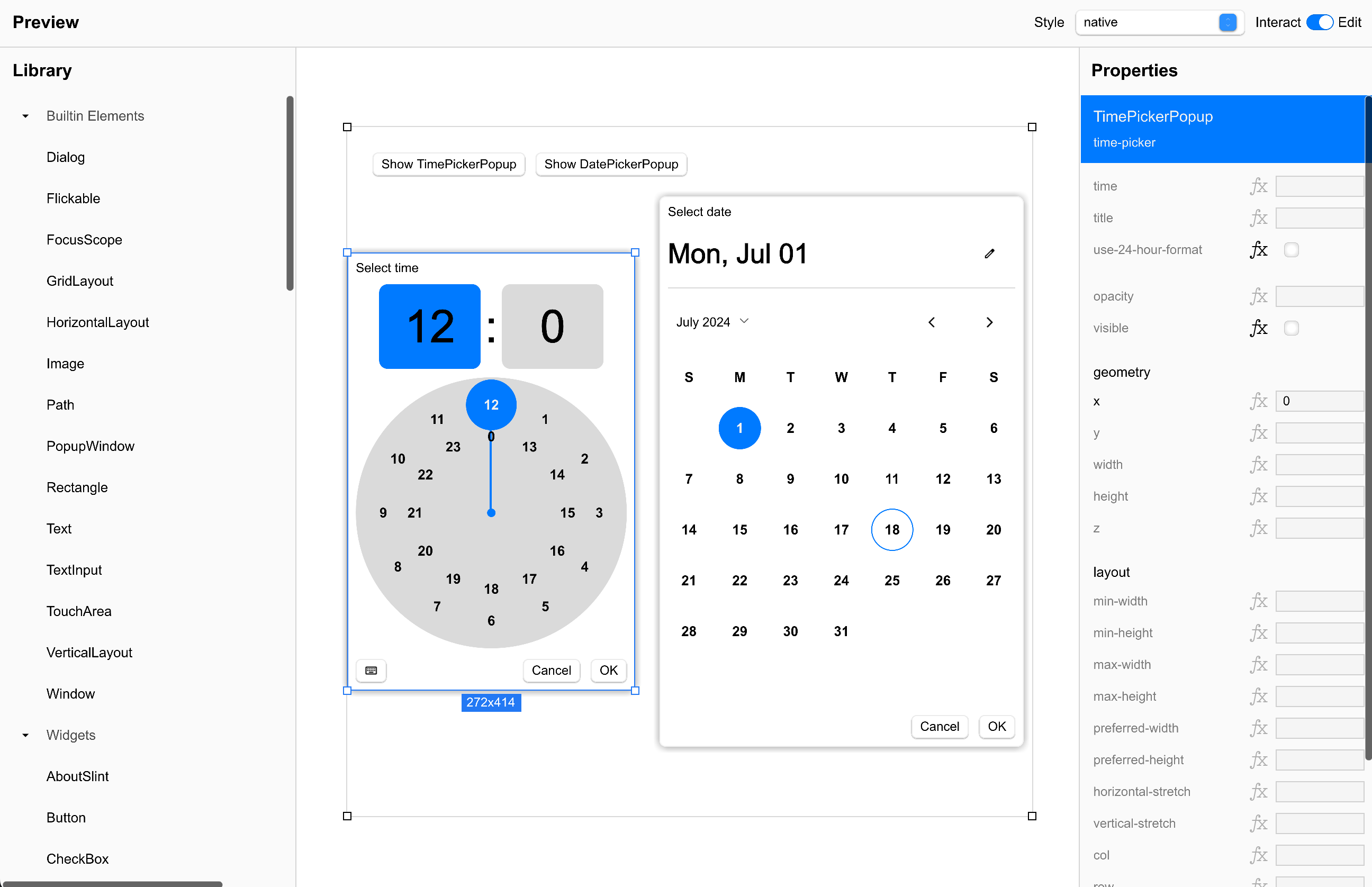

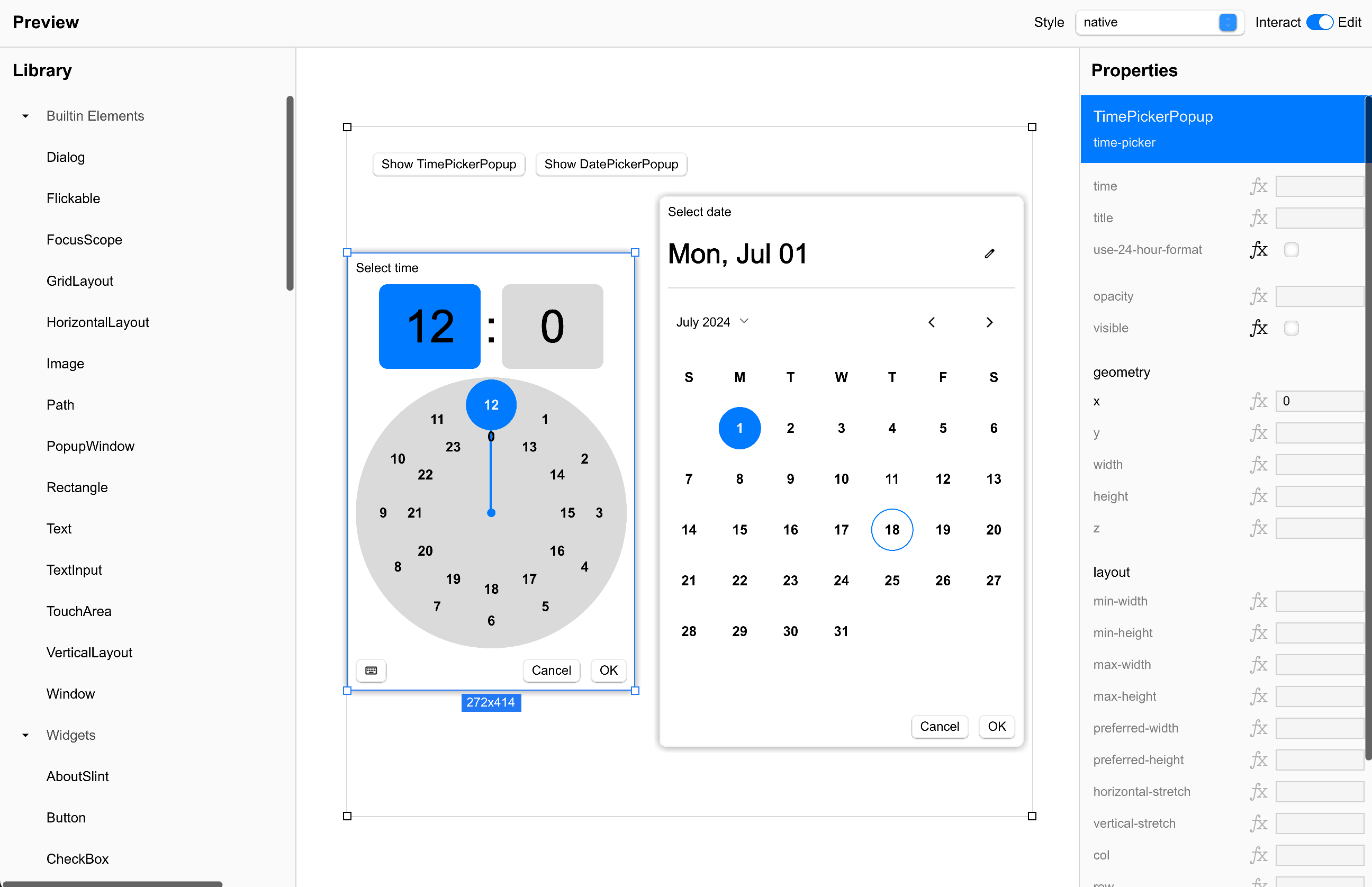

You are technically correct: Slint is free software. You can get Slint under GPL or commercial terms – or under the royalty-free license. The latter lets you do whatever you want anywhere, with the exception of “embedded” (this exception makes it not open source).

When you contribute to any MIT-licensed project you are in the same situation: your code will be redistributed by some company under different license terms. That’s the point of MIT & Co. You contribute MIT code to a project, the project releases its code under MIT, and a company consumes the project and restricts its use. Slint is just cutting out the middle step here.

Disclaimer: I work for Slint and appreciate being paid for contributing to open source software. I also appreciate Slint being free software.

You contribute code to Slint under MIT, and you can also use that contributed code under MIT or any other license of your choosing; it stays your code, after all. You cannot use other people’s code from the Slint repo under MIT though, that is correct. The royalty-free license tries to get as close to MIT as we can while limiting the use on embedded… but with that limitation in place it is of course not an open source license.

Contributing back to Slint is in no way required, so if you do not like our contribution terms, you are free not to contribute. You are also free to use something else if you do not like our license terms.

We try to make all of the terms as clear as possible. We rewrote the Slint licensing page several times, often with extensive community feedback, to get it as clear as it is right now. If you have ideas on how we can improve, I am all ears.