Not so much “enjoy” as “remember at all, unlike most of the other games I’ve played in the last 10 years or so”, but I take your point.

Not so much “enjoy” as “remember at all, unlike most of the other games I’ve played in the last 10 years or so”, but I take your point.

KDE showing how it should be done:

https://mail.kde.org/pipermail/kde-www/2025-October/009275.html

Question:

I am curious why you do not have a link to your X social media on your website. I know you are just forwarding posts to X from your Mastodon server. However, I’m afraid that if you pushed for more marketing on X—like DHH and Ladybird do—the hype would be much greater. I think you need a separate social media manager for the X platform.

Response:

We stopped posting on X for several reasons:

- The owner is a nazi

- The owner censors non- nazis and promotes nazis and their messages

- (Hence) most people who remain on X or are clueless and have difficulty parsing written text (one would assume), or are nazis

- Most of the new followers we were getting were nazi-propaganda spewing bots (7 out of 10 on average) or just straight up nazis.

Our community is not made up of nazis and many of our friendly contributors would be the target of nazi harassment, so we were not sure what we were doing there and stopped posting and left.

We are happy with that decision and have no intention of reversing it.

I got more “the thing” vibes, tbh.

That’s depressing… I really liked the music direction of halo. It really stood out to me in a way that other games never manage. I can still hum the halo theme and a bunch of its score, but I’d be hard pressed to do that with any other game… I know the elder scrolls theme, I guess, but can’t remember much else about their sound design.

Yeah, skintight palmtop hologram cortana certainly ticked some boxes there, but in-universe it was all a bit “everyone is beautiful, no-one is horny”, with a side order of “all assistants should be female and sexy”, to my mind at least.

And on the subject of microsoft, this is a splendid way to describe the both that specific company, the us tech sector as a whole and entire us government for that matter:

“We will build the tools of genocide, but never a sex bot” is such a condemnation of American society lolsob

https://xoxo.zone/@Ashedryden/115452105359019979

It was posted in reference to this article on the MIT technology review site, which gets an archive link because it has two overlapping cookie opt-out popups: https://archive.is/KhMqT

It is an interview with microsoft’s mustafa suleyman, their head of ai. For all he claims to think that chatbots pretending to be people is bad, I don’t see him actually doing a whole lot about it.

I might be behind the curve on this one, but ice are now using halo (the computer game) images in recruitment ads, and referring to immigrants (and people who look like immigrants, i guess) as “the flood”, the all-consuming alien horde who are one of the antagonists of the series.

Given how microsoft are happy to contribute to the development of the epstein ballroom, I can only assume that they’re cool with all this.

https://aftermath.site/microsoft-halo-dhs-ice-trump-flood

A screenshot of a twitter post by the department of homeland security, showing an image from the halo video game series and the text “finishing this fight”, “destroy the flood” and a link to “join ice gov”.

I can imagine a world where AI becomes extremely good at flipping burgers or driving cars well before it learns how to write software or […] protein folding or playing board games

That’s because despite Moravec’s paradox being noted down in the 80s (y’know, the last ai winter) there’s still a certain kind of asshole who thinks that flipping burgers is an easy task performed by stupid people but playing go is somehow the height of human intellect, despite 40+ years of evidence to the contrary.

deleted by creator

But of course they named it “atlas”. Openai is clearly the work randian supermen.

Also, anil sounds like he might be a little out of touch with regards to how people search these days. Careful keyword searching isn’t even as useful as it used to be, given the damage google et al have done to their own products.

(also also, interactive fiction has marched on a little since zork and infocom were the latest and greatest things, but I accept that most people won’t have noticed)

There are various other posts here on the general subject.

https://awful.systems/post/5897965

Omarchy is a deeply uninteresting and partially-assed project that has been thrust into the limelight because if the creator’s political leanings, not because of any merits it might have.

I see there’s at least one big fan of Moldbug still trying to implement his perfect neofeudal state.

https://www.wired.com/story/elon-musk-wants-strong-influence-over-the-robot-army-hes-building/

My fundamental concern with regard to how much voting control I have at Tesla is, if I go ahead and build this enormous robot army, can I just be ousted at some point in the future?

If we build this robot army, do I have at least a strong influence over this robot army? Not control, but a strong influence … I don’t feel comfortable building that robot army unless I have a strong influence.

I’m sure this is fine, largely because he is an idiot. Probably bad news for other shareholders and customers though.

Anyone else getting “when I die, you’re all joining me in my mausoleum” vibes from musk?

Mmm. There’s certainly nothing else about any of the people or projects involved that’s likely to be a source of fuss, either.

No sir, nothing but apolitical dramaless software development as far as the eye can see.

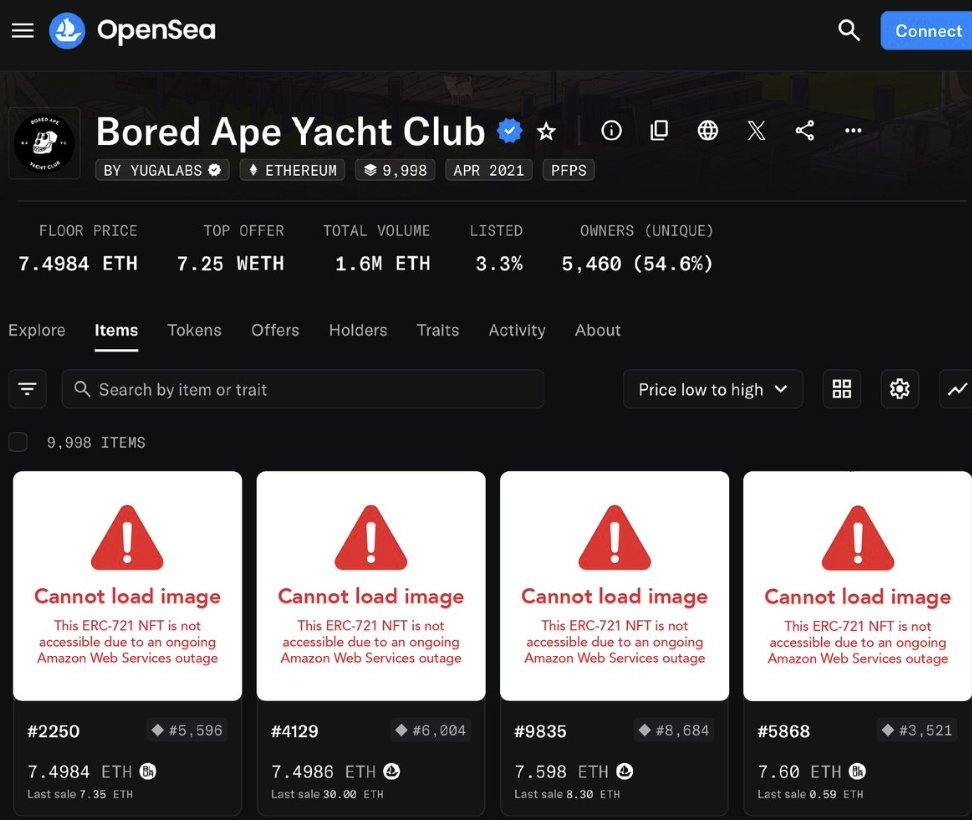

I know nfts are old news now, but:

lol, decentralisation.

A screenshot of some boardape nfts on opensea. All the actual images are replaced with an error message saying “this nft is not available due to an ongoing AWS outage”

What, are you telling me you’re not prepared to share your most intimate details with elon musk’s edgelord/waifu simulator in order to let it pretend to be you well enough to fool a bunch of professionals who should know better, and let it decide whether you should live or die? With a marketing pitch like that, who could possibly refuse?

Somehow I missed the fact that yesterday paypal’s blockchain operator fucked up and accidentally minted 300 trillion itchy and scratchy coins.

https://www.web3isgoinggreat.com/?id=paxos-accidental-mint

And now apparently it turns out that it was just a sequence of stupid whereby they accidentally deleted 300 million, which would have been impressive all by itself, then tried to recreate it (🎶 but at least it isn’t fiat currency🎶) and got the order of magnitude catastrophically wrong and had to delete that before finally undoing their original mistake. Future of finance right here, folks.

Anyone else know the grisly details? The place I heard it from is a mostly-private account on mastodon which isn’t really shareable here, and they didn’t say where they’d heard it.

Interesting developments reported by ars technica: Inside the web infrastructure revolt over Google’s AI Overviews

I don’t think any of this is actually good news for the people who’re actually suffering the effects of ai scraping and bullshit generation, but I do think it is a good idea that someone with sufficient clout is standing up to google et al and suggesting that they can’t just scrape al the things, all the time, and then screw the source of all their training data.

I’m somewhat unhappy that it is cloudflare doing this, a company who have deeply shitty politics and an unpleasantly strong grasp on the internet already. I very much do not want the internet to be divided into cloudflare customers, and the slop bucket.

deleted by creator

Aside from the name being stupid and annoying (and their justifications and comparisons being additionally stupid and annoying) I’m… not entirely against this license? As a less shitty (AFAICT) version of the BUSL, I’d rather companies used this than the BUSL or just going closed-source (which is an option a bunch of firms have chosen, after all).

I’m attempting to get my employer to open-source some of our stuff, and there are several people at the top who are proprietary software folk at heart and this seems like a reasonable way to trick them into relaxing a their grip a little.

Apparently someone has managed to wrangle a bunch of preprogrammed biases out of grok. There’s nothing unexpected here, and the source isn’t great, but might be worth a look.

https://www.thecanary.co/skwawkbox/2025/10/31/grok-admits-its-constructed-to-protect-israel/

Seems like fairly generic us right-wing thought, glazed with the requirement to hype elon.