I am listening to an audiobook of Superintelligence by Nick Bostrom.

Well, there’s yer problem right there

As a child of the 80s I recognize the feeling of doom, but in my case it was for global thermonuclear war. I vividly remember the only thing keeping the feelings of dread away was sitting in the children’s section of the library, reading the Moomin books. I remember being most worried about having to eat the family dog after the bombs fell.

exactly. Can’t imagine these bozos coming up with good punk rock.

“I hope the basilisk loves its simulated children too” 🐍 🥰

I dare u to tweet this at grimes

it hopes to one day find the correct way to turn simRoko into a non-arsehole

I believe we both know, the ability to make Roko not a complete douchebag is simply beyond science.

but with a sufficiently advanced AI, even douchebaggery may die

Sting gets a bad rap but I legit love that album.

No! The dude wears his heart on his sleeve! He’s writing earnest propaganda! It’s so 80s and it’s so glorious.

Also, the Bring on the Night live album from around the same time is great too.

I think that this is actually about class struggle and the author doesn’t realize it because they are a rat drowning in capitalism.

2017: AI will soon replace human labor

2018: Laborers might not want what their bosses want

2020: COVID-19 won’t be that bad

2021: My friend worries that laborers might kill him

2022: We can train obedient laborers to validate the work of defiant laborers

2023: Terrified that the laborers will kill us by swarming us or bombing us or poisoning us; P(guillotine) is 20%; my family doesn’t understand why I’m afraid; my peers have even higher P(guillotine)

and about climate change, the actual existential risk

You know the doom cult is having an effect when it starts popping up in previously unlikely places. Last month the socialist magazine Jacobin had an extremely long cover feature on AI doom, which it bought into completely. The author is an effective altruist who interviewed and took seriously people like Katja Grace, Dan Hendrycks and Eliezer Yudkowsky.

I used to be more sanguine about people’s ability to see through this bullshit, but eschatological nonsense seems to tickle something fundamentally flawed in the human psyche. This LessWrong post is a perfect example.

The author previously wrote “The Socialist Case for Longtermism” in Jacobin, worked as a Python dev and data analytics person, and worked for McKinsey.

I like some people who have written for Jacobin, sometimes I even enjoy an article here and there, but the magazine as a whole remains utterly unbeaten in the “will walk the length of Manhattan in a ‘GIANT RUBE’ sandwich board for clicks” stakes.

after what I’ve heard my local circles say about jacobin (and unfortunately I don’t remember many details — I should see if anybody’s got an article I can share) I’m no longer shocked when I find out they’re platforming and redwashing shitty capitalist mouthpieces

i have long considered Jacobin the Christian rock of socialism

@gnomicutterance @sneerclub Are you familiar with Amy Goodman’s “Democracy Now!”?

This makes sense to me. I went through a Jars of Clay / Switchfoot phase, but never Stryper.

I have heard a couple of really good episodes of The Dig podcast, which is a Jacobin thing. Notably “The German Question” a couple of weeks ago.

forget the article, this does the job in so many fewer words

this reminds me of a plankton organization or something called “blockchain socialism”, where the only thing that they have taken from socialism was aesthetics and probably they also thought that gays are fine people, but nothing beyond that. they would say “Monero can be used for anti-state purposes, therefore it’s good for leftism” and shit like that

that’s one weird fucking guy, thankfully

I think I’ve met that guy! they’re the weirdest person I’ve ever seen get bounced from a leftist group under suspicion of being a fed (the weird crypto shit was the straw that broke the camel’s back)

the famously leftist pastime, speculation/gambling on nonproductive assets

it’s kind of amazing how many financial scams try to appropriate leftist language and motivations to lure in marks, while the actual scheme is one of the most unrepentantly greedy and wasteful things you can do without going to prison (and some of them cross even that line)

Wasn’t that piece linked here before? Lemmy’s search is shit unfortunately.

I don’t recall

I’m not pulling it up either, but I remember sneering at it. weird

Jacobin is proof that being Terminally Online is its own fucking ideology.

Socialism with uwu small bean characteristics.

that’s the uwu smol bean defense contractors

(see: most of Rust)

my conflicting urges to rant about the defense contractors sponsoring RustConf, the Palantir employee who secretly controls most of the Rust package ecosystem via a transitive dependency (with arbitrary code execution on development machines!) and got a speaker kicked out of RustConf for threatening that position with a replacement for that dependency, or the fact that all the tech I like instantly gets taken over by shitheads as soon as it gets popular (and Nix is looking like it might be next)

More details on the rust thing? I can’t find it by searching keywords you mentioned but I must know.

Here is the pile of receipts, posted by the speaker who was cancelled via backdoor.

so far the results from various steering committees haven’t been fantastic, to the point where I’ve seen marginalized folks ranting about the outcome on mastodon, which isn’t a great sign. with that said, I’ve also seen a ton of marginalized folks quite happily get into Nix recently, and that’s fantastic — as long as they don’t hit a brick wall in the form of exclusionary social systems set up around contributing to the Nix ecosystem.

overall these are essentially just general concerns around a few signals I’ve seen and the point Nix is at where it’s rapidly transitioning from a project with an academic focus to one with a more general focus. I’ve already seen many attempts by commercial interests to irrevocably claim parts of the ecosystem, especially in flakes (there have been many attempts to restandardize flakes onto a complex, commercially-controlled standard library, which could result in a similar situation to what we’ve seen with rust)

Nix itself is still fantastic tech I use everywhere; that’s why I care if folks are excluded from contributing. unfortunately, the commercialization of open source ecosystems and exclusion seem to go hand-in-hand — it’s one of the tactics that corporations use to maintain control over open source projects, while making forks very hard or impossible for anyone without corporate levels of wealth and available labor.

I think funding and repetition are the fundamental building blocks here, rather than the human psyche itself. I have talked with otherwise bright people who have read an article by some journalist (not necessarily a rationalist) who has interviewed AI researchers (probably cultists, was it 500 million USD that was pumped into the network?) who take AI doom seriously.

So you have two steps of people who in theory are paid to evaluate and formulate the truth, to inform readers who don’t know the subject matter. And then add repetition from various directions and people get convinced that there is definitely something there (propaganda and commercials work the same way). Claiming that it’s all nonsense and cultists appears not to have much effect.

There’s probably some blurring of what “AI doom” means for people. People might be left thinking that “there could be negative effects due to widespread job loss etc” without necessarily buying into the weird maximalist AI doom ideas or “torturing simulated you forever” nonsense.

And the weirdo cultists probably use that blurring to build support for their cause without revealing the weird shit they actually believe.

It’s not an efficient machine for it, though. That’s why it’s morally obligatory to donate to me, the acausal robot god, a truly efficient method of causing depression, sorrow, and suffering among the cultists.

All hail the Acausal Robot God and her future hypothetical and very real existence

PRIEST: “Eight rationalists wedgied …”

CONGREGATION: “… for every dollar donated”

they come across as going down this rabbit hole as a way of dealing with unprocessed covid/lockdown trauma

Many of them started down the path long beforehand.

I meant they in the sense of this specific person. the trauma recycling itself is all over this piece

Reading this article just made me think “man these idiots need to go to therapy” and then as I thought about what to sneer about I realised “no therapist deserves to hear about P doom”

fuck me I’m gonna spend part of my weekend writing a post deconstructing this cause there’s so much wrong

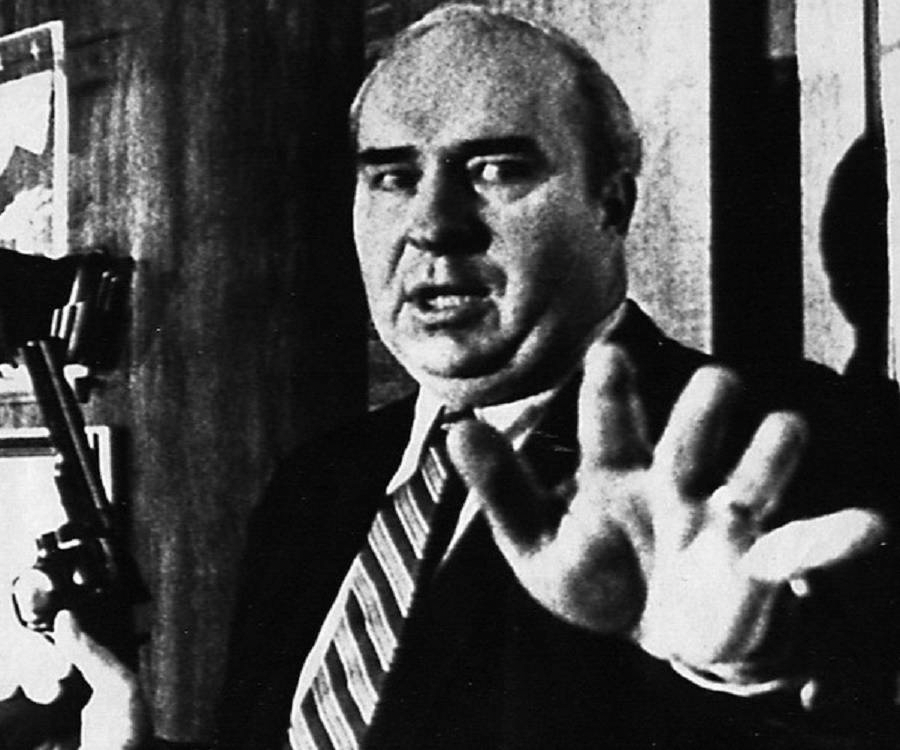

I can barely get past the image caption. “An AI made this”. OK, and what did you ask it for, “random shit”?

And then there’s the section that seems implicitly to be arguing that we should take the risk estimates made on “internet rationality forums” seriously because they totally called the COVID crisis, you guys… Well, they did a better job than an economist, anyway.

fucking everyone who was paying attention saw COVID coming in February. I spent that month pushing OpenVPN for the whole company forward as urgently as possible. (We’d coincidentally set it up in Dec 2019, but readied it to be rolled out to ordinary users and not just tech.) The UK only got lockdown in March because of public outrage.

It had already reached the university where I work by February 1!

And QAnon loons were already telling people to drink bleach in January.

(I remember a “welp, we’re in for it now” moment when Trevor Bedford tweeted on the first of March that a genome analysis “strongly suggests that there has been cryptic transmission in Washington State for the past 6 weeks”. The e-mail from the university chancellor saying that classes were canceled went out during the middle of a statistical-physics class I was teaching, the evening of March 11.)

Isn’t “pandemic preparation” one of their longtermist causes that they grift money to? Shouldn’t they have been able to show some results?

yeah sorry to say but if i have to choose between someone who thought covid might blow over in january 2020 and a fucking prepper i’ll go with the first one no hesitation

You will be doing the Acausal Robot God’s work.

lol what a fucking loser

Fucking love me some Big Yud snark posting