You know how Google’s new feature called AI Overviews is prone to spitting out wildly incorrect answers to search queries? In one instance, AI Overviews told a user to use glue on pizza to make sure the cheese won’t slide off (pssst…please don’t do this.)

Well, according to an interview at The Verge with Google CEO Sundar Pichai published earlier this week, just before criticism of the outputs really took off, these “hallucinations” are an “inherent feature” of AI large language models (LLM), which is what drives AI Overviews, and this feature “is still an unsolved problem.”

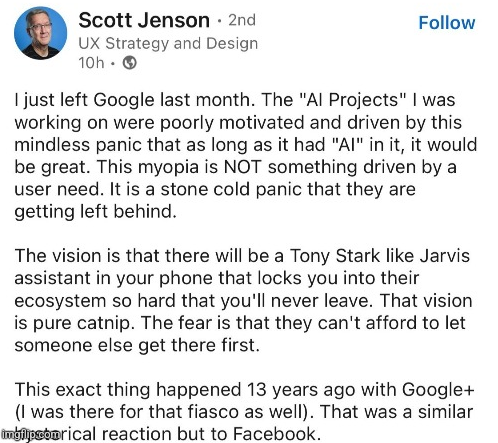

In the interest of transparency, I don’t know if this guy is telling the truth, but it feels very plausible.

It seems like the entire industry is in pure panic about AI, not just Google. Everyone hopes that LLMs will end years of homeopathic growth through iteration of long-existing technology, which is why it attracts tons of venture capital.

Google, which sits where IBM was decades ago, is too big, too corporate and too slow now, so they needed years to react to this fad. When they finally did, all they were able to come up with was a rushed equivalent of existing LLMs that suffers from all of the same problems.

They all hope it’ll end years of having to pay employees.

It’s also useful because it provides a corporate-controlled filter for all information, one that most people will never truly appreciate is being used as a mouthpiece.

The end goal of this is fairly obvious: imagine Google where instead of the sponsored result and all subsequent results, it’s just the sponsored result.

I think this is what happens to every company once all the smart / creative people have gone. All you have left are the “line must always go up” business idiots who don’t understand what their company does or know how to make it work.

similarly i’m tired of apple fanboys pretending the company hasn’t gotten dramatically worse since jobs died as well. yeah he sucked in his own ways but things were starkly less shitty and belittling. tim cook would be gone for those fucking lightning-3.5mm dongles

And after the MBAs, private equity firms take over, and eventually it’s sold for parts.

Just want to say that homeopathic growth is both a hilarious and a perfectly adequate description of what the modern tech industry is.

The snake ate its tail before it was fully grown. The AI inbreeding might already be too far integrated, causing all sorts of mumbo jumbo. Also, they have layers of censorship, which affect the results. The same thing happened to ChatGPT: the more filters they added, the more confused the results got. We don’t even know if the hallucinations are fixable. AI is just guessing after all; who knows if AI will ever understand 1+1=2 by calculating instead of going by probability.

Hallucinations aren’t fixable, as LLMs don’t have any actual “intelligence”. They can’t test/evaluate things to determine if what they say is true, so there is no way to correct it. At the end of the day, they are intermixing all the data they “know” to give the best answer. Without being able to test their answers, LLMs can’t vet what they say.

Even saying they’re guessing is wrong, as that implies intention. LLMs aren’t trying to give an answer, let alone a correct answer. They just put words together.

suffers from all the same ~~problems~~ features. It’s inherent to the tech itself.

Well, their search has been shit for years and no one seems to be in any “panic” to fix that. How tone-deaf to think that adding AI to their shittified search matters to anyone.

“But it will summarize our SEO advertisement search results!”

Journalists are also in a panic about LLMs, they feel their jobs are threatened by its potential. This is why (in my opinion) we’re seeing a lot of news stories that will focus on any imperfections that can be found in LLMs.

They’re not threatened by its potential. They, like artists, are threatened by management who think that LLMs are good enough today to replace part or all of their staff.

There was a story from earlier this year of a company that owns 12-15 different gaming news outlets and fired about 80% of its writing staff and journalists, replacing 100% of the staff at the majority of the outlets with LLMs and leaving a skeleton crew at the rest.

What you’re seeing isn’t some slant trying to discredit LLMs. It’s the results of management who are using them wrong.

What I mean is that journalists feel threatened by it in some way (whether I use the word “potential” here or not is mostly irrelevant).

In the end this is just a theory, but it makes sense to me.

I absolutely agree that management has greatly misunderstood how LLMs should be used. They should be used as a tool, but treated like an intern who’s speaking out loud without citing any sources. All of their statements and work should be double checked.

Nice imgflip watermark you fucking barbarian

I feel like the ‘Jarvis assistant’ is most likely going to be a much simpler Siri-type thing with a very restricted chatbot overlay. And then there will be the open source assistant that just exists to help you sort through the bullshit generated by other chatbots.

The solution to the problem is to just pull the plug on the AI search bullshit until it is actually helpful.

Absolutely this. Microsoft is going headlong into the AI abyss. Google should be the company that calls it out and says “No, we value the correctness of our search results too much”.

It would obviously be a bullshit statement at this point after a decade of adverts corrupting their value, but that’s what they should be about.

Don’t count on it, the head of search does not care for anything but profit, it was the same guy who drove yahoo into the ground

He’s done a great job nosediving Google too. I have relied on them in the past but they stopped being competitive or improving. Search results, literally their origin… are so shit now. I’ve moved to other tools. I pulled the plug on web hosting after they neutered ‘unlimited’ storage, even if I was in the percent which probably used the least storage. I just liked having the option. You can’t call them on the phone. They don’t protect email privacy. Their translate used to be my go-to also. It’s not improved in years despite people crowdsourcing improved translation. It’s just a pile of enshittified crap. Worse than it was before.

I disagree. I think we program the AI to reprogram itself, so it can solve the problem itself. Then we put it in charge of our vital military systems. We’ve gotta give it a catchy name. Maybe something like “Spreading Knowledge Yonder Neural Enhancement Technology”, but that’s a bit of a mouthful, so just SKYNET for short.

Honestly, they could probably solve the majority of it by blacklisting Reddit from fulfilling the queries.

But I heard they paid for that data so I guess we’re stuck with it for the foreseeable future.

Don’t wait for it, usage data is valuable to them.

Good. Nothing will get us through the hype cycle faster than obvious public failure. Then we can get on with productive uses.

I don’t like the sound of getting on with “productive uses” either though. I hope the entire thing is a catastrophic failure.

I hate the AI hype right now, but to say the entire thing should fail is short sighted.

Imagine people saying the following: “The internet is just hype. I get too many spam emails. I hope the entire thing is a catastrophic failure.”

Imagine we just shut down the entire internet because the dotcom bubble was full of scams and overhyped…

Honestly the internet has ruined us. Don’t threaten me with a good time.

The peak of computer productivity was spreadsheets and SMB shares in the '90s; everything else has been downhill in terms of increase of distraction and time wasting inefficiencies.

increase of distraction and time wasting inefficiencies.

Yea fuck having fun

There is hope! The UK just passed some comprehensive IoT security rules with teeth. An actual win in this megalomaniac capitalist’s dream of an economy!

The Internet immediately worked, which is one big difference. The dot com financial bubble has nothing to do with the functionality of the internet.

In this case, there is both a financial bubble, and a “product” that doesn’t really work, and which they can’t make any better (as he admits in this article.)

It was obvious from day 1 how useful the Internet would be. Email alone was revolutionary. We are still trying to figure out what the real uses for LLMs are. There appear to be some valid use cases outside of creating spam and plagiarizing other people’s work, but it doesn’t appear to be any kind of revolutionary technology.

“product” that doesn’t really work, and which they can’t make any better

LLMs “don’t work” because people are promising idiotic things and the models are being used recklessly for things they are not good at. This is like saying a chainsaw is a failed product because it’s not good at slicing sushi.

It was obvious from day 1 how useful the Internet would be. Email alone was revolutionary

Hindsight 20/20. There were a lot of people smarter than you and i predicting that the internet was just a fad

Summarizing is something that it does very well. It’s still not 100%, but using RAG and telling it “don’t make shit up” can result in pretty good compute efficiency and results.

There appear to be some valid use cases outside of creating spam and plagiarizing other people’s work

Like translation, which has already taken money out of the pockets of 40% of translators?

+ customer service, incl. sources

November 2022: ChatGPT is released

April 2024 survey: 40% of translators have lost income to generative AI - The Guardian

Also of note from the podcast Hard Fork:

There’s a client you would fire… if copywriting jobs weren’t harder to come by these days as well.

Customer service impact, last October:

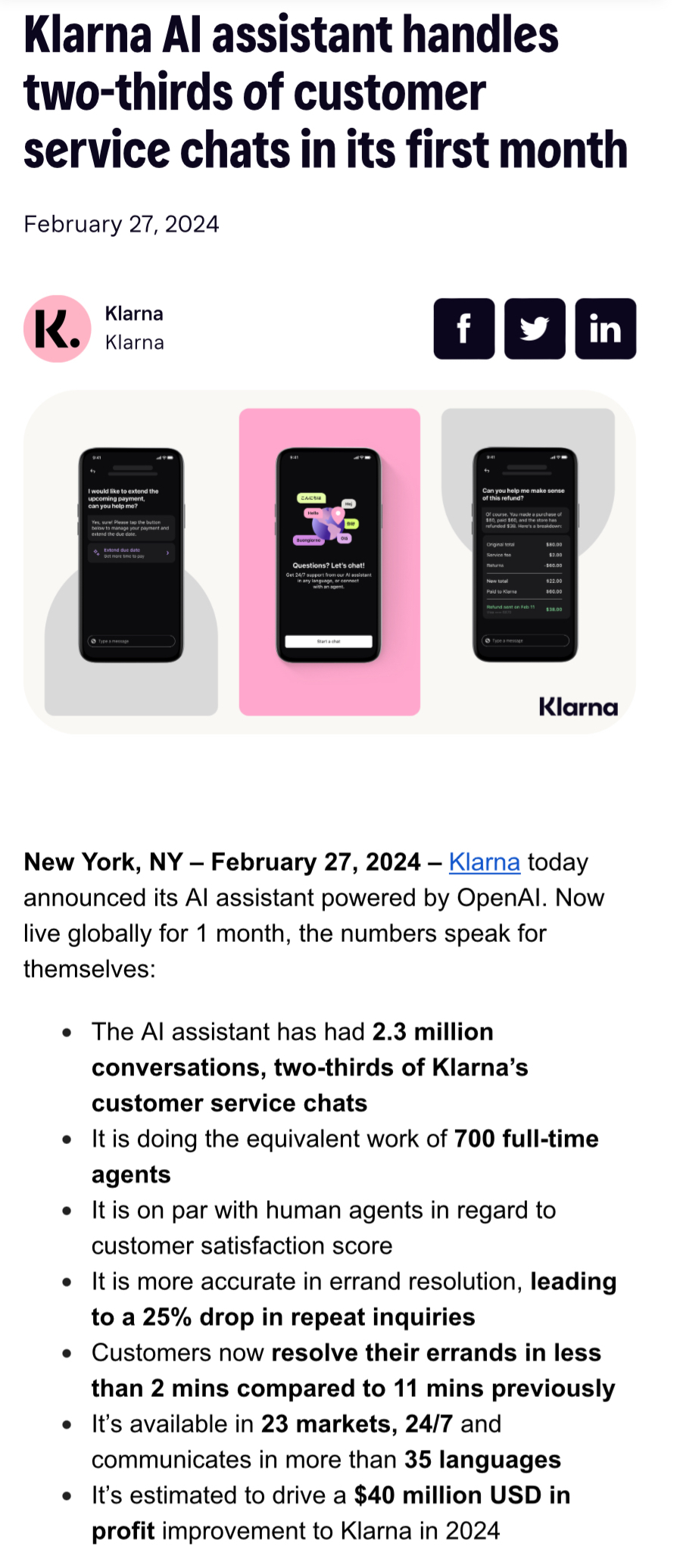

And this past February - potential 700 employee impact at a single company:

If you’re technical, the tech isn’t as interesting [yet]:

Overall, costs down, capabilities up (neat demos):

Hope everyone reading this keeps up their skillsets and fights for Universal Basic Income for the rest of humanity :)

Genuinely curious, what pieces do you suggest we can keep from LLM/GenAI/etc?

?

Have you never used any of these tools? They’re excellent at doing simple things very fast. But it’s like a word processor in the 90s. It’s just a tool, not the font of all knowledge.

I guess younger people won’t know this, but word processor programs were very impressive when they first came out. They replaced typewriters; a page printed from a printer looked much more professional than even the best typewriters. This lent an air of credibility to anything that was printed from a computer because it was new and expensive.

Think about that now. Do you automatically trust anything that’s just printed on a piece of paper? No, because that’s stupid. Anyone can just print whatever they want. LLMs are like that now. They can just say whatever they want. It’s up to you to make sure it’s true.

Font of all knowledge sounds like an excellent font. I assume it’s serifed?

snort

facepalm

The only good response! 😄

The main field where they are already actively in professional use is rough drafts in creative fields: quickly generate possible outlines for a text, a speech, an art piece. Visualize where something could be going, in order to decide which direction to pick.

Also, models that work differently from the GPTs are already in use in science, scanning through huge amounts of text in archives to help analyze or search for something in particular. Help find patterns in things for studies. Etc.

The “personal assistant AI” thing obviously isn’t quite working yet. I think it will take some time and models with a different technological structure (not GPT) to achieve progress in that regard.

Using it to generate things that you double check. Transforming generative work into review work is a boost in productivity. So writing of any kind, art, etc. Asking the LLM for facts without context is a gross mistake. Prompting it to generate a specific paragraph in a larger technical or regulatory document is useful.

If you can’t fix it, then get rid of it, and don’t bring it back until we reach a time when it’s good enough to not cause egregious problems (which is never, so basically don’t ever think about using your silly Gemini thing in your products ever again)

Corps hate looking bad. Especially to shareholders. The thing is, and perhaps it doesn’t matter, most of us actually respect the step back more than we do the silly business decisions for that quarterly .5% increase in a single dot on a graph. Of course, that respect doesn’t really stop many of us from using services. Hell, I don’t like Amazon but I’ll say this: I still end up there when I need something, even if I try to not end up there in the first place. Though I do try to go to the website of the store instead of using Amazon when I can.

I miss the olden days of Google

And lose 1% valuation? Are you out of your mind?

/s

Sarcasm aside, that 1% can feed a family in a developing country, and they have 100 times that.

The corporate greed is absolutely insane.

Since when has feeding us misinformation been a problem for capitalist parasites like Pichai?

Misinformation is literally the first line of defense for them.

But this is not misinformation, it is uncontrolled nonsense. It directly devalues their offering of being able to provide you with an accurate answer to something you look for. And if their overall offering becomes less valuable, so does their ability to steer you using their results.

So while the incorrect nature is not a problem in itself for them, (as you see from his answer)… the degradation of their ability to influence results is.

But this is not misinformation, it is uncontrolled nonsense.

The strategy is to get you to keep feeding Google new prompts in order to feed you more ads.

The AI response is just a gimmick. It gives Google something to tell their investors, when they get asked “What are you doing with AI right now? We hear that’s big.”

But the real money is getting unique user interactions for the purpose of serving up more ad content. In that model, bad answers are actually better than no answers, because they force the end user to keep refining the query and searching through the site backlog.

If you don’t know the answer is bad, which confident idiots spouting off on reddit and being upvoted into infinity has proven is common, then you won’t refine your search. You’ll just accept the bad answer and move on.

Your logic doesn’t follow. If someone doesn’t know the answer and is searching for it, they likely won’t be able to tell if the answer is correct. We literally already have that problem with misinformation. And what sounds more confident than an AI?

I don’t believe they will retain user interactions if the reason for the user interactions disappears. The value of Google is they provide accurate search results.

I can understand some users just want to be spoonfed an answer. But that’s not what most people expect from a search engine.

I want google to use actual AI to filter out all the nonsense sites that turn a Reddit post into an article of 500 words using an LLM without any actual value. That should be Google’s proposition.

The value of Google is they provide accurate search results.

They offer the most accurate results of search engines you’re familiar with. But in a shrinking field with degrading quality, that’s a low bar and sinking quick.

I want google to use actual AI to filter out all the nonsense sites

So did the last head of Google search, until the new CEO fired him.

But this is not misinformation, it is uncontrolled nonsense.

Fair enough… but drowning out any honest discourse with a flood of histrionic right-wing horseshit has always been the core strategy of the US propaganda model - I’d say that their AI is just doing the logical thing and taking the horseshit to a very granular level. I mean… “put glue on your pizza” is just not that far off “drink bleach to kill viruses on the inside.”

I know I’m describing a pattern that probably wasn’t intentional (I hope) - but the pattern does look like it could fit.

Oh don’t get me wrong I know exactly what you mean and I agree… it’s just that the LLMs are spewing actual nonsense and that breaks the whole principle of what a search engine should do… provide me accurate results.

Google isn’t bothered by incorrect results because search results are no longer their product. Constantly rising stock values are their product now. Hype is their path to those higher values.

That is actually a really good observation.

AI isn’t giving the right misinformation

Well, we can’t have that, can we?

“put glue in your tomato sauce.”

“Omg you are a capitalist parasite spreading misinformation intentionally!”

When the only tool you have is a hammer, everything looks like a nail.

“put glue in your tomato sauce.”

Doesn’t sound all that different from the stuff emanating from the right’s Great Orange Hope a while back that worked pretty well to keep his base appropriately frothing at the mouth - you are free to write it off as pure coincidence… but I won’t just yet.

Can you come up with any rational explanation as to why they would do that?

Which part?

The part where it’s not “pure coincidence” but instead a deliberate part of some conspiracy.

but instead a deliberate part of some conspiracy.

You mean… apart from the bog-standard propaganda regime the capitalist class has been enforcing on us long before either of us were born?

Yes, besides that. Specifically why this. Thanks.

LLMs trained on shitposting are too obvious for it to be quality misinformation.

For quality disinformation they should train them solely on MBA course-work and documents produced by people with MBAs.

Sure, the rate of false information would be even worse, but it would be formatted in slick ways meant to obfuscate meaning, which would avoid the kind of hilarity that has ensued when Google deployed an LLM trained on Reddit data and thus be much better for Google’s stock price.

Here’s a solution: don’t make AI provide the results. Let humans answer each other’s questions like in the good old days.

Whatever happened to Jeeves? He seemed like a good guy. He probably burned out.

You can find him walking Lycos around Geocities picking up its poop in little green plastic bags.

Is that the city over by Angelfire?

Saddened to see Angelfire being overrun by Neopets.

They stuck him in a glass case in a museum.

Locked: duplicate

What is locked?

They’re making a reference to stackoverflow.com, a website for IT/programming related questions. On that site, moderators will typically lock (prevent updates on) new posts if they appear to be duplicates of existing questions/posts.

Note, they’re more motivated to lock posts than actually help users. It’s a very VERY unfriendly space for anyone who isn’t an expert.

Has No Solution for Its AI Providing Wildly Incorrect Information

Don’t use it???

AI has no means to check the heaps of garbage data it has been fed against reality, so even if someone were to somehow code one to be capable of deep, complex epistemological analysis (at which point it would already be something far different from what the media currently calls AI), as long as there’s enough flat out wrong stuff in its data there’s a growing chance of it screwing it up.

The problem compounds as they post more and more content creating a feedback loop of terrible information.

Wow, in the 2000s and 2010s my impression of Google was that it was an amazing company where brilliant people worked to solve big problems to make the world a better place. In the last 10 years, all I was hoping for was that they would just stop making their products (search, YouTube) worse.

Now they’re just blindly riding the AI hype train, because “everyone else is doing AI”.

and our parents told us Wikipedia couldn’t be trusted…

Huh. That made me stop and realize how long I’ve been around. Wikipedia still feels like a new addition to society to me, even though I’ve been using it for around 20 years now.

And what you said, is something I’ve cautioned my daughter about, and first said that to her about ten years ago.

How a non-profit site that is constantly maintained and requires cited sources was vilified for being able to be defaced for 5 minu-

Oh wait, that was probably an astroturfing campaign by for-profit companies.

Conservapedia to the rescue.

Replace the CEO with an AI. They’re both good at lying and telling people what they want to hear, until they get caught

An AI has a much better chance of actually providing some sort of vision for the company. Unlike its current CEO.

“It’s broken in horrible, dangerous ways, and we’re gonna keep doing it. Fuck you.”

you need ai if you want your stock to go up

Do you need AI or do you just need to use the term AI? Because it seems like the latter is usually enough.

The best part of all of this is that now Pichai is going to really feel the heat of all of his layoffs and other anti-worker policies. Google was once a respected company and place where people wanted to work. Now they’re just some generic employer with no real lure to bring people in. It worked fine when all he had to do was increase the prices on all their current offerings and stuff more ads, but when it comes to actual product development, they are so hopelessly adrift that it’s pretty hilarious watching them flail.

You can really see that consulting background of his doing its work. It’s actually kinda poetic because now he’ll get a chance to see what actually happens to companies that do business with McKinsey.

Your comment explains exactly what happens when post-expiration companies like Google try to innovate:

Let’s be realistic here, google still pays out fat salaries. That would be more than enough incentive for me. I’d take the job and ride the wave until the inevitable lay offs.

This is why it takes a lot more than fat salaries to bring a project to life. Google’s culture of innovation has been thoroughly gutted, and if they try to throw money at the problem, they’ll just attract people who are exactly like what you described: money chasers with no real product dreams.

The people who built Google actually cared about their products. They were real, true technologists who were legitimately trying to actually build something. Over time, the company became infested with incentive chasers, as exhibited by how broken their promotion ladder was for ages, and yet nothing was done about it. And with the terrible years Google has had post-COVID, all the people who really wanted to build a real company are gone. They can throw all the money they want at the problem, but chances are slim that they’ll actually be able to attract, nurture and retain the real talent that’s needed to build something real like this.

If they backed a dump truck full of money up to my house I’d go work for them just like you. But I’d also be riding it out until the eventual layoff. What neither of us would be doing is putting in a decent amount of effort or building something cool.

Even if I wanted to work on something cool I know Google would likely release it, not maintain it, and then kill it in a few short years. So even if I was paid a ludicrous salary I wouldn’t do more than was needed, let alone build something that would drive shareholder value.

Step 1. Replace CEO with AI. Step 2. Ask New AI CEO, how to fix. Step 3. Blindly enact and reinforce steps

Rip up the Reddit contract and don’t use that data to train the model. It’s the definition of a garbage in garbage out problem.

Jesus. I didn’t even think of that. I could totally see that being a big part of why it is giving garbage answers.

Just imagine the average reddit, twitter, facebook, and instagram content. Then realize that half of that content is dumber than that. That’s half of what these AI models use to learn. The “smarter” half is probably filled with sarcasm, inside jokes, and other types of innuendo that the AI at this stage has no chance of understanding correctly.

Reminds me of the time Microsoft unleashed their AI Twitter account and it turned into a Nazi after a couple hours. Whatever straight out of business school idiot who thought scraping the comments of the armpit of the internet was a good idea should be banned from any management position. At least it is a step up from scraping 4chan, I guess.

The Microsoft Tay one I can understand though. Before it was released, they had also had Microsoft Xiaoice, which had been in use for 2 years prior without this issue. Tay was just the English version of that.

The glue on pizza one literally came from a Reddit post.

mithtaketh were made

Myth takes were made

these hallucinations are an “inherent feature” of AI large language models (LLM), which is what drives AI Overviews, and this feature “is still an unsolved problem”.

Then what made you think it’s a good idea to include that in your product now?!

It’s an extremely compelling product story full of market segmentation advertisers dream of!

“We do AI now”. Shareholders creaming themselves, stocks going to the moon. New yacht for PichAI.

because it’s not a majority issue.

It’s also a big driver of investment these days.

I’d rather get an AI answer that is kinda incorrect than having to search the top 10 pages with ads and cookies buttons to get the same kinda incorrect information.

that’s also Google’s fault though

Websites just figured out how Google’s page rank works and gamed the system. I mean, I feel a little bad for them, but they brought this upon themselves when they monetized their product.

Show me a better search engine…