- cross-posted to:

- linux

- [email protected]

Among the memory management changes expected to be merged for the upcoming Linux 6.11 cycle is more fine-tuned control over the swappiness setting, which determines how aggressively pages are swapped out of physical system memory and into the on-disk swap space.

With the new code from Meta, a swappiness argument is supported for memory.reclaim. This effectively allows finer-grained control over the swappiness behavior without overriding the global swappiness setting.
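For anyone wondering what that looks like in practice, here is a rough Python sketch. It assumes a cgroup v2 hierarchy and a request of the form “amount swappiness=value” written to a cgroup’s memory.reclaim file, which is how I read the kernel’s cgroup v2 documentation. The helper names are made up for illustration, and the 0-200 range check is my assumption, borrowed from the range of vm.swappiness.

```python
from pathlib import Path
from typing import Optional


def build_reclaim_request(amount: str, swappiness: Optional[int] = None) -> str:
    """Build the string to write into a cgroup's memory.reclaim file.

    `amount` is a byte count such as "1G" or "536870912".
    The 0-200 bound is an assumption mirroring the range of vm.swappiness.
    """
    if swappiness is None:
        return amount
    if not 0 <= swappiness <= 200:
        raise ValueError("swappiness must be between 0 and 200")
    return f"{amount} swappiness={swappiness}"


def request_reclaim(cgroup_dir: str, amount: str, swappiness: Optional[int] = None) -> None:
    """Ask the kernel to reclaim `amount` from one cgroup (normally needs root).

    On a real system the write can fail with an OSError if the kernel
    cannot reclaim as much as was requested.
    """
    request = build_reclaim_request(amount, swappiness)
    (Path(cgroup_dir) / "memory.reclaim").write_text(request)


if __name__ == "__main__":
    # Only prints the request string; nothing is written to the system.
    print(build_reclaim_request("1G", 0))
```

The point of the per-request value is that the global vm.swappiness stays untouched: only this one reclaim call is biased toward or away from swapping.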

Don’t get me wrong; I love this. This is fantastic. However, I have only one thing to say: mhwahahahahahhaa!

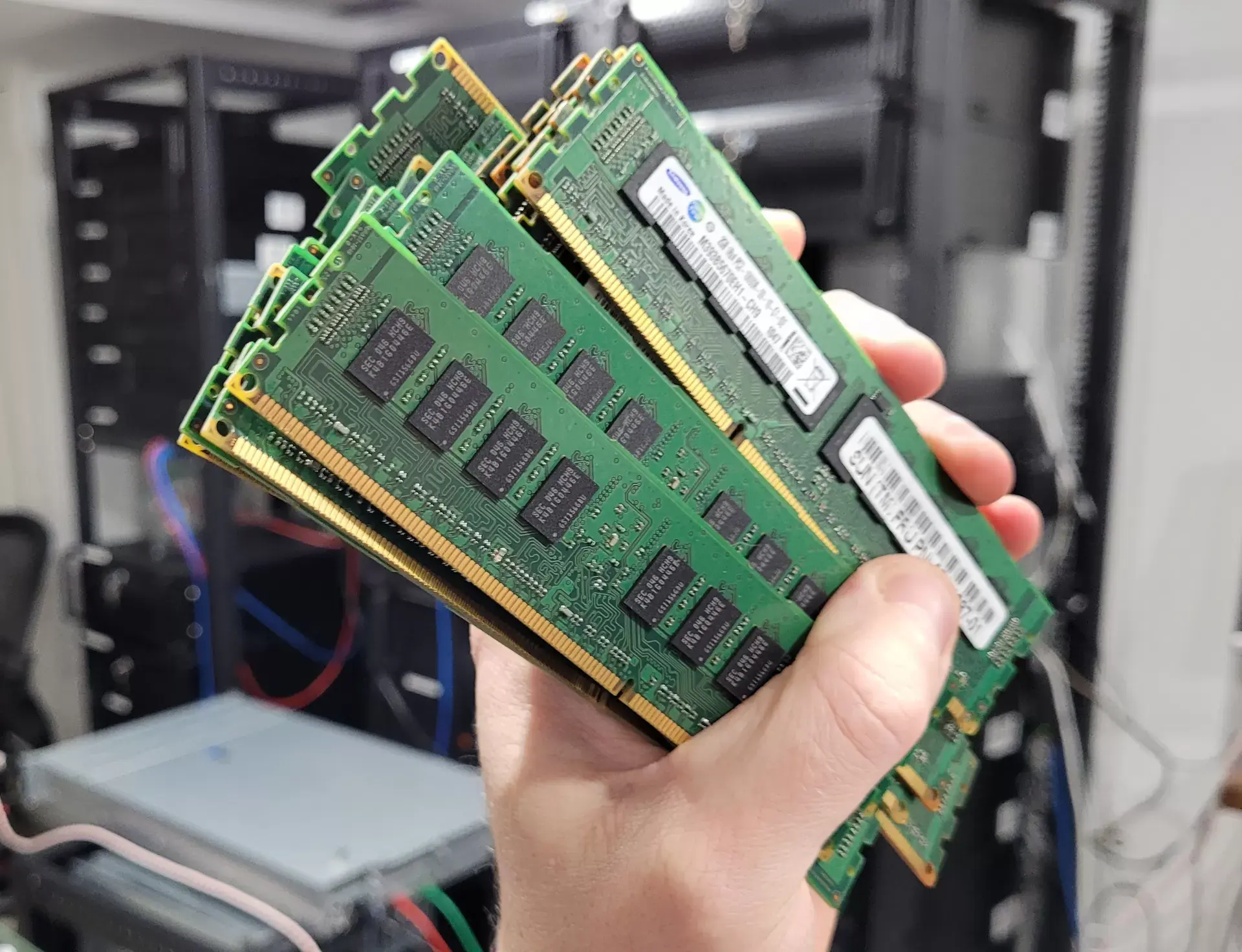

The last time I upgraded my desktop computer, I said “F it” and maxed out the RAM and put 64GB in it. It’s an AMD with integrated GPU that immediately takes over 2GB RAM – and I still have yet to do anything that has caused it to drop below 50% free memory. It’s exhilarating.

TBF, I spent years on a more memory-constrained laptop and my workflow became centered around minimalism: tiling WM, no DE, mostly terminal clients for everything but the web. When I got the new computer, with wild abandon I tried all the gluttons: KDE, Gnome… you know, all of them. The eye candy just wasn’t worth the PITA of the mousie-ness of them, and I eventually went back to Herbstluftwm and my shells. Now, when I do run greedy apps - usually some Electron crap - what bugs me is the constant CPU suck even at idle, so I find a shell alternative.

I guess it’s an irony that I live in a land of memory plenty and never need more than half what I have available. But I still get a little thrill when I do notice my memory use and I’ve got 70% free. Makes me want to code up a little program with an intentional memory leak, just for fun, y’know?

I’m not sure you understand what swap actually is, because even machines with 1TB of RAM have swap partitions. Just in case, read this post from a developer working on the swap module in Linux: https://chrisdown.name/2018/01/02/in-defence-of-swap.html

That article is an excellent resource, BTW, thank you. However, it nowhere says anything about swapping being used when you have more memory than you use.

1TB of memory is not a lot, for many applications, so just saying “this guy has 1TB memory and look what he thinks of swap” doesn’t mean much. If he’s processing LLMs or really any non-trivial DB (read: any business DB), then that memory is being used.

Having space in memory so that you never have to swap is always better than needing to swap, and nothing in Chris’ article says anything counter to that. What he mainly argues is that swap is better than OOM killers, having configurations that lead to memory contention in the first place, or seeking alternative strategies to turning off swap.

The fact is, I could turn on swap, but it would never get used because I’m not doing anything that requires heavy memory use. Even running KDE and several Java and Electron apps, I wouldn’t run out of physical memory. I’ll run into CPU constraints long before I run into memory contention issues.

Frankly, if my system allowed me to have, say, 40GB instead of 64, I’d have done that. I only want to not have to use swap - because never using swap is always preferable to needing it - and slightly more than 32GB is where I happen to land. But I can only have symmetric memory modules, and all memory comes in powers-of-2 sizes, and 64GB is affordable.

Again, Chris’ essay says only that swap is better than many alternatives people seek; not that swap is better than being able to not exhaust physical RAM.

As a final point, the other type of swapping is between types of physical memory - between L1 and L2, and between cache and main memory. That’s not what Chris is talking about, nor what the swappiness tuning in the OP article is about. Both of those concern swapping between memory and persistent storage.

Actually… as a former DBA on large databases, you typically want to minimize swapping on a dedicated database system. Most database engines do a much better job at keeping useful data in memory than the Linux kernel’s file caching, which is agnostic about what your files contain. There are some exceptions, like Elasticsearch, which relies almost entirely on the Linux filesystem cache for buffering I/O.

Anyway, database engines have query optimizers to determine the optimal path to resolve a query, but they rely on the buffers they consider to be “in memory” actually residing in physical memory, and not sitting in a swapfile somewhere.

So typically, on a large database system the vendor recommendation will be to set `vm.swappiness=0` to minimize memory pressure from filesystem caching, and to set the database buffers as high as the amount of memory you have in your system minus a small amount for the operating system.

So in other words, you paid for 32 GB that you have so far never used.

Yup! And it’s glorious.

It’s far better to have too much, than too little.

Linux can run fine in 2 GB with a desktop. I forgot the URL of the page, but obligatory “Linux ate my RAM”. (Your RAM is used as a cache.)
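If you want to see the “ate my RAM” effect on your own box, here is a small Python sketch that parses /proc/meminfo-style text and compares MemFree with MemAvailable. The function names and the sample numbers are made up for illustration; MemTotal, MemFree, and MemAvailable are real fields on current kernels.

```python
def parse_meminfo(text: str) -> dict:
    """Parse /proc/meminfo-style text into {field: integer value}."""
    fields = {}
    for line in text.splitlines():
        name, sep, rest = line.partition(":")
        parts = rest.split()
        if sep and parts:
            fields[name.strip()] = int(parts[0])
    return fields


def memory_summary(fields: dict) -> dict:
    """Return the percent of RAM that is strictly free vs. actually available."""
    total = fields["MemTotal"]
    return {
        "free_pct": round(100 * fields["MemFree"] / total, 1),
        "available_pct": round(100 * fields["MemAvailable"] / total, 1),
    }


# Made-up sample numbers, only to show the gap between the two figures.
SAMPLE = """\
MemTotal:       2000000 kB
MemFree:         200000 kB
MemAvailable:   1500000 kB
Cached:         1200000 kB
"""

if __name__ == "__main__":
    try:
        with open("/proc/meminfo") as f:
            text = f.read()
    except OSError:
        text = SAMPLE  # not on Linux: fall back to the sample
    print(memory_summary(parse_meminfo(text)))
```

With the sample numbers, only 10% looks “free” while 75% is available, because most of the difference is cache the kernel can drop when something actually needs the memory.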