Last November, The Bookseller reported that Dutch publisher Veen Bosch & Keuning, owned by publishing titan Simon & Schuster, was testing the use of artificial intelligence to help translate several of its books into English.

Even with humans, there are good translations and bad translations.

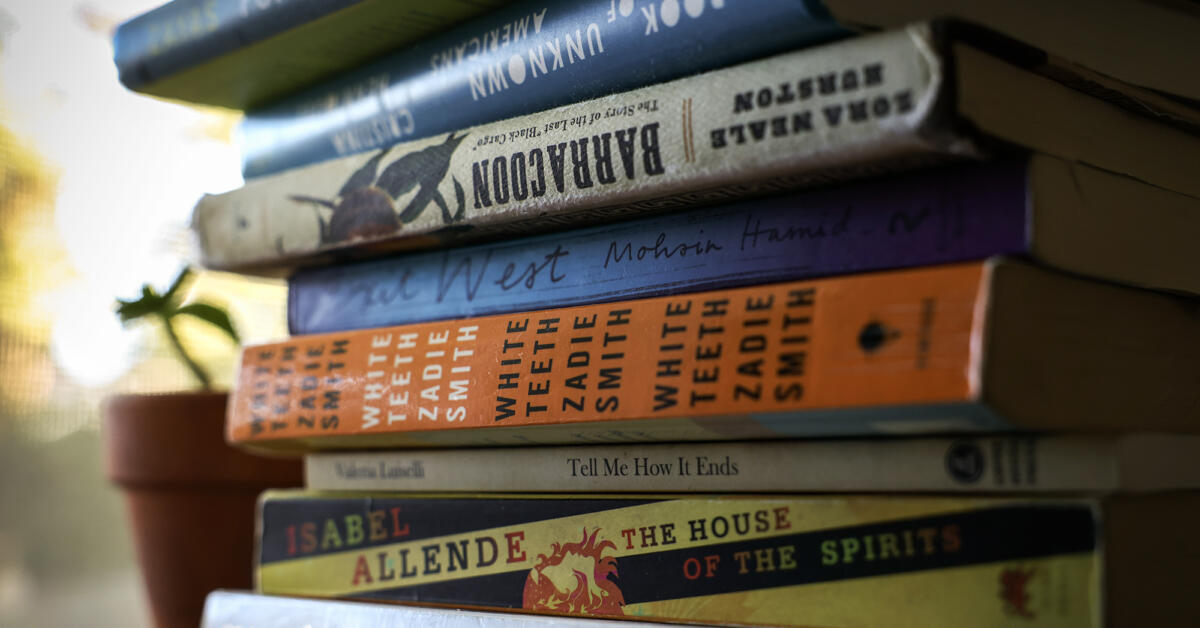

Some of my favorite authors did not natively write in English and the translators did a stellar job of capturing the nuance of the original.

I can’t imagine AI giving anything other than a straight denotative translation. It would be readable, but with no soul.

Here’s a passage from Carlos Ruiz Zafón’s “The Shadow of the Wind” in Spanish (“La sombra del viento”):

“En una ocasión oí comentar a un cliente habitual en la librería de mi padre que pocas cosas marcan tanto a un lector como el primer libro que realmente se abre camino hasta su corazón. Aquellas primeras imágenes, el eco de esas palabras que creemos haber dejado atrás, nos acompañan toda la vida y esculpen un palacio en nuestra memoria al que, tarde o temprano —no importa cuántos libros leamos, cuántos mundos descubramos, cuánto aprendamos u olvidemos—, vamos a regresar. Para mí, esas páginas embrujadas siempre serán las que encontré entre los pasillos del Cementerio de los Libros Olvidados.”

The English translation:

“Once, in my father’s bookshop, I heard a regular customer say that few things leave a deeper mark on a reader than the first book that finds its way into his heart. Those first images, the echo of words we think we have left behind, accompany us throughout our lives and sculpt a palace in our memory to which, sooner or later - no matter how many books we read, how many worlds we discover, or how much we learn or forget - we will return. For me those enchanted pages will always be the ones I found among the passageways of the Cemetery of Forgotten Books.”

Google Translate:

“I once heard a regular customer at my father’s bookstore comment that few things leave a lasting impression on a reader as much as the first book that truly makes its way into their heart. Those first images, the echo of those words we think we’ve left behind, stay with us for a lifetime and sculpt a palace in our memory to which, sooner or later—no matter how many books we read, how many worlds we discover, how much we learn or forget—we will return. For me, those haunted pages will always be the ones I found in the aisles of the Cemetery of Forgotten Books.”

Agreed. Even human translations are crap 99% of the time if they are done as pure surface-level translations, without someone who understands the original in depth essentially rewriting it in the target language.

A lot of technical documentation is translated automatically. I’m German, so most sites will give me the German translation. And more often than not, I struggle to understand even the basics of what they talk about. Until I switch to English, the original language.

I use local instances of Aya 32B (and sometimes DeepSeek, Qwen, LG Exaone, Japanese fine-tunes, or others depending on the language) to translate stuff, and it is quite different from Google Translate or any machine translation you find online. They get the “meaning” of text instead of transcribing it robotically like Google, and are actually pretty loose with interpretation.

It has soul… sometimes too much. That’s the problem: It’s great for personal use where it can occasionally be wrong or flowery, but not good enough for publishing and selling, as the reader isn’t necessarily cognisant of errors.

In other words, AI translation should be a tool the reader understands how to use, not something to save greedy publishers a buck.

EDIT: Also, if you train an LLM for some job/concept in pure Chinese, a surprising amount of that new ability will work in English, as if the LLM abstracts language internally. Hence they really (sorta) do a “meaning” translation rather than a strict definitional one… Even when they shouldn’t.

Another thing you can do is translate with one local LLM, then load another for a reflection/correction check. This is another point for “open” and local inference, as corporate AI goes for cheapness and generally tries to keep you away from competitors.
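The translate-then-review idea above could look roughly like this. It is only a sketch: `translate_with_review`, `translator`, and `reviewer` are made-up names, and the two callables stand in for whatever local models you load. Nothing here calls a real inference API, and the prompts are just guesses at something reasonable.

```python
from typing import Callable

# Hypothetical type: any function that takes a prompt and returns model output.
# In practice this would wrap a locally hosted LLM; that wiring is not shown.
Model = Callable[[str], str]


def translate_with_review(text: str, translator: Model, reviewer: Model,
                          source_lang: str = "Spanish",
                          target_lang: str = "English") -> dict:
    """Draft a translation with one model, then ask a second model to check it."""
    draft = translator(
        f"Translate the following {source_lang} text into {target_lang}:\n\n{text}"
    )
    # Second pass: the reviewer sees both the original and the draft.
    final = reviewer(
        f"Original ({source_lang}):\n{text}\n\n"
        f"Draft ({target_lang}):\n{draft}\n\n"
        "Fix any mistranslations and return only the corrected text."
    )
    return {"draft": draft, "final": final}
```

Keeping both the draft and the final text around makes it easy to eyeball what the second model changed, which matters if you can't fully trust either one.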

Or a tool for the translator to save time?

These language models don’t get the meaning of anything. They predict the next cluster of letters based on the clusters of letters that have come before. Sorry, but if it feels to you like they’ve captured the meaning of something, you’re being bamboozled.
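For anyone unsure what “predict the next cluster of letters” means mechanically, here is a toy version of the idea. It is a bare bigram counter over whole words, far simpler than an actual LLM (which uses a neural network over a long context), and the function names and the tiny corpus are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigram(tokens):
    """Count, for each token, which tokens follow it in the training text."""
    follows = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        follows[prev][nxt] += 1
    return follows


def predict_next(follows, token):
    """Return the most frequent follower of `token`, or None if unseen."""
    if token not in follows:
        return None
    return follows[token].most_common(1)[0][0]


corpus = "the first book the first images the echo".split()
model = train_bigram(corpus)
print(predict_next(model, "the"))  # "first" (seen twice after "the")
```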

It’s a metaphor.

They’re translating the input tokens to intent in the model’s middle layers, which is a bit more precise.

Actually, as to your edit, it sounds like you’re fine-tuning the model on your data, not training it from scratch. So the LLM has seen English and Chinese before, during the initial training. Also, they represent words as vectors, and what usually happens is that similar words’ vectors are close together. So substituting e.g. Dad for Papa looks almost the same to an LLM. Same across languages. But that’s not understanding, that’s behavior that way simpler models also have.
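To make the “vectors are close together” point concrete, here is a small sketch. The three vectors are invented by hand purely for illustration (real embeddings are learned and have hundreds or thousands of dimensions), and cosine similarity is just one common way to measure closeness.

```python
import math

# Made-up 3-dimensional "embeddings", chosen by hand for illustration only.
# Real models learn vectors with hundreds or thousands of dimensions.
toy_vectors = {
    "Dad":  [0.90, 0.80, 0.10],
    "Papa": [0.85, 0.82, 0.12],
    "book": [0.10, 0.20, 0.95],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


print(cosine_similarity(toy_vectors["Dad"], toy_vectors["Papa"]))  # close to 1
print(cosine_similarity(toy_vectors["Dad"], toy_vectors["book"]))  # much lower
```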

True! Models not trained on a specific language are generally bad at that language.

However, there are some exceptions, like a Japanese tune of Qwen 32B which dramatically enhances its Japanese, but the training has to be pretty extensive.

And even that aside… the effect is still there. The point is to illustrate that LLMs are sort of “language independent” internally, like you said.

I’ve spent years reading shitty machine translations of novels. It’s incomparable to something that a human translated.