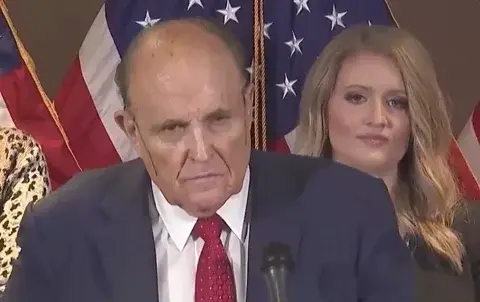

Image Transcription: Meme

A photo of an opened semi-trailer unloading a cargo van, with the cargo van’s rear door open revealing an even smaller blue smart car inside. The vehicles are captioned “macOS”, “Linux VM” and “Docker” respectively, in decreasing font size. Onlookers in the foreground gawk as a worker opens each vehicle door, revealing a scene like that of Russian dolls.

I’m a human volunteer content transcriber and you could be too!

Just need to put a JIT-compiled language’s logo inside the blue car and caption it “Containerise once, ship anywhere”.

Hoping somebody organizes a /c/TranscribersOfLemmy or /m/TranscribersOfKbin

Not just OS X: anyone using WSL on Windows is an offender too.

But as a WSL user, I find dockerised dev environments pretty incredible to have running on a Windows machine.

Does it require 64 GB of RAM to run all my projects? Yes. Was it worth it? Also yes.

I’m even worse: I’ve used WSL in a Windows VM on my Mac before, haha.

And use that to virtualize Android, to go even further beyond

My experience using Docker on Windows has been pretty awful: it would randomly become completely unresponsive, sometimes taking 100% CPU in the process, and I couldn’t stop it without restarting my computer. I tried reinstalling and various other things, but nothing helped. The only thing I found was a GitHub issue with hundreds of comments but no working workarounds or solutions.

When it does work it still manages to feel… fragile, although maybe that’s just because of my experience with it breaking.

You can cap the amount of CPU/memory Docker is allowed to use. In my experience that helps a lot with those issues, although it still takes a somewhat beefy machine to run Docker in WSL.
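With the WSL 2 backend, that cap is set in a `.wslconfig` file in your Windows user profile folder, under a `[wsl2]` section, and it limits the whole WSL 2 VM that Docker Desktop runs in. A minimal sketch that renders such a file with Python’s `configparser`; the helper name `render_wslconfig` is hypothetical, and the 8GB / 4-processor values are placeholders, not recommendations.

```python
import configparser
import io


def render_wslconfig(memory: str = "8GB", processors: int = 4) -> str:
    """Render a .wslconfig body that caps the WSL 2 VM's RAM and CPUs.

    Hypothetical helper; the default values are placeholders only.
    """
    config = configparser.ConfigParser()
    config["wsl2"] = {
        "memory": memory,               # upper bound on RAM the WSL 2 VM may use
        "processors": str(processors),  # number of virtual CPUs exposed to the VM
    }
    buf = io.StringIO()
    # Write plain key=value lines, matching the style used in the WSL docs
    config.write(buf, space_around_delimiters=False)
    return buf.getvalue()


if __name__ == "__main__":
    print(render_wslconfig())
```

The new limits only apply once the VM restarts, e.g. after `wsl --shutdown`.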

When it happens, Docker and WSL become completely unresponsive anyway. Stopping containers fails, and after closing Docker Desktop, `wsl.exe --shutdown` still doesn’t work; the only thing that has stopped the CPU usage for me is killing a bunch of processes through Task Manager. (IIRC I also tried setting a cap while testing the Hyper-V backend, to see whether it was a WSL-specific problem, but it didn’t help; I can’t fully remember though.) This is the issue that I think was closest to what I was seeing: https://github.com/docker/for-win/issues/12968

My workaround has been to start using GitHub Codespaces for most dev stuff; it’s worked quite nicely for the things I’m working on at the moment.

I found the same thing until I started strictly controlling the resources each container could consume, and also switched to a much beefier machine. Running a single project with a few images was fine, but with more than that the WSL connection would randomly crash or become unresponsive.

Databases in particular you need to watch: left unchecked they will absolutely hog RAM.
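Per-container limits can be set with the `--memory` and `--cpus` flags of `docker run`. A minimal sketch that builds such a command line; the helper `limited_run_args`, the `redis:7` image and the container name are illustrative assumptions, not anything from the thread.

```python
import shlex
from typing import List, Optional


def limited_run_args(image: str, memory: str = "1g", cpus: float = 1.0,
                     name: Optional[str] = None) -> List[str]:
    """Build a `docker run` argument list with memory and CPU caps applied."""
    args = [
        "docker", "run", "--rm", "--detach",
        f"--memory={memory}",  # hard RAM limit; processes over it can be OOM-killed
        f"--cpus={cpus}",      # CPU time share, e.g. 1.5 = one and a half cores
    ]
    if name is not None:
        args.append(f"--name={name}")
    args.append(image)
    return args


if __name__ == "__main__":
    cmd = limited_run_args("redis:7", memory="512m", cpus=1.5, name="dev-cache")
    # Print a copy-pasteable command; pass `cmd` to subprocess.run(cmd, check=True)
    # instead if you want to start the container from Python.
    print(shlex.join(cmd))
```

A hard memory cap is what keeps a greedy database from starving the rest of the WSL VM, at the cost of the database getting killed if it really needs more.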

I work in a Windows environment, and my VMs regularly get flagged by the infrastructure firewalls. So WSL is the easiest way for me to at least partially work in my environment of choice.

I’ve used WSL to run DeepSpeed before, because inexplicably Microsoft didn’t develop it for their own platform…


Does docker really spin up a VM to run containers?

Yes, under Windows and macOS at least.

Is that still true? I use Linux, but my coworker said Docker runs natively on the M1s now. Maybe he was making it up.

I suspect they meant it runs natively in the sense that it’s an aarch64 binary. It’s still running a VM under the hood, because Docker is really just a nice frontend to a bunch of Linux kernel features.

> docker is really just a nice frontend to a bunch of Linux kernel features.

What does it do, anyway? I know there’s LXC, and that Docker doesn’t use it but does its own thing, but not much else.

I can’t remember exactly what all the pieces are, but I believe it’s a combination of:

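- cgroups: resource control, i.e. limiting and accounting for the CPU, memory and I/O a group of processes can use. The processes themselves are ordinary processes on the host kernel, which is why you can see Docker processes in ps/top/etc. but can’t see a VM’s processes.

- namespaces (PID, mount, network, UTS, IPC, user): the actual isolation. Network namespaces are how each container gets its own isolated network stack, and the mount namespace plus a separate root filesystem is what lets you run cross-distro images, since the container loads its own shared objects rather than the host’s.

- some additional mount magic (I believe the image layers are stacked with a union filesystem such as OverlayFS).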
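On a Linux host you can look at both pieces through `/proc`: each namespace a process belongs to is a symlink under `/proc/<pid>/ns` whose target encodes the namespace type and an inode number, and its cgroup membership is listed in `/proc/<pid>/cgroup`. Two processes in the same container should report the same namespace inodes, while a containerised process and a host shell report different ones. A minimal, Linux-only sketch that only reads its own process (no root needed); the helper names are hypothetical.

```python
import os
import re
from typing import Dict, List, Tuple

# A namespace link target looks like "net:[4026531992]": type, then inode number.
_NS_LINK = re.compile(r"^(\w+):\[(\d+)\]$")


def parse_ns_link(target: str) -> Tuple[str, int]:
    """Split a namespace link target into (namespace type, inode number)."""
    match = _NS_LINK.match(target)
    if match is None:
        raise ValueError(f"unexpected namespace link: {target!r}")
    return match.group(1), int(match.group(2))


def namespaces(pid: str = "self") -> Dict[str, int]:
    """Map each entry in /proc/<pid>/ns to its namespace inode (Linux only)."""
    ns_dir = f"/proc/{pid}/ns"
    result: Dict[str, int] = {}
    for entry in sorted(os.listdir(ns_dir)):
        _, inode = parse_ns_link(os.readlink(os.path.join(ns_dir, entry)))
        result[entry] = inode
    return result


def parse_cgroup(text: str) -> List[Tuple[str, List[str], str]]:
    """Parse /proc/<pid>/cgroup lines of the form 'hierarchy-ID:controllers:path'."""
    entries = []
    for line in text.splitlines():
        if not line.strip():
            continue
        hierarchy, controllers, path = line.split(":", 2)
        # cgroup v2 uses a single "0::/path" line with an empty controller list
        entries.append((hierarchy, [c for c in controllers.split(",") if c], path))
    return entries


if __name__ == "__main__" and os.path.isdir("/proc/self/ns"):
    try:
        for name, inode in namespaces().items():
            print(f"{name:>18}  {inode}")
        with open("/proc/self/cgroup") as f:
            for hierarchy, controllers, path in parse_cgroup(f.read()):
                print(hierarchy, ",".join(controllers) or "(unified v2)", path)
    except (OSError, ValueError) as exc:
        print(f"could not inspect /proc: {exc}")
```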

My understanding is that all of the neat properties of Docker are actually part of the kernel; Docker (and Podman and other container runtimes) mostly just package them together to achieve the desired properties of “containers”.

It makes it very easy to define the environment and conditions in which a process runs, and to isolate it from the rest of the system. The environment includes all the other software installed in that isolated environment. Since you have complete isolation, you can install all the software that comes with what we think of as a Linux “distribution”, which means you can do something like run a Docker container that is “Ubuntu” or “Debian” on a “CentOS” host, or whatever distribution.

When you start a `Dockerfile` with the statement `FROM ubuntu:version_tag`, you are more or less saying “I want to run a process in an environment that includes all of the software that would ship with this specific version of Ubuntu”. A Linux distro == kernel + “userland” (maybe not the correct terminology). A Docker container is the “userland” or “distro” + whatever you want to run, but the kernel is the host’s.

I found this pretty helpful in explaining it: https://earthly.dev/blog/chroot/

I’ll also say that folks pretty nonchalantly deride Docker and other tools as if it’s just “easy” to set these things up with “just Linux” and Docker is something akin to syntactic sugar. I suspect many of these folks don’t make software for a living, or at least don’t work at significant scale. It might be easy to create an isolated process, but it’s absurd to say that Docker (or Podman, etc.) doesn’t add value. The reproducibility, layering, builders, orchestration, repos, etc. are all built on top of the features that allow isolation, and none of that existed as a packaged toolchain before Docker and other container build/deploy tools.
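To make “layering” a bit more concrete: an image is a stack of layers, and the container sees a merged view in which upper layers override lower ones, while a whiteout entry named `.wh.<name>` (the OCI image convention) hides `<name>` from the layers below. A toy sketch of that lookup, assuming each layer is a dict of path to contents; real layers are tarballs addressed by SHA-256 digests and merged by the storage driver (e.g. overlay2), and opaque whiteouts are not modelled here.

```python
from typing import Dict, List

WHITEOUT = ".wh."  # OCI whiteout prefix: ".wh.<name>" deletes <name> from lower layers


def flatten_layers(layers: List[Dict[str, str]]) -> Dict[str, str]:
    """Merge layers bottom-to-top into the filesystem a container would see.

    Toy model: each layer maps a path to file contents; layers[0] is the base.
    """
    merged: Dict[str, str] = {}
    for layer in layers:
        for path, content in layer.items():
            directory, _, name = path.rpartition("/")
            if name.startswith(WHITEOUT):
                hidden = name[len(WHITEOUT):]
                target = f"{directory}/{hidden}" if directory else hidden
                merged.pop(target, None)  # hide a whited-out file...
                prefix = target + "/"     # ...or everything under a whited-out directory
                for existing in [p for p in merged if p.startswith(prefix)]:
                    del merged[existing]
            else:
                merged[path] = content    # upper layers override lower ones
    return merged


if __name__ == "__main__":
    base = {"etc/os-release": "ubuntu", "usr/bin/curl": "curl-v1", "tmp/cache/a": "x"}
    app = {"app/main.py": "print('hi')", "usr/bin/curl": "curl-v2", "tmp/.wh.cache": ""}
    for path, content in sorted(flatten_layers([base, app]).items()):
        print(path, "->", content)
```

Because lower layers are never modified, many images can share the same base layer on disk, which is a large part of why containers are cheap to ship.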

Note: I’m not a Linux SME, but I am a software dev who uses Docker every day. I am likely oversimplifying some things here, but this is a better and more accurate oversimplification than “docker is like a VM”, which is a helpful heuristic when you first learn it but is ultimately wrong.

Whoa, thanks! Saved, I’ll read it later.

Maybe they just meant that it runs ARM binaries instead of running on Rosetta 2.

Docker requires the Linux kernel to work.

M1 is just worse ARM. Since most images are built for x86_64 rather than ARM, Docker had to emulate that architecture and therefore had performance issues. Now you’ve got ARM-specific images that don’t require that emulation layer, and so they work a lot better.

Since that didn’t solve the Linux kernel requirement, it’s still running a VM to provide it.
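The emulation question boils down to comparing the image’s platform with the host CPU. A minimal sketch that maps `platform.machine()` values to Docker-style platform strings and flags a mismatch; the helper names and the mapping table are assumptions covering only the common cases. The OS part is always `linux` because, as above, the containers run on a Linux kernel (inside the VM on macOS), with the same CPU architecture as the host.

```python
import platform

# Common platform.machine() / `uname -m` values -> Docker/OCI platform strings.
# Hypothetical table covering only the usual cases.
_ARCH_TO_PLATFORM = {
    "x86_64": "linux/amd64",
    "amd64": "linux/amd64",   # Windows reports "AMD64"
    "aarch64": "linux/arm64",
    "arm64": "linux/arm64",   # macOS on Apple Silicon reports "arm64"
}


def host_platform(machine: str = "") -> str:
    """Docker-style platform string for this (or the given) CPU architecture."""
    machine = (machine or platform.machine()).lower()
    return _ARCH_TO_PLATFORM.get(machine, f"linux/{machine}")


def needs_emulation(image_platform: str, machine: str = "") -> bool:
    """True if an image built for image_platform can't run natively on this CPU."""
    return image_platform != host_platform(machine)


if __name__ == "__main__":
    print("host:", host_platform())
    print("amd64 image needs emulation:", needs_emulation("linux/amd64"))
```

On an M1, forcing an x86 image with `docker run --platform linux/amd64 …` is exactly the mismatched case, which then goes through an emulation layer such as QEMU.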

Not making it up, but possibly confused. OCI containers are built on Linux-only technologies.

So that’s why it’s so memory hungry…

Try limiting it down to 2GB (there is an option in the Docker Desktop app). Before I discovered this option, the VM was normally eating 3-4GB of my memory.

On macOS it does.

Don’t forget the ARM64 to AMD64 conversion.

I was about to comment this. That van also contains QEMU if your host is on ARM64.

Bloody hell

Edit: Reminds me of the Pimp My Ride meme: “We made you an OS so you can VM your VM inside a VM!”


This was one of the reasons we switched to Docker in the first place. Our devs with M-series processors spent weeks untangling issues with libraries that weren’t compatible.

Just started using Docker and all of those issues went away

When I was in school I once used an iOS emulator running inside a Docker container of macOS running on a Linux machine. It worked surprisingly smoothly.

The difference between Docker and a VM is that Docker shares a kernel, but provides isolated processes and filesystems. macOS has a very distinct kernel from Linux (hence why Docker on macOS uses a Linux VM), I would be shocked if it could run on a Linux Docker host. Maybe you were running macOS in a VM?

Nope, macOS as a Docker container, it’s a thing: https://hub.docker.com/r/sickcodes/docker-osx

Also you don’t need a Linux VM to run docker containers on a Mac host btw

TIL, good to know!

The first layer in that Docker container is actually KVM. So you run the container to run KVM, which then emulates OS X.

Add a JVM just for the hell of it

A foldable bike in the trunk

Now add `dind`. We’re reaching levels of containerization that shouldn’t even be possible!

We need to go deeper and put a VM in linux

And of course, all of the above installed via Homebrew for extra recursion

Can someone please explain like I’m 5 what Docker and containers are? How do they work? Can I run anything on them? Is it like VirtualBox?

Think of a container like a self-contained box that can be configured to contain everything a program may need to run.

You can give the box to someone else, and they can use it on their computer without any issues.

So I could build a container with my program that hosts cat pictures and give it to you. As long as you have Docker installed, you can run a command like `docker run X` and it’ll run.

Well, I wasn’t the one asking, but I learned from that nonetheless. Thank you!

> Is it like VirtualBox?

VirtualBox: A virtual machine created with VirtualBox contains simulated hardware, an installed OS, and installed applications. If you want multiple VMs, you need to simulate all of that for each.

Docker containers virtualize the application, but use their host’s hardware and kernel without simulating it. This makes containers smaller and lighter.

VMs are good if you care about the hardware and the OS, for example to create different testing environments. Containers are good if you want to run many in parallel, for example to provide services on a server. Because they are lightweight, it’s also easy to share containers. You can choose from a wide range of preconfigured containers, and directly use them or customize them to your liking.

A container is a binary blob that contains everything your application needs to run. All files, dependencies, other applications etc.

Unlike a VM which abstracts the whole OS a container abstracts only your app.

It uses path manipulation and namespaces to isolate your application so it can’t access anything outside of itself.
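The “path manipulation” part is presumably chroot/pivot_root: the container’s `/` is really some directory on the host, and the process cannot name anything above it. A toy analogy of that remapping in plain path arithmetic; the helper `resolve_in_root` and the `/srv/rootfs` path are hypothetical, and real runtimes rely on the kernel rather than string handling (this sketch ignores symlinks, for instance).

```python
import posixpath


def resolve_in_root(root: str, path: str) -> str:
    """Map a path as seen inside a chroot-style root to the real host path.

    Toy analogy only: '..' cannot climb above the root, mirroring how a
    chrooted process sees `root` as '/'. Symlinks are not handled.
    """
    # Normalise against a virtual '/', so '/../../etc' collapses to '/etc'
    inside = posixpath.normpath(posixpath.join("/", path))
    return posixpath.join(root, inside.lstrip("/"))


if __name__ == "__main__":
    for p in ["/etc/passwd", "../../etc/shadow", "app/data/cats.jpg"]:
        print(f"{p!r:>22} -> {resolve_in_root('/srv/rootfs', p)}")
```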

So essentially you have one copy of an OS rather than running multiple OS’s.

It uses far fewer resources than a VM.

As everything is contained in the image, if it works on your machine it should work the same on any other. Obviously networking and things like that can break it.

But it’s Unix-like!

Uses a Linux VM for all the assignments anyway.

macOS is not Unix-like, it is literally Unix.

Reminds me of a Russian doll.

I would not be surprised to learn this photo was taken in Russia.

Time to check out Podman.

Podman on macOS is the same, is it not? Running containers inside a VM?

Yep. Just a nice GUI.

Podman does the same; its VM runs Fedora.

it’s like an automotive turducken

Now run a KinD cluster inside that, with containers running inside the worker containers.