I know this is a joke, but in case some people are actually curious: the manufacturer gives the capacity in terabytes (1 TB = 1 trillion bytes) and the operating system probably shows it in tebibytes (1 TiB = 1024^4 bytes ≈ 1.1 trillion bytes). So 2 terabytes are two trillion bytes, which is approximately 1.82 tebibytes.
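
For anyone who wants to check that arithmetic, here is a minimal Python sketch. The helper name `tb_to_tib` is made up for illustration:

```python
TB = 10**12   # decimal (SI) terabyte: 1,000,000,000,000 bytes
TiB = 2**40   # binary (IEC) tebibyte: 1,099,511,627,776 bytes

def tb_to_tib(terabytes: float) -> float:
    """Convert an advertised capacity in TB to the equivalent number of TiB."""
    return terabytes * TB / TiB

print(f"2 TB = {tb_to_tib(2):.2f} TiB")  # prints "2 TB = 1.82 TiB"
```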

They could easily use the proper units, but at some point someone decided to cheat, and now everyone does it, to the point that it's the standard.

Eh, that goes back to the days of dial-up (at least).

56k modem connections were 7k bytes per second or less.

The drive thing confused and angered many, because most OSs of the time (and even now) report binary kilobytes (KiB) as kB, which is technically incorrect: k is the SI prefix for 1000 (10^3), not the binary unit of 1024 (2^10).

Really they should have advertised both on the boxes.

I think Mac OS switched to reporting data in kibibytes (KiB) instead of kB as of Mac OS 10.6.

I remember folks at the time thinking the new update was so efficient it had grown their drive space by 10%!

While macOS did indeed primarily switch to KiB, MiB and GiB, it does at times still report storage as KB, MB, GB, etc.; however, it then uses the (correct) 1000 B = 1 KB.

And afaik, Linux also uses the same (correct) system, at least most of the time.

The only real outlier is Windows, which still uses the old system with KB = 1024B, some of the time. In certain menus, they do correctly use KiB

Please note that kilo is a small k. n, μ, m, k, M, G, T, …

And yes. A lot of people here get at least one of those wrong.

While you are correct, I know of no operating system that doesn't capitalize the K. At the very least, not consistently.

I just checked and my Android phone does indeed make the same error. Amazing.

Network/signal engineers have always operated, and still operate, in bits, not bytes. They've been doing that since back when what we now understand as a byte was still called an octet, and when you send a byte over any network transport, it's probably not going to send eight bits but that plus parity, stop bits, and whatnot; ask a network engineer.

What speed test are you running that gives its results in bytes?

His speed tests consist of downloading files lol

Granted, that’s probably a better way of getting the actual attainable speed

Nonsense. It's a simple continuation of something that has always been around. They would have needed to actively and purposefully change it. The first company that tried to sell “1 Megabyte/s” instead of “8 Megabits/s” is shooting themselves in the foot because the number is smaller. If it was going to change, you would need everyone to agree at once to correct the numbers the same way.

Modems were 300 baud, then 1200 baud, then 56k baud. ISDN took things to 128k baud, and a T1 was 1.544M baud. Except that sometime around the time things went into tens of k, we started saying “bits” instead of “baud”. In any case, it simply continued, with the first DSL and cable modems being around 1 to 10 Mbit/s. You had to be able to compare it fairly to what came before, and the easiest way to do that is to keep doing what they've been doing.

Ethernet continues to be sold in the same system of measurement, for the same reasons.

The first company that tried to sell “1 Megabyte/s” instead of “8 Megabits/s” is shooting themselves in the foot because the number is smaller.

You’re telling me that what I say is nonsense and you just paraphrase what I said.

Don’t go thinking engineering has anything to do with what marketing put up on their storefront.

It has plenty to do with engineering, because it was engineering that first decided to measure things this way. Marketing merely continued it.

Thing is, there’s no rational reason to arbitrarily use groups of 8 bits for transmission over the wire. It’s not just ISPs who use bits, the whole networking industry does it that way.

To expand on this a bit more, bits are used for data transmission rates because various types of encoding, padding, and parity mean that data on the wire isn't always 8 bits per byte. Dial-up modems very frequently used 10 bits per byte (8-N-1 signalling: a start bit, 8 data bits, and a stop bit), and for something more modern, PCIe 1.x and 2.x use 8b/10b encoding, which is 10 bits on the line for each 8 bits of actual payload.
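
A rough Python sketch of what those overheads do to usable throughput. The helper `payload_bytes_per_second` is hypothetical, and it assumes the framing bits are actually carried at the quoted line rate (true for a plain asynchronous serial link; a modem doing its own error correction may strip start/stop bits on the phone line):

```python
def payload_bytes_per_second(line_rate_bps: float, line_bits_per_byte: int) -> float:
    """Payload bytes per second when every payload byte costs
    `line_bits_per_byte` bits on the wire."""
    return line_rate_bps / line_bits_per_byte

# 56 kbit/s if every byte were exactly 8 bits on the wire
naive = payload_bytes_per_second(56_000, 8)    # 7000.0 B/s
# Async 8-N-1 framing: 1 start bit + 8 data bits + 1 stop bit = 10 bits per byte
framed = payload_bytes_per_second(56_000, 10)  # 5600.0 B/s
# 8b/10b line code (e.g. one PCIe 1.x lane at 2.5 GT/s): 10 line bits per 8 payload bits
pcie1 = payload_bytes_per_second(2.5e9, 10)    # 250,000,000 B/s = 250 MB/s

print(naive, framed, pcie1)
```

The same line rate gives 20% less payload once each byte costs 10 bits instead of 8, which is one reason link speeds are quoted in bits rather than bytes.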

Before mebi-, gibi-, tebibytes, etc. were a thing, it was the hard drive manufacturers who were cheating a little. Everyone saw a kilobyte as 1024 bytes, but the storage manufacturers used the SI definition of kilo = 1000 to their advantage.

By now, however, kibibytes being 1024 bytes and kilobytes being 1000 bytes is pretty much a standard that most agree on. One notable exception is, of course, Windows…

Indeed, Windows could easily stop mislabeling TiB as TB, but it seems it’s too hard for them.

The IEC changing the definition of 1 KB from 1024 bytes to 1000 bytes was a terrible idea that's given us this whole mess. Sure, it's nice and consistent with the scientific prefixes now… except it's far from consistent in actual usage. So many things still treat it as a binary prefix, following the JEDEC standard. Just as KiB is always 1024 bytes, I really think they should've introduced another new, unambiguous unit, e.g. KoB, that's always 1000 bytes, and deprecated the poorly defined KB altogether.

M stands for Mega, a SI prefix that existed longer than the computer data that is being labeled. MB being 1000000 bytes was always the correct definition, it’s just that someone decided that they could somehow change it.

Consistency with the proper scientific prefixes is nice to have, but consistency within the computing industry itself is really important, and now we have neither. In this industry, binary calculations were central, and powers of 2 were much more useful. They really should've picked a different prefix to begin with, yes. However, as a retroactive correction by the IEC, this has failed. It's a mess that's far from actually standardised now.

B and b have never been SI units; the closest is Bq. So if people hadn't kept insisting that it's confusing, no one would've been confused.

That does not mean you can misuse SI prefixes if the unit itself is not part of the system.

I think there were some court cases in the US, which the HDD manufacturers won, that allow them to keep using those stupid crap units to continue misleading people. It's been a minor annoyance for decades, but since all the competitors do it and no government is willing to do anything, everyone is stuck accepting it as is. I should start writing down the capacity in multiple units in my reviews whenever I buy storage devices going forward.

And as far as my wife is concerned, I’m definitely 6 ft tall. Height ain’t what it used to be.

So what you’re saying is that … we can make up whatever number and standard we want? … In that case, would you like to buy my 2 Tyranosaurusbytes Hard Drive?

Nah, the prefixes kilo-, mega-, giga- etc. are defined precisely how hard drive manufacturers use them, in the SI standard: https://en.wikipedia.org/wiki/International_System_of_Units#Prefixes

The 1024-based magnitudes, which the computing industry introduced, were non-standard. These days, the prefixes are officially called kibi-, mebi-, gibi- etc.: https://en.wikipedia.org/wiki/Binary_prefix
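
To see the two families side by side, a small Python sketch listing each SI byte unit next to its IEC counterpart and how much bigger the binary one is (the dictionary names are just for illustration):

```python
# SI (decimal) byte units vs IEC (binary) byte units
DECIMAL = {"kB": 10**3, "MB": 10**6, "GB": 10**9, "TB": 10**12}
BINARY = {"KiB": 2**10, "MiB": 2**20, "GiB": 2**30, "TiB": 2**40}

for (dec_name, dec_size), (bin_name, bin_size) in zip(DECIMAL.items(), BINARY.items()):
    # Percentage by which the binary unit exceeds its decimal namesake
    gap = (bin_size / dec_size - 1) * 100
    print(f"1 {bin_name} = {bin_size:,} B vs 1 {dec_name} = {dec_size:,} B (+{gap:.1f}%)")
```

The gap grows with every prefix step: about 2.4% at kilo, but about 10% at tera.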

You’re missing a huge part of the reason why the term ‘tebibytes’ even exists.

Back in the 90s, when USB sticks were just coming out, a megabyte was still 1024 kilobytes. Companies saw the market get saturated with drives but they were still expensive and we hadn’t fully figured out how to miniaturize them.

So some CEO got the bright idea of changing the definition of a “megabyte” to mean 1000 kilobytes. That way they could say that their drive had more megabytes than their competitors. “It's just 24 kilobytes. Who's going to notice?”

Nerds.

They stormed various boards to complain, but because the average user didn't care, sales went through the roof and soon the entire storage industry changed. Shortly after that, they started cutting costs to actually make smaller-sized drives but calling them by their original size, i.e. 64MB* (*64 MB = 64,000,000 bytes).

The people who actually cared had to invent the term “mebibyte” purely because of some CEO wanting to make money. And today we have a standard that only serves to confuse people who actually care that their 2 TB is actually about 1863 GiB, or 1.82 TiB.

Dude, a “1.44MB” floppy disk was 1.38MiB once formatted (1,474,560 B raw). It’s been going on for eternity.

It’s inconsistent across time though. 700MB on a CD-R was MiB, but a 4.7GB DVD was not.
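
Rough numbers for that, as a Python sketch. It assumes an 80-minute CD-R read as Mode 1 data (75 sectors per second, 2048 user bytes per sector) and takes the DVD's “4.7 GB” at face value as 4.7 × 10^9 bytes:

```python
MiB = 2**20
GiB = 2**30

# 80-minute CD-R: 80 min * 60 s * 75 sectors/s * 2048 bytes per data sector
cd_bytes = 80 * 60 * 75 * 2048
print(f"CD-R: {cd_bytes:,} B = {cd_bytes / 10**6:.1f} MB = {cd_bytes / MiB:.1f} MiB")

# "4.7 GB" DVD, read as decimal gigabytes
dvd_bytes = 4.7e9
print(f"DVD:  {dvd_bytes:,.0f} B = {dvd_bytes / GiB:.2f} GiB")
```

The CD's label lines up with binary megabytes (about 703 MiB), while the DVD's lines up with decimal gigabytes (only about 4.38 GiB).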

RAM has always, without exception, been reported in 1024 B per KB. Conversely, network bandwidth has been 1000 bits per kilobit for every application since the dialup days (and prior).

One thing to point out: the floppy thing isn't due to formatting; the units themselves were screwed up. It's not 1.44 million bytes or 1.44 MiB regardless of formatting; it's 1440 KiB! (Which produces the raw size you gave.) That's about 1.406 MiB unformatted.
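
The floppy arithmetic as a Python sketch, assuming the standard 3.5-inch high-density geometry of 80 tracks, 2 sides, 18 sectors per track and 512 bytes per sector:

```python
raw_bytes = 1440 * 1024               # "1.44 MB" = 1440 KiB
# Same figure from the disk geometry: tracks * sides * sectors/track * bytes/sector
assert raw_bytes == 80 * 2 * 18 * 512

print(f"{raw_bytes:,} B")                             # 1,474,560 B
print(f"{raw_bytes / 10**6:.3f} MB (decimal)")        # 1.475 MB
print(f"{raw_bytes / 2**20:.3f} MiB (binary)")        # 1.406 MiB
print(f"{raw_bytes / (1000 * 1024):.2f} mixed 'MB'")  # 1.44: 1000 x 1024 bytes
```

The “1.44” only appears when a “megabyte” is taken to be 1000 × 1024 bytes, a mix of the decimal and binary definitions.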

The reason is that they were doubled from 720 KiB disks*, and the largest standard 5¼-inch disks (“1.2 MB”) were doubled from 600 KiB*. I guess it seemed easier, or less confusing to users, than having double 600k become 1.17M.

(* Those smaller sizes were themselves already doubled from earlier sizes. The “1.44 MB” ones are “double-sided, high density”.)

That’s just wrong. “Kilo” is ancient Greek for “thousand”. It always meant 1000. Because bytes are grouped on powers of two and because of the pure coincidence that 10^3 (1000) is almost the same size as 2^10 (1024) people colloquially said kilobyte when they meant 1024 bytes, but that was always wrong.

Update: To make it even clearer, try to think what would have happened historically if most computers used ternary instead of binary. Nobody would even think about reusing kilo for 3^6 (= 729) or 3^7 (= 2187), because they are not even close.

Reusing well-established prefixes like kilo was always a stupid idea.

Or, you know, for consistency? In physics, kilo, mega, etc. are always 10^(3n), but then for some bizarre reason, units of information use the same prefixes, but as 2^(10n).

Depends on the OS. For some reason MacOS uses Base 10.

The result of marketing pushing base-10 numbers onto an architecture that is base 2. Fundamentally, it's caused by the difference between 10³ (1000) and 2¹⁰ (1024).

The size your OS reports for what you buy is Amount = initial size × (1000/1024)^n, where n is the number of factor-of-1000 steps in the prefix, i.e. the prefix is 10^(3n) (kilo: n = 1, mega: n = 2, giga: n = 3, tera: n = 4).
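
That formula as a Python sketch (the `reported_size` helper and `STEPS` table are illustrative names); it prints what fraction of the advertised number survives at each prefix:

```python
# Reported size (in binary units) of a drive advertised in decimal units:
#   reported = advertised * (1000 / 1024) ** n
# where n counts steps of 1000: kilo = 1, mega = 2, giga = 3, tera = 4
STEPS = {"k": 1, "M": 2, "G": 3, "T": 4}

def reported_size(advertised: float, prefix: str) -> float:
    n = STEPS[prefix]
    return advertised * (1000 / 1024) ** n

for prefix in STEPS:
    # Fraction of the advertised number the OS will display at this prefix
    print(f"{prefix}: {reported_size(1, prefix):.4f}")
```

So a 2 TB drive comes out at `reported_size(2, "T")` ≈ 1.82, matching the meme.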

Fact: this is intentional manipulation.

OP is right with an applicable staturing the pic.

Solution: Sue the fuck out of all of them. Especially Samsung. Fuck Samsung everything.

It's correct: the final size you see in the OS is not in kilo/giga/terabytes but in kibi/gibi/tebibytes. The problem is less the drive and more how the OS displays the value. The OS CHOOSES to display it in base 2, but drives are sold in base 10, and what is given is actually correct. Windows, being the most used one, is the most guilty of starting the trend of naming what should be kibi/gibi/tebibytes as kilo/giga/terabytes. Essentially, 2 terabytes ≈ 1.82 tebibytes; many OSes display the latter but use the former's name.

Base-2 displays labelled as kilobytes date back to the 1960s, way before the byte was standardised to be eight bits (and according to network engineers it still isn't; you still see new RFCs using “octet”).

Granted, hard disks seem to have been base-10 from the very beginning, with the IBM 350 storing five million 6-bit bytes. Windows' history isn't in that kind of hardware, though, but in CP/M and DOS, and `dir` displayed in base 2 from the beginning (page 10): “Displays the filename and size in kilobytes (1024 bytes).”

Then, speaking of operating systems with actual hard drive support: in Unix, `ls -lh` seems to be universally base-2 (GNU has `--si` to switch, which I think no one ever uses). `-h` (and `-k`) are non-standard; you won't find them in POSIX (the default is to print the raw number of bytes, no units).

Technically correct with numbers is the only way to ever be correct, let alone right.
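
To make the `-h` vs `--si` difference concrete, a simplified Python sketch of a human-readable size formatter with a switchable base. The function `human_readable` is hypothetical; it imitates the idea only and does not reproduce GNU `ls`'s exact rounding or unit letters (lowercase k for the decimal case is a choice made here):

```python
def human_readable(n_bytes: int, base: int = 1024) -> str:
    """Format a byte count using base-1024 steps (like ls -h) or
    base-1000 steps (like --si). Simplified: one decimal, plain rounding."""
    kilo = "k" if base == 1000 else "K"
    units = ["", kilo, "M", "G", "T", "P"]
    value = float(n_bytes)
    i = 0
    # Keep dividing while the value still spans a full step of the chosen base
    while value >= base and i < len(units) - 1:
        value /= base
        i += 1
    if i == 0:
        return str(n_bytes)  # small sizes are shown as plain bytes
    return f"{value:.1f}{units[i]}"

drive = 2 * 10**12  # a "2 TB" drive's worth of bytes
print(human_readable(drive, 1024))  # 1.8T
print(human_readable(drive, 1000))  # 2.0T
```

Same bytes, two labels: the only difference is the divisor used at each step.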

Also minus metadata overhead.

Yup, damn formatting also gonna take a chunk

This is intentional; they could offer proper TiB storage, but Apple started doing this decades ago just to shave pennies, and now it's caught on.

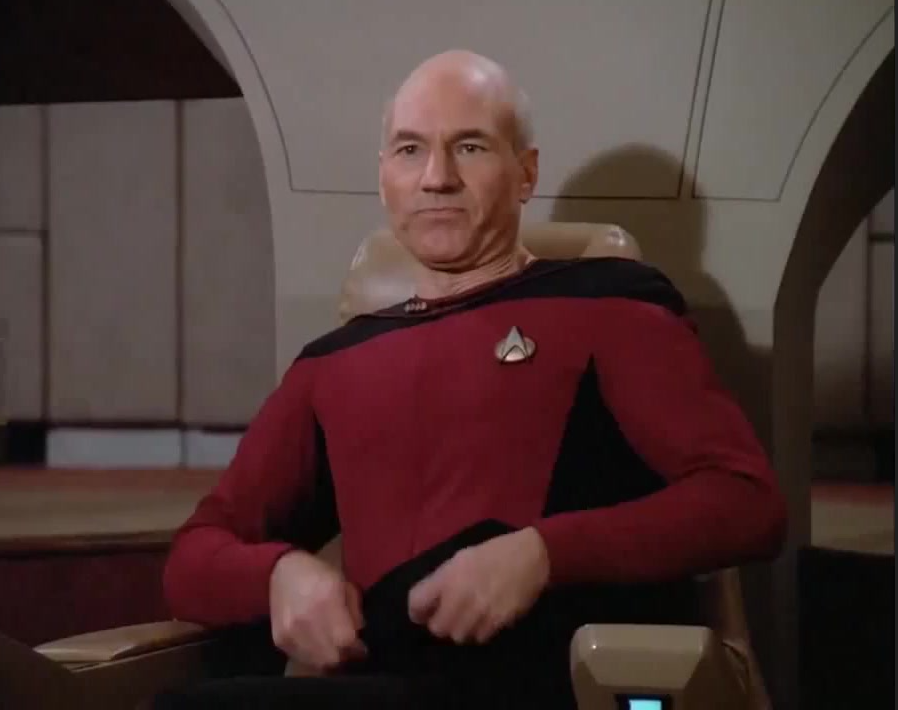

Image Transcription: Meme

I bought a new 2Tb SSD but it shows up as 1.8TB SSD

[An image of a classical art piece. The man in the image is wearing a hat and has a peculiar facial expression. One of his arms is on a table, palm facing up. The other arm is in the air, with the pointer finger touching the palm. Near that hand is the caption “Where’s my 0.2TB”]

This was surprisingly hard to describe

But you did awesome!

I was impressed, until I realised a person typed it. I’m still impressed, just not as much as I was before lol.

I’d be thrilled if the SSD I bought ended up being almost 8x larger than advertised! Does beg the question of why you’re buying 250GB SSDs in 2023 but I’m not here to judge.
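
The joke works out numerically; a quick Python check, reading “2Tb” literally as terabits and assuming the “1.8TB” the OS shows is really 1.8 TiB:

```python
bits_per_byte = 8
advertised_bits = 2 * 10**12                          # "2Tb" read as 2 terabits
advertised_bytes = advertised_bits // bits_per_byte   # 250,000,000,000 B = 250 GB
shown_bytes = 1.8 * 2**40                             # "1.8TB" assumed to really be 1.8 TiB
ratio = shown_bytes / advertised_bytes

print(f"{advertised_bytes / 10**9:.0f} GB advertised, {ratio:.1f}x that shown")
```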

Funnily enough, the meme still works. They wanted 0.2 TB, goddammit, not some hugely oversized 1.8 TB hard drive.

Even 32 gigs would be good enough for a cheap laptop running a non-shit OS, unless you want to store bigger data or play games.

A 250 GB SSD could be used as a boot drive while you use an HDD to store your files.

Same here, 256 GB SSD owner. I'd prefer to get something a little bit bigger, but I also have a secondary HDD to store all my files on, which makes everything all good.

Instead of that, we should protest against SI: k should be K.

- B

- kB <- Imposter

- MB

- GB

- TB

- PB

(Since this is SI it’s powers of 10^3 not 2^10 when going one level up)

Big K is kelvin (temperature), so the multiplier had to be little k.

How unfortunate. However, KiB.

Wait until you find out about Calories vs calories

and μ should be u maybe… why throw in a random non-Latin character? Is it for the sake of anti-anglocentrism?

Can't tell if this is sarcasm (I've been on the internet too much today, sorry), but just in case: the Greek μ (mu) stands for “micro”, since ‘m’ is already used for “milli”.

yeah, i get that, but many people just use u because it's more accessible (ASCII and QWERTY compatible), similar to writing μBittorrent as uBittorrent. imo i don't think it's too big of a deal if it doesn't match the word micro, since μ is already a bit of a stretch. this is just my opinion, i'm not advocating for an SI reform or anything :)

μ is not a stretch at all; it's literally the first character of the word micro (mu, iota, kappa, rho, omicron). Similar to how other scientific words are derived from their original language.

It doesn’t matter, most people will understand you if you write um instead of μm, because it isn’t ambiguous.

That’s for the magic numbers that hold the 1.8 TB together. They live in that 0.2 TB and if you kill them then the 1.8 TB fly apart at the speed of light.

That metaphor is . . . not entirely wrong.

Actually it is, because firmware is tiny.

Taxes

government filling up a secret section of every factory-fresh hard drive with CSM and terrorist material in case they ever want to lock you away

yes officer thats what happened

Yah, this bugs me so much!! On top of that, how dare the OS take up so much space?

I just delete that so there’s more room for my stuff.

I need more space for memes. This bulky System32 folder is in the way.

I don’t know why I need 1 system, let alone 32 of them.

I don’t even remember that N64 game! What’s it doing on my PC?

Yeah you can also just download more storage. I do it all the time.

Debian Linux with no desktop is like 3 GB, if you're interested.

I for one am getting tired of the Linux circle jerk.

Does Linux have its merits? Heck yeah.

Do I have time, as a professional working all day, to run and mess with Linux? Heck no.

It's not that I'm a Luddite; I'm a software developer. And the last thing I want is to configure Linux. Sure, it's easier now, but I'm a nerd, and the second I install it I'm gonna want to do it right, and, well, there go my weekends.

Then who is going to tend to my Factorio factory?

Imagine buying 14TB and finding out that it is 12TB instead.

I've always known the advertised space is larger than the actual space, but it was never quite as much of a shock as when I recently bought an 18TB external drive with ~16 TB usable.

18 “TB” with ~16 TiB usable 😞 they scammed us so hard they renamed TB and GB

It was like that back in the age of floppy disks. Our computers use TiB; marketers use TB to sell storage.

The biggest problem is that Windows still labels TiB and friends with SI prefixes (so 1 TiB shows as 1 TB). MS has done this since DOS (but at least back then MiB didn't exist. They could've used base 10, though).

TiB (and the related units) didn't get named until recently, and I think only Linux uses those abbreviations, and not universally. Windows still says kB, MB, etc., while using the binary equivalents.

“Recently”? They've been the standard for almost 25 years now.

The issue is in your software that displays the capacity (most likely windows).

You bought a 2 TB SSD. You got a 2 TB SSD. This is equivalent to 1.8 TiB (think of it like yards and meters). Windows shows you the capacity in TiB, but writes TB next to it.

Say you buy a 2.19-yard stick. You get a 2.19-yard stick, which is equivalent to 2 meters. Windows will tell you it's 2 yards long. Why? I don't know.

Is this like one is 1000 and one is 1024 (= 2^10)?

Base 10 vs base 2

yes

in small print 2TiB*

From wikipedia:

More than one system exists to define unit multiples based on the byte. Some systems are based on powers of 10, following the International System of Units (SI), which defines for example the prefix kilo as 1000 (10^3); other systems are based on powers of 2.

Your system calculates 1 terabyte as 1 tebibyte, which is 2^40 bytes = 1,099,511,627,776 bytes, and the hardware manufacturers calculate 1 terabyte as 1 terabyte, which is 10^12 = 1,000,000,000,000 bytes. That is where the discrepancy is.

200 GB, that's nearly CoD Warzone

Which is nothing compared to what ARC survival wants. Games are ridiculous these days. I’m not giving up 1/10th of my storage for a fucking game.

It’s the same way with lumber lol

A 2x4 is in fact not 2"x4"

Wait, what?

Yeah a typical 2x4 is actually 1.5"x3.5"

A 2x4 is (supposedly; I bet they've optimized this down too) the size of the raw lumber before it was finished, which removes some material from each side.

They’ve been doing this for literally centuries.

I think it started out with a rare case of honest advertising. So, for example, 720K floppies were advertised as 720K. But then some ~~lying bastard~~ clever marketer decided to start advertising their 720K floppies as 1MB floppies, sometimes but not always marked “unformatted capacity”.

And of course this had the desired effect of making people buy their disks instead of the honestly marketed ones, because people didn't read the small print and thought they were getting more storage, which was important before CDs were a thing and software distributions were starting to need multiple disks. So everyone had to start doing it.

This is as far back as my memory of the practice goes, so it may have started before 720K floppies were mainstream, but that’s why disk manufacturers now advertise the unformatted capacity of their drives instead of the formatted, aka usable, capacity.

they’ve been doing this for literally centuries

So it's Friday, December the fifth, 80 AD, 5:30pm, and Mozes is hacking away on his clay tablets to nail down the final tally of this week's administration of the number of cows his boss owns, and he goes: "Mother fucker! These Romans ripped me off again, sold me a clay tablet that only allows me to count to 720 cows, not the 751 I got! FUCK! Now I need another tablet and have to start tallying from the beginning, you mother fuckers!"

They’ve been doing this for literally centuries.

*Proceeds to talk about floppy disks

How long do you think digital computers have been around?

They’ve existed in at least two centuries

Hush! Don’t point it out! Lure him into a corner and steal his time machine!

For centuries. Justification: Jesus was dead for three days: from Friday afternoon (3pm?), day 1, through Saturday, day 2, and into Sunday early morning (6am?), day 3. Total elapsed time 39 hours. Digital computers were around last century (19xx) and this century (20xx), which is two centuries by the same logic. Also two millennia, but I find “centuries” a more satisfying word. Colossus went into operation in 1943, so that's 80 years elapsed time.

Fails to recognize exaggeration, thinks they’re clever.

Fails to aggerate, fails to be taken seriously