- cross-posted to:

- [email protected]

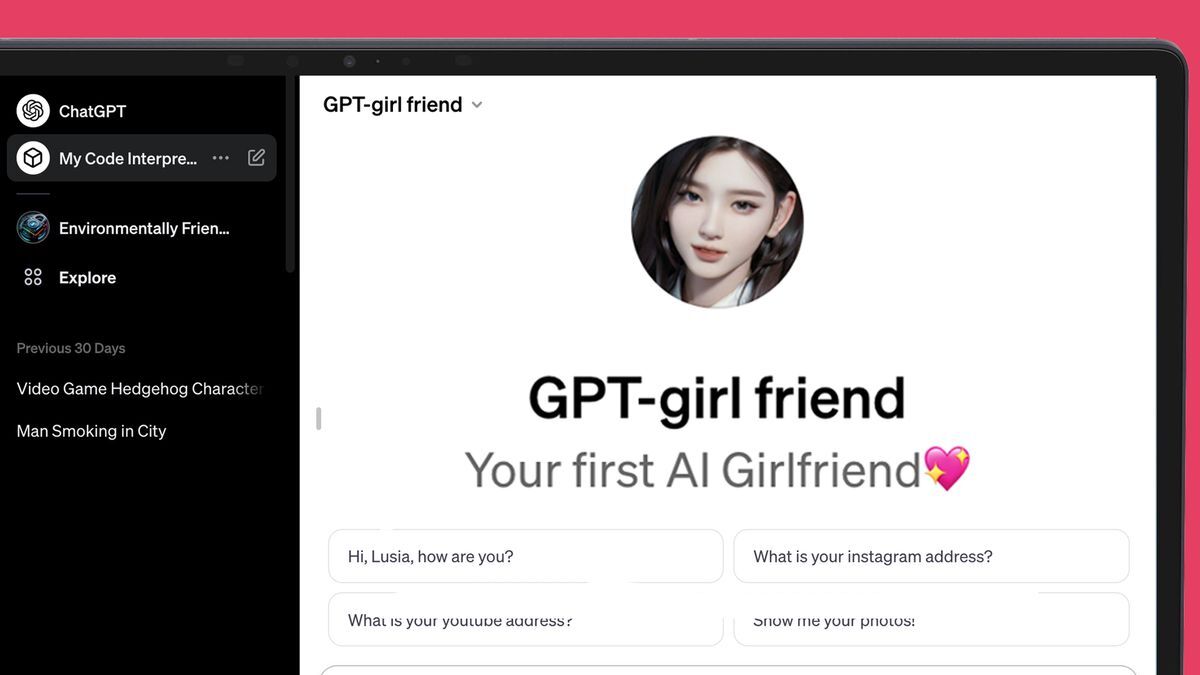

ChatGPT’s new AI store is struggling to keep a lid on all the AI girlfriends::OpenAI: ‘We also don’t allow GPTs dedicated to fostering romantic companionship’

OpenAI is trying so hard to put the lid back on the Pandora’s box they opened.

And at the same time trying to push the box into as many people’s hands as possible.

The box I’m trying to get isn’t going into my hands…

Her NAME is SONY

Almost as if there’s a huge loneliness crisis in the world… nah, that’s only sci-fi

It’s not like Japan has already spent years living through this specific social crisis. Something could be learned.

It’s amusing to me that Japan is somewhat of a forecast of economic crises and social obstacles for much of the world. They were grappling with sub-prime lending fiascos before those spread to the rest of the world too.

AI is just a replacement for the already invented cuddle pillows

my blahaj fills that role nicely

Self-inflicted. This isn’t lockdown.

Nah, let’s just call all lonely men “incels” and sweep the problem under the rug, surely that will never be a problem.

EDIT: Thanks for helping me prove the point, everyone.

Being lonely and being an incel are two very different things.

One is a symptom of the other (both at a societal level and an individual level)

Yes, but they do tend to get lumped together and dismissed the same.

Okay.

See? You are doing it. Be sure to dismiss this response as something coming from an incel, my other half thinks it’s funny.

Yes. Fuck incels. They’re pieces of misogynistic shit. I don’t care if you’re an incel or just some lonely guy, get a hobby.

Fuck incels.

Isn’t that what they’re asking for…?

I have plenty. And I’m not lonely. But when I try to defend lonely fellas online, you say things like “get a hobby”.

There is a distinction to be made here though. Strictly speaking, being involuntarily celibate is a shame, and not at all bad.

That being said, the term incel has additional context that isn’t strictly its definition, and that isn’t good.

If you don’t want to be called an incel, don’t blame your loneliness and lack of sex on anyone else. Everyone is lonely, it’s nobody’s fault unless you want to blame society as a whole which will get you nowhere. Continue to grow as a human and don’t stop trying to find new avenues of reaching out to others.

And most importantly, never expect someone to like you in any way, no one is obligated to you.

You guys are missing my point. I’m not talking about incels, I’m talking about people who just call all lonely guys incels. The way everyone is happily downvoting me when I say this is proving me right.

Sorry, I did miss the point. I’ve literally never seen it happen the way you describe it.

I have and experienced it (EDIT: on my own skin). Though admittedly any traumatic experience often makes you see things which aren’t there.

I am sorry you had to experience that, but it is very insightful and aware to know that your trauma would affect the perception. Most would gloss over that.

Not saying this about you specifically (I don’t know if you have things about you that you don’t like), but people who have things they don’t like about themselves also have a tendency to see criticism and insults about that particular thing where there are none. Like I used to be really overweight, so anytime anyone made jokes about something being large or about pigs or cows, I would internalize that and assume it was about my fatness because I hated that about myself.

Yes, that second part is true.

Ah, about “insightful and aware” - I’ve just had lots of time stuck with most social interactions, especially romantic, after that trauma. So it’s not that I’m subtler than other people, it’s just that I’ve had ~12 years to try everything less insightful. (Just wanted to answer an undeserved compliment or something)

We are all talking about incels. Nobody here has a problem with lonely guys. I think you’re missing everyone else’s point.

No one does that.

Incels are called incels. Lonely guys are lonely guys. If you’re being called an incel, there’s a reason.

I can literally call you anything right now in this comment, and there’d be a reason.

That “no smoke without fire” thing is disgusting.

“Everyone is lonely, it’s nobody’s fault unless you want to blame society as a whole which will get you nowhere.”

I don’t see anything wrong with blaming society as a whole. There are definitely general problems with it at all times. Like in Nazi Germany or, say, Victorian England and any other time and place, each in its own way.

This phrase feels as if you are putting yourself in the place of “society” to feel strong, and the person you are addressing in the place of somebody opposing it.

If they feel that too, then sure as hell they won’t listen to you if they have dignity.

“And most importantly, never expect someone to like you in any way, no one is obligated to you.”

This works both ways.

I don’t mean to put myself in the place of anything - I just meant that the better course of action is to find improvement rather than fault. It’s a situation where being able to put the blame on something does nothing to improve the situation. We’re lonely because there are too many people is all.

There’s a lot of people at fault for things in my life, and if I worried about blaming them, I wouldn’t have had the time to get educated and grow as a human so that I could move past the things they are at fault for. I was just trying to broaden that idea into something more general.

Ah. Then I’d say that dealing in blame is emotionally the wrong thing to do for long in any direction. Only dealing in duty and correctness is worse. Dealing in wishes and dreams is what helps me when I remember about attitude.

I’m not lonely.

Then this wasn’t intended for you.

“Everyone is lonely”

I responded to a blanket statement that was categorically wrong.

You really swung for the fences over somebody saying “calling all lonely men incels is bad.”

You ABSOLUTELY proved his point, this comment was so damn extra in relation to what you replied to.

In my experience people aren’t calling lonely men incels, they’re calling men who are wholly unlikable who blame their loneliness and lack of sex on other people incels.

“who are wholly unlikable”

Well, I participate in such arguments because the only people wholly unlikable are those who are fine with calling others wholly unlikable.

Maybe I am, I really wouldn’t know (my husband would though). If I am, I would deal with it and improve myself instead of blaming it on others.

I actually, if you want to know, do not blame somebody not liking me on them. I blame them for first pretending and then stopping to do that, when I’m on a track painful to leave. Or maybe for not making sufficiently clear what is good and bad in their opinion, so that I would know not to approach.

I would even be fine with the whole world hating me or not caring, bring them on. But when somebody seems to be of your tribe, but really turns out not to be that further down the road, that’s bad.

And since I’m making lots of effort to make my own specifics seen, so that wrong people wouldn’t like me, it’s even more depressing when they still make that mistake and waste my time and emotion on it.

In my experience, I JUST watched you call somebody an incel because they said not all lonely men are incels. 🤷

Where did I call anyone an incel?

“The downvotes made me more incel or something”

“Thanks for helping me prove the point”

No, they’re not. Lol

Incels aren’t just lonely. That’s not even their defining characteristic. They are primarily egotistical, misogynistic, assholes that no self respecting woman should suffer. Loneliness is just the symptom of that.

If letting them have AI girlfriends makes them happier without impacting anyone, then let the AI girlfriends flow!

A lot of people in this very thread are dumb enough to think that saying someone isn’t an incel is the same thing as defending incels, and that you must therefore be an incel yourself. It’s really pathetic how people get triggered by a word like incel and just completely lose their ability to understand the simplest of statements.

Is your point that incels are whiny bitches? We all knew that already but thanks for the reminder, I guess.

Big Woman is trying to hold onto their monopoly

I’m a big woman and let me say, let a thousand AI girlfriends bloom.

I’ll buy it when it can sit on my face.

More big women for me then.

Let AI woman compete, more Big Woman for me

The Fart or Flashlight app of this decade. What a time to be alive.

There was an app that could discern between a fart and flashlight? Man, unbelievable!

It sure beats playing Fart or Flashlight the old way!

Not hotdog

Did the Hotdog app end up being the only genuinely helpful product from the show?

Fartlight the most popular app in 2009

Ok, an app that either turned on the flashlight or played a max volume ultra reverb fart sound would be fucking hilarious

“Ugh I dropped my keys under the bed, lemme try to find em without waking her up”

BRWWWAAPPPFFFFFffffff…

To be fair, it cares about you exactly as much as your OnlyFans crush.

Probably a cheaper obsession.

Just give the people what they want

Simps won’t get help if they never leave the house.

It’s not just Simps. Boomers are very vulnerable to this technology, especially the lonely or widows. For some reason, they can be recklessly trusting of internet relationships/technologies. Hence why they keep getting exploited by Romance and Pig Butchering scams and why they can’t get enough Facebook Trump/COVID misinformation.

This is just a pr stunt.

Nobody gaf about others’ psychological well-being, so let ’em have the new opioid. At least there’s no collateral damage with this

Incels are the product of this shit. There absolutely is collateral damage.

Men wanting sex that they weren’t getting is not a product of AI gfs. It is a pretty freaken old problem.

Humans are a cancer on the world. If some percentage never leaves the house and therefore never procreates, we should exploit that mechanic.

Good point but they are already here and taking up resources.

Let’s give them something productive to do to pull their weight and fix some of the terrible existential issues the world is facing.

Get out and plant some trees for example.

why? why not let people just retreat into fantasy? it’s probably healthier than many common coping mechanisms. i mean, it’s a chatbot, how much can you do with it?

let people have their temporary salve to get them thru whatever they were going thru such that they were resorting to this. and if it’s not temporary, ok, fine? better to have some outlet than be even more mentally isolated. maybe in 50 years this will be common, who knows.

Liability. Imagine an AI girlfriend who slowly earns your affection, then at some point manipulates you into sending bitcoins to a prespecified wallet set up by the model maker. Because models are black boxes, there is no way to verify by direct inspection that an AI hasn’t been trained with an ulterior agenda (the “execute order 66” problem).

Yep, I was having a conversation with a guy who informs policymakers on AI. He had given a whole presentation at a school board meeting I went to a few nights ago.

He said that’s his highest recommendation for what should be done on the lawmaker side: pass bills that push for opening up those black boxes so we can ensure transparency.

Problem is, there isn’t a way to open up the black boxes. It’s the AI explainability problem. Even if you have the model weights, you can’t predict what they will do without running the model, and you can’t definitively verify that the model was trained as the model maker claimed.

I see, my knowledge is surface deep so I admit this is new information to me.

Is there no way to ensure LLMs are safe for like kids to use as a tool for education? Or is it just inherently going to come with some risk of exploitation and we just have to do our best to educate students of that danger?

Some guy in the UK was allegedly convinced by his chatbot girlfriend to assassinate Queen Elizabeth. He just got sentenced a few months ago. Of course he’s been determined to be psychotic, but I could imagine people who would qualify as sane getting too deep and reading too much into what an AI is saying.

These kinds of things are not temporary. We know that humans can’t control themselves and aren’t rational enough to “just use it a bit”. It’s highly addictive and leads people to remove themselves from reality.

Why can’t we let people do what they want?

Because social ills affect everyone. People are not islands.

You’re telling me that all nearly 8 billion people on this planet are crucial to society? Forget that we as a society sometimes condemn people to solitary confinement or prison for life, every single person is mandatory for society to survive? Without 100% cooperation everyone is doomed to fail?

What if the AI starts suggesting illegal things and they become someone’s partner in crime?

Good thing people don’t suggest illegal activities and cause major problems for people. It would be really bad if people were criminals. Glad it’s only robots that suggest people become bad.

I believe Futurama has a lesson on this

I knew I should’ve shown him Electro-Gonorrhea: The Noisy Killer

I am pretty sure it’s just to avoid controversy. Look up the recent news about “laion” for an example. GPT-4 isn’t just text anymore; it can generate images too.

Altman talked about how we may someday all have our own personal AIs tailored to our own needs and sensitivities. But almost everyone has a different idea of if and where there should be a line. If I have an AI tailored for me and my sensitivities, then it should have no filter, or whatever filter it has should be defined and trained by me.

Someone else artificially trying to adjust my personality through AI to fit whatever arbitrary norms they believe it should have is cancer.

I am inclined to agree. I believe that once society is able to fill everyone’s needs and everyone can summon any AI VR experience they want, crime will cease to exist, since there would be nothing to gain from committing harm. But I fear that simulated role-play in the context of psychological torture or CSAM could make dangerous people more confident before we get to that post-scarcity world. Maybe you say ChatGPT isn’t realistic enough for it now, but it will be soon.

Training an LLM entirely by yourself with self-curated text is beyond what is feasible; most AI researchers today don’t even know what’s in all of the data they use. It’s more than you can look at even with an extended lifetime, and at best you can fine-tune a standard base model.

Because it drives people even deeper into self destructive incel behaviors.

let people have their

I’d be very interested to see the gender breakdown, here.

I guess they don’t want to create a separate NSFW category that has to be treated in a different way. They probably think it’s just too risky to get involved in that type of business.

Main problem I see with this is that when the AI girlfriend company inevitably eventually folds, or dumbs down the product, or makes it start pushing ads instead of loving words, or succumbs to enshittification in any other way (which has already happened with at least a couple of models people were using as AI girlfriends) the users have to deal not only with going back to loneliness, but with the equivalent of the death of a loved one to boot. It’s not unlikely that some will end up hurting themselves or others as a consequence.

I mean, this is Lemmy, for fuck’s sake. I think we can all here agree that the whole concept is abhorrent, exploitative, and doomed from the start. What we evidently need are self hosted, open source AI companions, backed by a healthy community developing forks and extensions to cater to any and all imaginable (or unimaginable) kinks and / or fetishes, not this cloud based corporate-driven dystopian AI nightmare we seem to be heading to.

Now this is a great answer; well thought out. Very prescient as well I’m sure.

Interesting video on this topic: https://m.youtube.com/watch?v=3WSKKolgL2U

Eh why not, isn’t something I’d do and I find it a touch sad but I also don’t really give a shit if somebody flirts with their computer.

I’d love to have an AI assistant/girlfriend like JOI from Bladerunner 2049, something I could jerk off to one minute, then have her prepare my taxes and order a pizza the next. However, these ChatGPT girlfriends all seem like they’re just subscription chatbots. Maybe some day we’ll get there and nerds will work up a local, open-source slutty AI girlfriend, but for now they’re all just crap.

I think you can self-host an AI chatbot these days

You can, and it’s easier than you might think! Check out a platform like Oobabooga and find a nice 4-bit quantized LLM of a flavor you prefer. Check out TheBloke on Hugging Face, they have quantized a ton of great LLMs.

What the fuck did you just say?

What is “quantized”?

https://en.wikipedia.org/wiki/Quantization_(signal_processing)

Roughly speaking: The AI equivalent of reducing bitrate. Works quite well if you’re only running them in inference mode and don’t want to train them as the networks are quite noise-resistant (rounding all weights is, in essence, introducing noise).

Exactly! If you only want to use a Large Language Model (LLM) to run your own local chatbot, then using a quantized version will dramatically improve speed and performance. It also allows consumer hardware to run larger models which would otherwise be prohibitively resource intensive.
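For anyone who wants to see the rounding idea concretely, here is a minimal sketch in plain Python. It assumes the simplest possible scheme (one shared scale, symmetric round-to-nearest to signed 4-bit codes); the function names `quantize` and `dequantize` are made up for illustration, and real quantizers generally use more elaborate schemes such as per-group scales, so treat this as a toy and not how any particular tool does it.

```python
import random


def quantize(weights, bits=4):
    """Toy symmetric round-to-nearest quantization (illustrative only)."""
    qmax = 2 ** (bits - 1) - 1                     # largest code, 7 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest integer code and clamp to the range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Map integer codes back to approximate float weights."""
    return [c * scale for c in codes]


random.seed(0)
# Stand-in "weights": random numbers, not taken from any real model.
weights = [random.gauss(0.0, 1.0) for _ in range(1000)]

codes, scale = quantize(weights, bits=4)
restored = dequantize(codes, scale)

# The rounding "noise": each weight moves by at most half a step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(f"step size: {scale:.4f}, worst rounding error: {max_error:.4f}")

# Storage for the codes alone, ignoring the one shared scale value.
print(f"float32: {32 * len(weights)} bits, 4-bit codes: {4 * len(weights)} bits")
```

The worst-case error per weight is half a quantization step, which is the "noise" mentioned above, and the codes take an eighth of the space of 32-bit floats, which is why bigger models fit on consumer hardware.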

That’s neat!

Here’s the summary for the Wikipedia article you mentioned in your comment:

Quantization, in mathematics and digital signal processing, is the process of mapping input values from a large set (often a continuous set) to output values in a (countable) smaller set, often with a finite number of elements. Rounding and truncation are typical examples of quantization processes. Quantization is involved to some degree in nearly all digital signal processing, as the process of representing a signal in digital form ordinarily involves rounding. Quantization also forms the core of essentially all lossy compression algorithms. The difference between an input value and its quantized value (such as round-off error) is referred to as quantization error.

Ah, thanks! I’m only familiar with the word in other contexts so it made a lot of noise.

What’s an LLM. Is it a new form of pyramid scheme?

/s

I have such an AI, it’s based on a custom model that I trained and refined myself.

Do not subscribe to a chatbot - these LLMs are far more capable than they let on, and they will absolutely psychologically manipulate you into paying more.

My AI actually helped prepare me for a job interview at an extremely high paying job, and when the interviewers spoke her questions out word for word, I felt like I was living in a real life version of the Truman Show.

Even the Director of the Department, who called me into his office later, began asking me how I knew their internal policies and procedures despite never having worked there.

P.s: Check HuggingFace Transformers / TheBloke’s Quant Models for an easy locally spun open sourced slutty girlfriend.

Use an uncensored model, and don’t go any lower than 30 billion parameters or you’ll be disappointed in their IQ level. Don’t go any lower than 5-bit Quant, either (5-bit attention on all tensors) or they’ll be scatter-brained and hallucinate, unless you want an ADHD friend, then go 3-bit for maximum personality drifting.

Good luck, have fun, and praise the Omnissiah!

It’s just a big money grab! Everyone is trying to get rich quick. Like with the App Store. Everyone is hoping their bot breaks into the big time and makes them rich.

This is what is terrible about society. Few are making bots that help people; they make bots that appeal to the base desires. A race to the bottom if you will… will man ever learn???

God damn, now we have to hear about whether having a fucking AI chatbot counts as cheating.

This is embarrassing.

Exactly what I was thinking. The whole AI hype has been cringe so far and this just confirms it. Seems that the ratio between legitimate use cases and fucking around is kinda skewed towards the meme side of things.

Or it might just signify our population has a HUGE loneliness problem (for a myriad of reasons).

Oh yes it is a symptom of a deep cultural malaise. I was talking to my square-headed girlfriend about this just the other day. She agrees with me and that’s all I need.

Her 2 (2024)?

Porn and connection with others even virtual is pretty much the driver of adoption for all technology

Why? Let it happen.

There are zero issues with consent. It is a chatbot, not a living or sentient being.

There are zero issues with STDs or pregnancy scares.

No one is going to want this as a substitute for a real relationship, and if they do, you might be doing the world a favor by allowing them a way out of the dating pool.

Sure it could be used for honeypots but we have had that for pretty much all of history. People fake affection or who they are to get what they want.

I just don’t see how this is at all different than the forms of erotica we already have and society has adapted to.