- cross-posted to:

- [email protected]

About how far does this leave us from a usable quantum processor? How far from all current cryptographic algorithms being junk?

The latest versions of TLS already support post-quantum crypto, so no, it’s not all of them. For the ones that are vulnerable, we’re way, way far off from that. It may not even be possible to have enough qubits to break those at all.

Things like simulating medicines, folding proteins, and logistics are much closer, very useful, and more likely to be practical in the medium term.

Is there gov money in folding proteins though? I assume there are a lot of 3 letter agencies that want decryption, with a lot more funding.

There’s plenty of publicly funded research for that, yes.

Three letter agencies also want to protect their own nation’s secrets. They have as much interest in breaking it as they do protecting against it.

yes of course, and nuclear arsenal build up doesn’t exist because govts have that kinda foresight

Except there’s evidence they do, in fact, go both directions.

For example, DES had its s-boxes messed with by the NSA. At the time, the thought was that they were intentionally weakening it. Some years later, public cryptographers developed differential cryptanalysis for breaking ciphers. They found that the new s-boxes in DES made it resistant to differential cryptanalysis. It appears the NSA had already developed the technique and had made DES stronger, not weaker. Because again, they need to protect their own stuff, too, and they used and promoted DES to get there.

They also gave it a really short key that was expected to be broken by the '90s, which is also exactly what happened.

They appear to be going a similar direction with elliptic curves. They seem to be resistant against certain attacks, and the NSA was promoting them earlier than most public cryptographers.

Algorithms will be easier and faster to fix than the process of getting this breakthrough to viability

Maybe they can use the same techniques for keeping their product management and feature roadmap for more than an hour.

Just in time for the fall of American democracy. What could possibly go wrong.

Seeing quantum computers work will be like seeing mathemagics at work, doing it all behind the scenes. Physically (for the small ones) it looks the same, but abstractly it can perform all kinds of deep mathematics.

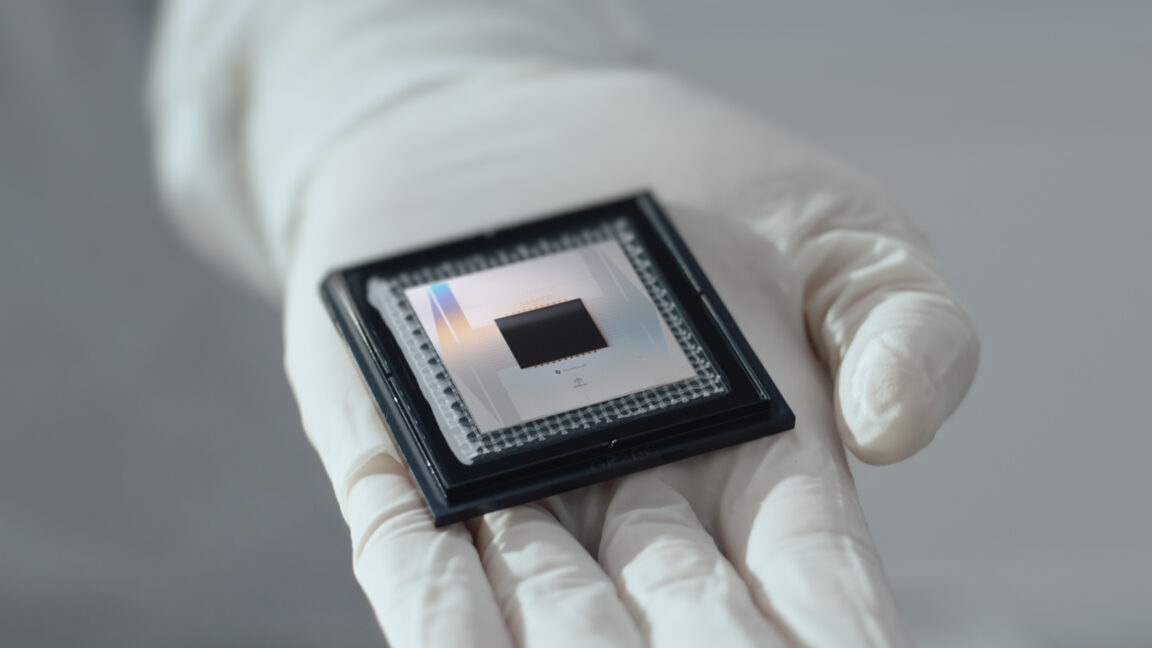

108 qubits, but error correction duty for some of them?

What size RSA key can it factor “instantly”?

Currently none. I think it’s allegedly 2000 qubits to break RSA.

afaik, without a need for error correction, a quantum computer with 256 qubits could break an old 256-bit RSA key. An x-bit RSA key is made by taking two primes of about x/2 bits each and multiplying them together. Factoring numbers that size is relatively simple on both classical and quantum computers. However, the larger the number, the more billions of billions of years it takes a classical computer to factor it. The limit for a quantum computer is how many “practical qubits” it has. OP’s article did not answer this, and so far no quantum computer has been able to factor a number any faster than your phone can in under half a second.
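To make the RSA point concrete, here is a minimal Python sketch, assuming toy values: an RSA modulus is just the product of two primes, and for small ones plain trial division recovers them immediately. The function name `factor_semiprime` and the primes 61 and 53 are illustrative choices, not anything from the article.

```python
def factor_semiprime(n: int) -> tuple[int, int]:
    """Return (p, q) with p * q == n using trial division; only practical for small n."""
    if n < 4:
        raise ValueError("n is too small to be a product of two primes")
    if n % 2 == 0:
        return 2, n // 2
    candidate = 3
    while candidate * candidate <= n:
        if n % candidate == 0:
            return candidate, n // candidate
        candidate += 2
    raise ValueError("n has no nontrivial factor (it is prime)")

# Toy "RSA modulus": the product of two small primes (illustrative values).
p, q = 61, 53
n = p * q
print(factor_semiprime(n))  # → (53, 61)
```

The loop runs up to roughly the square root of `n`, so every extra bit in the key multiplies the work. That is why this approach is hopeless for real key sizes on a classical machine.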

Which hour ? If they create real quantum computer they can start identifying person that creates reality for all of us, assuming reality is broadcasted by collective mind, I doubt they can do it right now and I am sure the moment they start that person will log out from internet. Good bye then.

google can walk up the passageway of elton john for all i care!

I know a good therapist, if need be!

There’s a dime stuck in the road behind our local store, tails side up, for over 15 years. And that doesn’t even need error correction.

Why does it sound like technology is going backwards more and more each day?

Someone please explain to me how anything implementing error correction is even useful if it only lasts about an hour?

I mean, that’s literally how research works. You make small discoveries and use them to move forward.

What’s to research? A fucking abacus can hold data longer than a goddamn hour.

Are you really comparing a fucking abacus to quantum mechanics and computing?

they are baiting honest people with absurd statements

Russian election interference money dried up and now they’re bored

They are shaking some informative answers out of literate people so I’m getting something out of it :D

True

Absurdly ignorant questions that, when answered, will likely result in people knowing more about quantum computing than they did before.

And if it stopped there, we’d all be the better for it :)

Don’t feed the trolls.

Yes, as far as memory integrity goes anyways. Hell, even without an abacus, the wet noodle in my head still has better memory integrity.

Are you aware that RAM in your computing devices loses information if you read the bit?

Why don’t you switch from smartphone to abacus and dwell in the anti science reality of medieval times?

And that it loses data after merely a few milliseconds if left alone? To account for that, DDR5 reads and rewrites its data every 32 ms.

You’re describing how ancient magnetic core memory works, that’s not how modern DRAM (Dynamic RAM) works. DRAM uses a constant pulsing refresh cycle to recharge the micro capacitors of each cell.

And on top of that, SRAM (Static RAM) doesn’t even need the refresh circuitry; it just works and holds its data as long as it remains powered. It only takes 2 discrete transistors, 2 resistors, 2 buttons and 2 LEDs to demonstrate this on a simple breadboard.

I’m taking a wild guess that you’ve never built any circuits yourself.

I’m taking a wild guess that you completely ignored the subject of the thread to start an electronics engineering pissing contest?

Do you really trust the results of any computing system, no matter how it’s designed, when it has pathetic memory integrity compared to ancient technology?

That is not a product. This is research.

And you would have been there shitting on magnetic core memory when it came out. But without that we wouldn’t have the more advanced successors we have now.

Nah, core memory is alright in my book, considering the era of technology anyways. I would have been shitting on the Williams tube CRT memory system…

https://youtube.com/watch?v=SpqayTc_Gcw

Though in all fairness, at the time even that was something of progress.

Doubt.

Core memory loses information on read and DRAM is only good while power is applied. Your street dime will be readable practically forever and your abacus is stable until someone kicks it over.

You’re not the arbiter of what technology is “good enough” to warrant spending money on.

Obvious troll is obvious

Must be the dumbest take on QC I’ve seen yet. You expect a lot of people to focus on how it’ll break crypto. There’s a great deal of nuance around that and people should probably shut up about it. But “dime stuck in the road is a stable datapoint” sounds like a late 19th century op-ed about how airplanes are impossible.

The internet is pointless, because you can transmit information by shouting. /s

AND I can shout while the power is out. So there!

Not to mention that quantum cryptography has found ways to prevent that already.

Based take.

You disrespect the meaning of based

He meant base

As stable as that dime is, it’s utterly useless for all practical purposes.

What Google is talking about is making a stable qubit, the basic unit of a quantum computer. It’s extremely difficult to make a qubit stable, and since qubits underpin how a quantum computer works, instability introduces noise and errors into its calculations.

Stabilising a qubit in the way Google’s researchers have done shows that in principle if you scale up a quantum computer it will get more stable and accurate. It’s been a major aim in the development of quantum computing for some time.

Current quantum computers are small and error prone. The researchers have added another stepping stone on the way to useful quantum computers in the real world.

It sounds like you’re saying a large quantum computer is easier to make than a small quantum computer?

That is one of the things the article says. That making certain parts of the processor bigger reduces error rates.

I think that means the current quantum computers made using photonics, right? Those are really big though.

Do you have any idea the amount of error correction needed to get a regular desktop computer to do its thing? Between the peripheral bus and the CPU, inside your RAM if you have ECC, between the USB host controller and your printer, between your network card and your network switch/router, and so on and so forth. It’s amazing that something as complex and using such fast signalling as a modern PC does can function at all. At the frequencies that are being used to transfer data around the system, the copper traces behave more like radio frequency waveguides than they do wires. They are just “suggestions” for the signals to follow. So there’s tons of crosstalk/bleed over and external interference that must be taken into account.

Basically, if you want to send high speed signals more than a couple centimeters and have them arrive in a way that makes sense to the receiving entity, you’re going to need error correction. Having “error correction” doesn’t mean something is bad. We use it all the time. CRC, checksums, parity bits, and many other techniques exist to detect and correct for errors in data.
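As a small illustration of two of the techniques named above, here is a Python sketch, assuming a made-up five-byte message and a single flipped bit. `zlib.crc32` is the standard-library CRC-32; `parity_bit` is a hypothetical helper written for this example. Both checks only detect the error here. Actually correcting it takes a retransmission or a code with more redundancy.

```python
import zlib

def parity_bit(data: bytes) -> int:
    """Even-parity bit: 1 if the number of set bits in data is odd, else 0."""
    return sum(bin(byte).count("1") for byte in data) % 2

message = b"hello"
sent_parity = parity_bit(message)
sent_crc = zlib.crc32(message)

# Flip one bit in transit (hypothetical corruption of the first byte).
corrupted = bytes([message[0] ^ 0b00000001]) + message[1:]

parity_detects = parity_bit(corrupted) != sent_parity
crc_detects = zlib.crc32(corrupted) != sent_crc
print(parity_detects, crc_detects)  # → True True
```

A single parity bit misses any even number of flipped bits, which is one reason real links layer a CRC on top.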

I’m well aware. I’m also aware that the various levels of error correction in a typical computer manage to retain the data integrity potentially for years or even decades.

Google bragging about an hour, regardless of it being a different type of computer, just sounds pathetic, especially given all the money being invested in the technology.

Traditional bits only have to be 0 or 1. Not a coherent superposition.

Managing to maintain a stable qubit for a meaningful amount of time is an important step. The final output from quantum computation is likely going to end up being traditional bits, stored traditionally, but superpositions allow qubits to be much more powerful during computation.

Being able to maintain a cached superposition seems like it would be an important step.

(Note: I am not even a quantum computer novice.)

Because quantum physics. A qubit isn’t 0 or 1, it’s both and everything in between. You get a result as a distribution, not as distinct values.
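A rough way to picture the “distribution, not distinct values” part is the Python sketch below. It assumes an idealised single qubit in an equal superposition and simply samples measurement outcomes with the Born rule (probability equals the squared magnitude of the amplitude). It is a classical simulation for intuition only, and the names `measure`, `amp0` and `amp1` are made up for the example.

```python
import math
import random

def measure(amp0: complex, amp1: complex, rng: random.Random) -> int:
    """Sample one measurement outcome (0 or 1) using the Born rule."""
    p0 = abs(amp0) ** 2
    return 0 if rng.random() < p0 else 1

# Equal superposition: both amplitudes are 1/sqrt(2), so each outcome has probability 0.5.
amp0 = amp1 = 1 / math.sqrt(2)
rng = random.Random(0)  # fixed seed only so the sketch is repeatable
shots = 10_000
ones = sum(measure(amp0, amp1, rng) for _ in range(shots))
print(f"0: {shots - ones}, 1: {ones}")
```

Each individual shot still comes out as a plain 0 or 1. The distribution only shows up when the same preparation is repeated many times.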

Qubits are represented as (for example) quantumly entangled electron spins. And due to the nature of quantum physics, they are not very stable, and you cannot measure a value without influencing it.

Granted, my knowledge of quantum computing is very hand-wavy.

and you cannot measure a value without influencing it.

Which, to me, kinda defeats the whole purpose. I’m yet to wrap my head around this whole quantum thing.

It’s important to how they operate. The idea is that when you measure the value, it collapses into the right answer.

I do get that, yes it’s more complicated than I can fully wrap my brain around as well. But it also starts to beg the question, how many billions of dollars does it take to reinvent the abacus?

Again, I realize there’s a bit of a stark difference between the technologies, but when does the pursuit of over-complicated technology stop being worth it?

Shit, look at how much energy these AI datacenters consume, enough to power a city or more. Look at how much money is getting pumped into these projects…

Ask the AI how to deal with the energy crisis, I’ll only believe it’s actually intelligent when it answers “Shut me and all the other AI datacenters off, and recycle our parts for actual useful purposes.”

Blowing billions on quantum computing ain’t helping feed, clothe and house the homeless…

Contrarian much? You have multiple answers to exactly the question you asked.

As a species, the one thing that defines us is the pursuit of technology to overcome our natural physical ability. We are currently hitting a wall in regard to electron based computing.

I think you’re confusing technology with politics to the point you’re just making a point unrelated to the topic.

All tech raises the standard. If it’s then used by people to hoard resources and create an imbalance in quality of life, that’s a policy issue, not a technology one.

What a sad view of humanity to think that our one defining characteristic should be pursuit of technology rather than the ability to intelligently collaborate and thereby form communities with a shared purpose.

I can assure you that the success of human survival throughout the history of our species has had far more to do with community and resourcefulness than with technological advancement. In fact it should be clear by now technological advancement devoid of communal spirit will be the very thing that brings an untimely end to our entire species. Our technology is destroying the climate we depend on and depleting the soil that we need for growing food, to say nothing of the nuclear bombs that could wipe us out with the wrong individuals in positions of power.

I tend to agree with this. It’s why I’ve become less and less interested in the advancement of technology and more interested in ways to use the tech we have to build community.

You’ve kind of whiffed what I said, at no point did I talk about tech over all else like some kind of Adeptus Mechanicus :D

My take here is that our grasp of tech is what allowed us to surpass other animals. Again, looking at “technology” in some really shallow one dimensional way. There are tons of environmental and communal benefits we’ve gained through our technological pursuits, the sad view is maybe thinking all tech things are bad and viewing that part of our world only in its moral inferiorities. Our domestication of fire being a prime example of a technology benefitting our social and communal enrichment.

Good job moving the conversation further away from the post

I’m directing my criticism specifically on the technological advancement which is devoid of communal spirit, not on all technological advancement categorically.

Crediting human achievement to technological advancement is a mistake in my opinion. Technological advancement is not inherently good or bad. Communal spirit is what determines whether technology yields positive or negative outcomes. That’s the real ingredient behind everything humans have achieved throughout history.

Sadly techno-optimism has become a prevailing mindset in today’s world where people and institutions don’t want to take responsibility for the consequences of their actions because of belief that as-yet-unknown technological advancement will bail us out in the future, even when there’s no evidence that it will even be physically possible.

But what I said is that your view is a sad one, not an incorrect one. The truth is, technological advancement may truly end up being the defining characteristic of humanity. After all, when we think about extinct species, we tend to associate them most strongly with what made them extinct. Just as we associate the dinosaurs most strongly with a meteor, maybe an outside observer will some day associate humanity most strongly with the technology that sent us out in a blaze of glory.

The Voyager 1 is still (mostly) ticking after almost 50 years with basically ancient technology by today’s standards, and it’s been through the hell of deep space, radiation and shit all that time.

What’s wrong with old technology if it still works? I don’t care what all magical computations a quantum computer can do, a mere hour of data retention just sounds pathetic in comparison.

You know we’re going to lose contact with V1 this decade, and as of last year the data stopped making sense? Which ties into my criticism of your other comment: we’re getting close (in the grand scheme) to how small we can make a transistor, so do we just make clusters of electron-based compute, each running its own resources, or do we invest in finding a better, more efficient way?

Thank you, at least someone gets the gist of what I mean 👍

Blowing billions on quantum computing ain’t helping feed, clothe and house the homeless…

Your problem is capitalism, not QC.

“AI” as it stands now is unrelated to quantum computing

No, they both very much share something in common. Money and resources, that could otherwise be invested in trying to actually fix the world’s problems.

What are they gonna do with a quantum computer, cure cancer? Then by the time the scientists get to check out the results, the results done got corrupted because of pathetic memory integrity, and it somehow managed to create a new type of cancer with the corrupted results…

Well yes, quite possibly, through protein behavior modeling. Do some reading !

Ya know, as much hype as there has been for the idea of quantum computing, I haven’t even so much as seen a snippet of source code for it to even say Hello World.

Even if that’s not exactly what these machines are meant for, seriously, where’s even a snippet of code for people to even get a clue how (and if) they even work as they’re hyped to be?

Nobody sees what they don’t look for. This is seven seconds of using duckduckgo with the following query : “what does code for a quantum computer look like?”

https://cstheory.stackexchange.com/questions/9381/what-would-a-very-simple-quantum-program-look-like

https://dl.acm.org/doi/10.1145/3517340#sec-3

I don’t pretend to understand this, as I’m not a computer scientist, even less so a quantum scientist. Quite honestly, if you allow me a bit of criticism, I think you’re interacting with this whole topic in bad faith. Moving goalposts, obviously not doing any kind of documentation effort before criticizing an entire field of research, claiming that development efforts should go towards some vaguely defined “fixing the world problems”…
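On the “Hello World” question: the usual first quantum program prepares an entangled pair (a Bell state) with two gates, a Hadamard and a CNOT. The sketch below is plain Python that tracks the four amplitudes by hand, so it is a classical simulation of what such a circuit computes and not code for any particular quantum SDK. The function names are made up for illustration, and it assumes real-valued amplitudes.

```python
import math

# State of two qubits as amplitudes for |00>, |01>, |10>, |11> (first qubit is the left bit).
state = [1.0, 0.0, 0.0, 0.0]

def hadamard_on_first(s):
    """Apply a Hadamard gate to the first qubit."""
    h = 1 / math.sqrt(2)
    return [h * (s[0] + s[2]), h * (s[1] + s[3]),
            h * (s[0] - s[2]), h * (s[1] - s[3])]

def cnot_first_controls_second(s):
    """Flip the second qubit when the first is 1: swaps the |10> and |11> amplitudes."""
    return [s[0], s[1], s[3], s[2]]

bell = cnot_first_controls_second(hadamard_on_first(state))
# Amplitudes are real here, so squaring gives the measurement probabilities.
probabilities = [round(a * a, 3) for a in bell]
print(probabilities)  # → [0.5, 0.0, 0.0, 0.5]
```

The result says the two qubits are only ever measured as 00 or 11, each half the time. That correlation is the “hello” of quantum programming.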

But this is not just any abacus, it’s one that calculates all the results at once. That is a disruptive leap forward in computing power.

The computers we have today help to do logistics to “feed, clothe and house the homeless”. They also help you to advocate to do more. How much of that would be comprehensible to someone living in 1900?

I’m not sure that homelessness is a problem quantum computing or AI are suitable for. However, AI has already contributed in helping to solve protein folding problems that are critical in modern medicine.

Solving homelessness and many other problems isn’t as resource-constrained as you think. It’s more about the will to solve them, and who profits from leaving them unsolved. We have known for decades that providing homes for the homeless in a large city actually saves the city money, but we’re still not doing it. Renewable energy has been cheaper than fossil fuels for almost as long. Medicare for all would cost significantly less than the US private healthcare system, and would lead to better results, but we aren’t doing that either.

it only lasts for an hour

“Only”? The “industry standard” is less than a millisecond.

Show the academic world how many computational tasks the physical structure of that coin has solved in the 15 years.

You’re forgetting the difference between processing and memory. The posted article is about memory.

If the memory sucks compared to standards of half a century ago, then they just suck.

How many calculations can your computer do in an hour? The answer is a lot.

Indeed, you’re very correct. It can also remember those results for over an hour. Hell, a jumping spider has better memory than that.

The output of a quantum computer is read by a classical computer and can then be transferred or stored for as long as you like using traditional means.

The lifetime of the error-corrected qubit mentioned here limits how complex a quantum calculation the quantum computer can run. And an hour is a really, really long time by that standard.

Breaking RSA or other exciting things still requires a bunch of these error corrected qubits connected together. But this is still a pretty significant step.

Well riddle me this, if a computer of any sort has to constantly keep correcting itself, whether in processing or memory, well doesn’t that seem unreliable to you?

Hell, with quantum computers, if the temperature ain’t right and you fart in the wrong direction, the computations get corrupted. Even when you introduce error correction, if it only lasts an hour, that still doesn’t sound very reliable to me.

On the other hand, I have ECC ChipKill RAM in my computer, I can literally destroy a memory chip while the computer is still running, and the system is literally designed to keep running with no memory corruption as if nothing happened.

That sort of RAM ain’t exactly cheap either, but it’s way cheaper than a super expensive quantum computer with still unreliable memory.

Well riddle me this, if a computer of any sort has to constantly keep correcting itself, whether in processing or memory, well doesn’t that seem unreliable to you?

Error correction is the study of the mathematical techniques that let you make something reliable out of something unreliable. Much of classical computing heavily relies on error correction. You even pointed out error correction applied in your classical computer.
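A minimal classical sketch of “reliable out of unreliable”, assuming each stored copy of a bit flips independently with probability `p`: keep several copies and take a majority vote. This is the textbook repetition code, not the scheme in Google’s paper. Qubits cannot simply be copied (the no-cloning theorem), so quantum codes are built differently, but the payoff from adding redundancy is similar in spirit.

```python
from math import comb

def encode(bit: int, copies: int) -> list[int]:
    """Repetition code: store the same bit several times."""
    return [bit] * copies

def decode(received: list[int]) -> int:
    """Majority vote recovers the bit if fewer than half the copies flipped."""
    return 1 if sum(received) * 2 > len(received) else 0

def logical_error_rate(p: float, copies: int) -> float:
    """Probability that more than half of `copies` independent bits flip (odd `copies`)."""
    return sum(comb(copies, k) * p**k * (1 - p)**(copies - k)
               for k in range(copies // 2 + 1, copies + 1))

word = encode(1, 5)
word[0] ^= 1  # two of the five copies get corrupted
word[3] ^= 1
print(decode(word))  # → 1

# With a 10% chance of each copy flipping, more copies means fewer decoding failures.
for copies in (1, 3, 5, 7):
    print(copies, logical_error_rate(0.1, copies))
```

With `p = 0.1` the failure rate drops from 0.1 for a single copy to about 0.028 for three and keeps shrinking from there, as long as the per-copy error rate stays below one half.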

That sort of RAM ain’t exactly cheap either, but it’s way cheaper than a super expensive quantum computer with still unreliable memory.

The reason so much money is being invested in the development of quantum computers is mathematical work that suggests a sufficiently big quantum computer will be able to solve useful problems in an hour that would take the world’s biggest classical computer thousands of years to solve.

Why do we humans even think we need to solve these extravagantly over-complicated formulas in the first place? Shit, we’re in a world today where kids are forgetting how to spell and do basic math on their own, no thanks to modern technology.

Don’t get me wrong, human curiosity is an amazing thing. But that’s a two edged sword, especially when we’re augmenting genuine human intelligence with the processing power of modern technology and algorithms.

Just because we can, doesn’t necessarily mean we should. We’re gonna end up with a new generation of kids growing up half dumb as a stump, expecting the computers to give us all the right answers.

Smart technology for dumb people…

Why do we humans even think we need to solve these extravagantly over-complicated formulas in the first place?

Because those questions could do things like cure disease or help us better understand the universe or a million other things

Shit, we’re in a world today where kids are forgetting how to spell and do basic math on their own, no thanks to modern technology.

Not because of it, either. This research isn’t really related to that kind of tech, either

Just because we can, doesn’t necessarily mean we should. We’re gonna end up with a new generation of kids growing up half dumb as a stump, expecting the computers to give us all the right answers.

This isn’t going to be for daily normal use, you’re projecting fear at the wrong tech

Why do we humans even think we need to solve these extravagantly over-complicated formulas in the first place? Shit, we’re in a world today where kids are forgetting how to spell and do basic math on their own, no thanks to modern technology.

lol.

All of modern technology boils down to math. Curing diseases, building our buildings, roads, cars, even how we do farming these days is all heavily driven by science and math.

Sure, some of modern technology has made people lazy or had other negative impacts, but it’s not a serious argument to say continuing math and science research in general is worthless.

Specifically relating to quantum computing, the first real problems to be solved by quantum computers are likely to be chemistry simulations which can have impact in discovering new medicines or new industrial processes.

“Let’s use deuterium (stable) instead of tritium (t½ = 12.3y) so our nukes don’t expire in a few years.”

“Will it work?”

“No but it will be stable.”

“I can’t believe you haven’t been fired earlier”