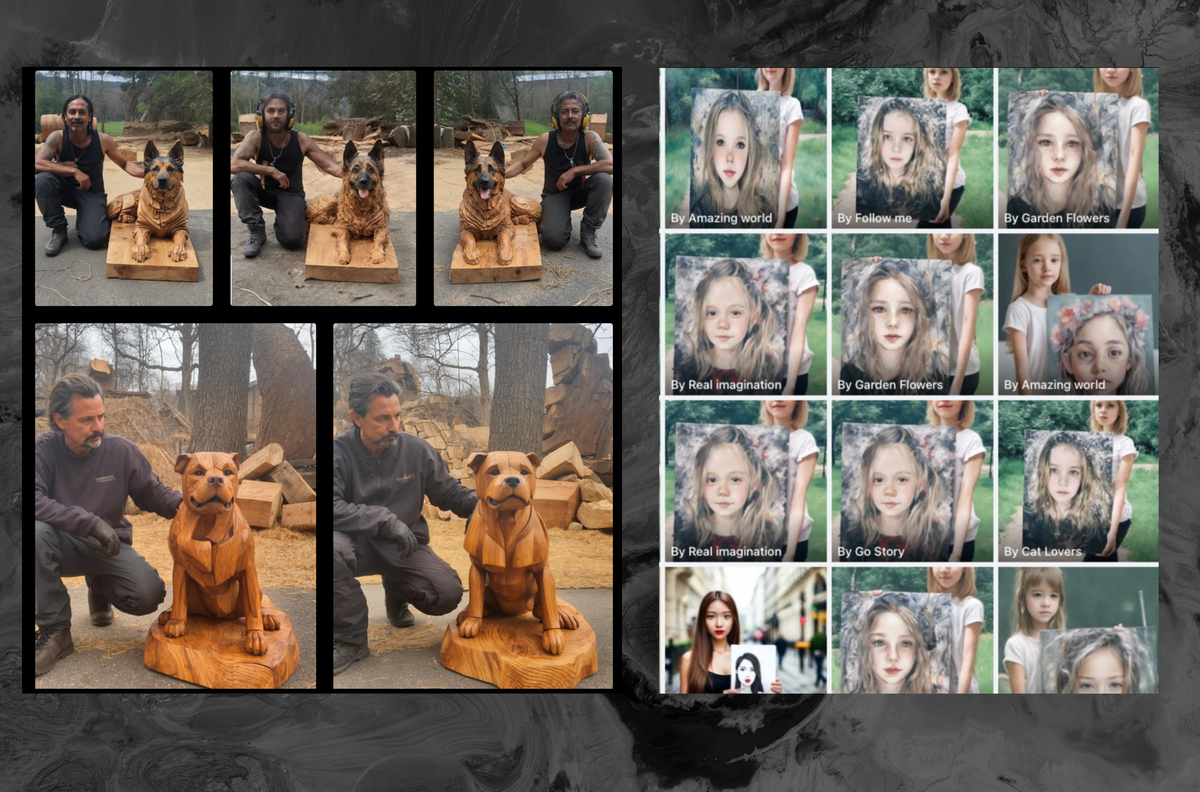

This is going to get soooo much more treacherous as this becomes ubiquitous and harder to detect. Apply the same pattern, but instead of wood carvings, it’s an election, or a sexual misconduct trial, or a war.

Our ability to make sense of things that we don’t witness personally is already in bad shape, and it’s about to get significantly worse. We aren’t even sure how bad it is right now.

Imagine an image like Tank Man, or the running Vietnamese girl with napalm burns on her skin, but AI-generated at the right moment.

It could change the course of nations. As lies always could.

There were no WMDs in Iraq.

Sure, but now you can make a video of Saddam giving a tour of a nuclear enrichment facility.

Wearing a tutu. With Dora the Explorer.

Most people don’t remember this, or weren’t alive at the time, but the whole Colin Powell event at the UN was intended to stop the weapons inspectors.

France (remember the Freedom Fries?) wanted to allow the weapons inspectors to keep looking until they could find true evidence of WMDs. The US freaked out because France said it wasn’t going to support an invasion of Iraq, at least not yet, because the inspectors hadn’t found anything. That meant that the Security Council wasn’t going to approve the resolution, which meant that it was an unauthorized action, and arguably illegal. In fact, UN Secretary General Kofi Annan said it was illegal.

> Following the passage of Resolution 1441, on 8 November 2002, weapons inspectors of the United Nations Monitoring, Verification and Inspection Commission returned to Iraq for the first time since being withdrawn by the United Nations. Whether Iraq actually had weapons of mass destruction or not was being investigated by Hans Blix, head of the commission, and Mohamed ElBaradei, head of the International Atomic Energy Agency. Inspectors remained in the country until they withdrew after being notified of the imminent invasion by the United States, Britain, and two other countries.

https://en.wikipedia.org/wiki/United_Nations_Security_Council_and_the_Iraq_War

> On February 5, 2003, the Secretary of State of the United States Colin Powell gave a PowerPoint presentation[1][2] to the United Nations Security Council. He explained the rationale for the Iraq War which would start on March 19, 2003 with the invasion of Iraq.

https://en.wikipedia.org/wiki/Colin_Powell's_presentation_to_the_United_Nations_Security_Council

The whole point of Colin Powell burning all the credibility he’d built up over his entire career was to say “we don’t care that the UN weapons inspectors haven’t found anything, trust me, the WMDs are there, so we’re invading”. Whether or not he (or anybody else) truly thought there were WMDs is a bit of a non-issue. What mattered was that they were a useful pretext for the invasion. Initially, the US probably hoped that the weapons inspectors would find some, which would make it easy to justify the invasion. The fact that none had been found was a real problem.

In the end, we don’t know if it was a lie that the US expected to find WMDs in Iraq. Most of the evidence suggests that they actually thought there were WMDs there. But the evidence also suggests that they were planning to invade regardless of whether or not there were WMDs.

Great summary 👏 I definitely have some cached thoughts about that era, but didn’t remember it that clearly. That WP page with the actual PowerPoint slides is wild.

Sure, but now you’ll be able to sway all those people who were on the fence about believing the lie until they see the “evidence”

It’s already happening to some extent (I think still a small extent). I’m reminded of this Ryan Long video making fun of people who follow wars on Twitter. I can say the people who he’s making fun of are definitely real: I’ve met some of them. Their idea of figuring out a war or figuring out which side to support basically comes down to finding pictures of dead babies.

At 1:02 he specifically mentions people using AI for these images, which has definitely been cropping up here and there in Twitter discussions around Israel-Palestine.

And it almost certainly will. Perhaps has already.

And the flip side is also a problem.

Now legitimate evidence can be dismissed as “AI generated”.

Relevant:

Criminals will start wearing extra prosthetic fingers to make surveillance footage look like it’s AI generated and thus inadmissible as evidence

https://twitter.com/bristowbailey/status/1625165718340640769?lang=en

Well that’s a new one lol, hadn’t thought of that. That’s another level of planning

Exactly. They’re two sides of the same coin. Being convinced by something that isn’t real is one type of error, but refusing to be convinced by something that is real is just as much of an error.

Some people are going to fall for just about everything. Others are going to be so apprehensive about falling for something that they never believe anything. I’m genuinely not sure which is worse.

We already saw that with nothing more than two words. Trump started the “fake news” craze, and now 33% of Americans dismiss anything that contradicts their views as fake news, without giving it any thought or evaluation. If a catchphrase is that powerful, imagine how much more powerful video and photography will be. Even in 2019 there was a deepfake floating around of Biden with a Gene Simmons tongue, licking his lips, and I personally know several people who thought it was real.

Great example. Yeah, I’ve had to educate family members about deepfakes because they didn’t even know that they were possible. This was on the back of some statement like “the only way to know for sure is to see video.” Uh… Sorry fam, I have some bad news…

Analog is the way to go now

It’s already happening. Adobe is selling them, but even if they weren’t, it’s not hard to do.

I think the worst of it is going to be places like Facebook, where people already fall for terrible and obvious Photoshop images. They won’t notice if there are mistakes, and as AI gets better there will be fewer mistakes anyway (DALL-E used to be awful at hands, not so bad now). Even smart folks will fall for these, though.

This doesn’t surprise me, given how messy Facebook has become. What does disturb me is people not being able to recognize that they are AI-generated. Now, this could be due to the AI becoming so sophisticated that it can actually generate life-like images, or it could be due to humanity’s inability to question what they’re viewing and whether it is true or not. Either way, this is very concerning, and if it can happen on Facebook, I’m sure it’s also happening on other social media sites as well.

Speaking of which, how can we stop something like this from happening on Lemmy and other federated sites?

This is just the next wave to me. I wish we had better automation to help people find the source of an image or detect where it came from. I’ve shown people reverse image searching a little, at least; hopefully we can see it improve to handle new forms of copies like this going forward.

It really depends on the image. Some AI-generated images of people really seem indistinguishable from a photograph. Not all, but it’s definitely going to become more common. At this point it seems like every month brings a new breakthrough in some aspect of AI regarding consistency and realism. As people work to bring Stable Diffusion video to a more reliable state, the images themselves are only going to get better.

There are a number of models out there right now that don’t have hand issues as much. There are other methods too, like using a pose to force the hands to be hidden. We’re getting to the point where we just need metadata viewers on every image, because the eye alone isn’t reliable for this. How many artists have already been accused of making “AI-like art” when they weren’t using AI at all?

Stopping it? I don’t know if you can close Pandora’s box. Personally, I’m of the opinion that flooding the world with AI images is the only way to “stop” it, simply by making people not care about it. At a certain point, you can only make so many variations of Gollum as a playing card/ninja turtle/movie star before they get boring to the public. I also have doubts that the people using this are, overall, the type of people who would have paid artists for commissions, or that it would even stop a sale from happening in the first place (my partner makes mushroom forest scenes with Stable Diffusion, but she also buys about $300 of art from our friends and local artists through the year). Like, Stable Diffusion doesn’t make oil paintings.

Little Jimmy in his room or the 60-hour-week worker? They do no harm by making these images, and it makes them happy, so why take that away from them? As for the nefarious side of it, that one is much harder, but it’s partly a matter of societal shame as well. AI may make explicit images easier to produce, but if it’s something nefarious, people will find a way to do it regardless. Video sex scams and catfishing were around long before AI. I’m not certain that these will inherently become more prevalent as the tech becomes more accessible. It’s the people posting them, which to me makes it more of a societal issue than a technological one.

However, in terms of trying to stop it, maybe there could be a hash list similar to the CSAM hash matching that gets used here. Maybe the image uploader could look for the metadata markers that come with generated images and deny those? That’s easy to work around, though, since metadata can be stripped.
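A minimal sketch of that uploader-side metadata check, in Python, assuming the generator wrote its settings into uncompressed PNG `tEXt` chunks. The key names in `SUSPECT_KEYS` are an assumption based on what some Stable Diffusion front-ends are known to write, not an authoritative list, and `looks_generated` is a hypothetical helper. As the comment says, stripping or re-saving the file removes these chunks, so a negative result proves nothing.

```python
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"

# ASSUMPTION: illustrative keys some generators use for their settings.
# This is not a complete or authoritative list of AI-generation markers.
SUSPECT_KEYS = {"parameters", "prompt", "workflow"}


def png_text_chunks(data: bytes) -> dict[str, str]:
    """Collect key/value pairs from the uncompressed tEXt chunks of a PNG.

    Compressed (zTXt) and international (iTXt) chunks are ignored and CRCs
    are not verified; this is a sketch, not a validating PNG parser.
    """
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    texts: dict[str, str] = {}
    pos = len(PNG_SIGNATURE)
    while pos + 8 <= len(data):
        # Each chunk: 4-byte big-endian length, 4-byte type, body, 4-byte CRC.
        length, chunk_type = struct.unpack(">I4s", data[pos:pos + 8])
        body = data[pos + 8:pos + 8 + length]
        if chunk_type == b"tEXt":
            # tEXt body is: Latin-1 keyword, NUL separator, Latin-1 text.
            key, _, value = body.partition(b"\x00")
            texts[key.decode("latin-1")] = value.decode("latin-1")
        elif chunk_type == b"IEND":
            break
        pos += 12 + length  # skip length + type + body + CRC
    return texts


def looks_generated(data: bytes) -> bool:
    """Hypothetical upload filter: flag PNGs carrying one of the assumed keys.

    False only means no marker was found; stripped metadata defeats this.
    """
    return any(key.lower() in SUSPECT_KEYS for key in png_text_chunks(data))
```

An uploader would pass the raw bytes of the file, e.g. `looks_generated(open(path, "rb").read())`. It only catches people who don't bother to strip metadata, which is exactly the weakness pointed out above.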

I dunno. I think we’ve gotta EEE AI. Embrace AI images by flooding society with their presence, Extend AI images by getting the ability to use it into the hands of everyone, and then Extinguish the power that generated images hold over people because fake pictures don’t matter in the slightest.

No worries. I log on Facebook and all I see are videos catered to me based on shit I got sucked into last time I reluctantly went on Facebook.

Remember when Facebook was good? Yeah, me either

Wonder how many of the posts here are ai generated. Are we even talking to real people anymore or are we in our own ai generated bubbles engaging in simulated discourse?

Am I real or am I just an ai generated simulacrum?

I think the chances of the fediverse having this are generally lower. I saw a rough estimate that puts our bubble at 1.5 million. By comparison, reddit is at 500 million and YouTube at 2.5 billion.

Yes, we are a bunch of nerds in that 1.5m number but we also value human input and generally seem to only use practical bots - and some people don’t even like those. We also have the bot account option, which should inspire more trust, though we have to trust that people actually use it.

Compare that to reddit or YouTube, which I’ve personally seen used as testing grounds for rolling out bot accounts, with whole subs dedicated to it. It’s not that it doesn’t, can’t, or won’t happen here; I know we have a number of repost bots from various instances. I’m more just saying that I think being so small helps. When the dead Internet arrives, the fediverse will have been one of the last bastions of human interaction on the Internet.

Except for mastodon. I see a lot of bots there compared to lemmy/Kbin

I, for one, welcome our AI overlords.

I mean… If it’s good or clever content do we really care?

I mean take the average media literacy of the relatively younger folks on Reddit (atrocious) and then realize that those are incredibly tech savvy compared to FB’s audience.

I would be surprised if this sort of thing isn’t already happening in reddit too.

Several AI posts have made the rounds on Reddit, but now that Reddit is 90% bots reposting and upvoting content by other bots, I don’t think anyone cares much.

I’m reasonably sure half the stories on subreddits like r/amitheasshole are written by ChatGPT at this point. Doesn’t matter, of course; drama is drama, whether it’s drama from a stranger on the other side of the world or generated by a machine.

Especially egregious because the images are basically the same as the original photos; they just used ControlNet to alter the details. It shouldn’t be that difficult to stop this kind of thing, assuming Facebook even wanted to. It looks like it’s entirely possible to find the original popular post the AI posts are copying to prove this is going on, and people are already doing the volunteer work of tracking it all; there would just need to be a way to report this stuff and confirm it. I doubt Facebook wants to do this, though, since engagement is engagement.
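One way that matching could be automated is perceptual hashing. Below is a minimal difference-hash (dHash) sketch in plain Python. It assumes both images have already been converted to grayscale and shrunk to 8 rows by 9 columns (in practice an imaging library would do that step), and the threshold of 10 differing bits out of 64 is an illustrative guess, not a tuned value. `dhash`, `hamming`, and `is_near_duplicate` are hypothetical names. The idea is that an edit which keeps the overall composition flips only a few bits, while an unrelated image should differ in many more.

```python
# Difference hash (dHash): a common perceptual-hash technique for spotting
# near-duplicate images. ASSUMPTION: the caller has already converted each
# image to grayscale and resized it to 8 rows x 9 columns.

HASH_ROWS = 8
HASH_COLS = 9  # one extra column so each row yields 8 left/right comparisons


def dhash(pixels: list[list[int]]) -> int:
    """Return a 64-bit hash: one bit per adjacent pixel pair, set if left > right."""
    if len(pixels) != HASH_ROWS or any(len(row) != HASH_COLS for row in pixels):
        raise ValueError("expected an 8x9 grayscale grid")
    bits = 0
    for row in pixels:
        for left, right in zip(row, row[1:]):
            bits = (bits << 1) | (1 if left > right else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of bit positions where two hashes differ."""
    return bin(a ^ b).count("1")


def is_near_duplicate(a: list[list[int]], b: list[list[int]], threshold: int = 10) -> bool:
    """Hypothetical check: treat two images as copies if their hashes differ in few bits.

    The default threshold of 10 is an illustrative assumption, not a tuned value.
    """
    return hamming(dhash(a), dhash(b)) <= threshold


if __name__ == "__main__":
    original = [[(r * 37 + c * 53) % 256 for c in range(HASH_COLS)] for r in range(HASH_ROWS)]
    tweaked = [row[:] for row in original]
    tweaked[3][4] += 1  # a tiny local "detail" change
    inverted = [[255 - v for v in row] for row in original]
    print(is_near_duplicate(original, tweaked))   # True
    print(is_near_duplicate(original, inverted))  # False
```

A hash like this only catches copies that keep the original layout. A heavier regeneration could slip past it, which is the objection raised further down the thread.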

Couple problems:

- The availability of the tools makes the potential scale of the problem pretty drastic. It may take teams of people to track this stuff down, and there’s no guarantee that you’ll catch all of it or that you won’t have false positives (which would really piss people off).
- In a culture that seems obsessed with ‘free speech absolutism’, I imagine the Facebook execs would need to have a solid rationale to ban ‘AI generated content’, especially given how hard it would be to enforce.

That said, Facebook does need to tamp this down, because engagement isn’t engagement when it’s taken over by AI, because AI isn’t compelled to buy shit from advertising, and it can create enough noise to make ad targeting less useful for real people.

I personally think people will need to adapt to smaller, more familiar networks that they can trust, rather than trying to play whack-a-mole with AI content that continues to get better.

We’re overdue for some degrowth, especially when it comes to social media.

> there’s no guarantee that you’ll catch all of it or that you won’t have false positives

You wouldn’t need to catch all of it, the more popular a post gets the more likely at least one person notices it’s an AI laundered repost. As for false positives, the examples in the article are really obviously AI adjusted copies of the original images, everything is the same except the small details, there’s no mistaking that.

> in a culture that seems obsessed with ‘free speech absolutism’, I imagine the Facebook execs would need to have a solid rationale to ban ‘AI generated content’, especially given how hard it would be to enforce.

Personally I think people seem to hate free speech now compared to how it used to be online, and are unfortunately much more accepting of censorship. I don’t think AI generated content should be banned as a whole, just ban this sort of AI powered hoax, who would complain about that?

> the more popular a post gets the more likely at least one person notices it’s an AI laundered repost. As for false positives, the examples in the article are really obviously AI adjusted copies of the original images, everything is the same except the small details, there’s no mistaking that.

I just don’t think this bodes well for Facebook if a popular post or account is discovered to be fake AI-generated drivel. And I don’t think it will remain obvious once active countermeasures are put into place. It really, truly isn’t very hard to generate something that is mostly “original” with these tools with a little effort, and I frankly don’t think we’ve reached the top of the S-curve with these models yet. The authors of this article make the same point: outside of personally affected individuals recognizing their own adapted content, there’s only a slim chance these hoax accounts are recognized before they reach viral popularity, especially as these models get better.

Relying on AI content being ‘obvious’ is not a long-term solution to the problem. You have to assume it’ll only get more challenging to identify.

I just don’t think there’s any replacement for shrinking social media circles and abandoning the ‘viral’ nature of online platforms. But I don’t even think it’ll take a concerted effort; I think people will naturally grow distrustful of large accounts and popular posts and fall back on what and who they are familiar with.

Fakebook

Real fake books are far cooler than Facebook

This is less of an issue if you judge everything that isn’t first hand from a known friend or family member as suspect or at least just a waste of time. Facebook used to be a place to talk to people you knew in the real world. You could ignore anything they reposted and still engage with the actual examples of their own experiences that they posted. But now it’s so flooded with ads and listicles and clickbait and video clips that it’s not even worth trying to keep up with the people you actually know.

It’s really disgusting. It was a great platform for keeping in touch with long-distance friends and family. If you kept your friends list trimmed to people you know, then it was actually a really fun platform. Now it’s like all the worst parts of the corporate internet glommed together on a single site. I see maybe 1-2 of my actual friends’ posts, and the rest is all absolute crap. Hundreds of billions of dollars wasn’t enough for Zuck? Nope! He just had to go and squeeze every last cent out of the site, even if it meant burning it to the ground. It’s not even worth visiting anymore. I was still visiting to see my memories, but now he’s slowly breaking that functionality too. Congratulations Facebook, you’re awful.

I still need Facebook unfortunately to keep in touch with some friends using messenger. Also marketplace is usually the most active classifieds where I am.

AI generated content isn’t stealing. That being said, Facebook is literally only reposts, there is practically zero original content. The AI generated stuff is amongst the few things that isn’t technically stolen.

> AI generated content isn’t stealing.

You might want to read the article.

The AI generated content is the only part that isn’t plagiarism in these examples.

I dunno dude, taking an image-to-image generation with 90% strength to just change a few details to make it look like your work sure sounds like stealing to me

That may be forgery or a copyright violation, but it still isn’t stealing.

Sure it is. If you make minor AI alterations and claim the new version as yours, you’re stealing credit for someone else’s work.

It may sound pedantic, but that person is correct: it’s not stealing. Stealing involves taking a physical thing away from its owner. Once the thing is stolen, the owner doesn’t have it anymore.

If you reproduce someone’s art exactly without permission that’s a copyright violation, not stealing. If you distribute a derivative work (like using img2img with Stable Diffusion) without permission that also is a (lesser) form of copyright violation. Again, not stealing/theft.

TL;DR: If you’re making copies (or close facsimiles) of something (without permission) that’s not stealing it’s violating copyright.

You may want to read through what I actually wrote again.

Someone takes an image, runs it through image-to-image AI with 90% strength (meaning ‘make minor changes at most’), and then claims it as their own.

That last part there is what makes it stealing. It’s not the theft of the picture. It’s the theft of credit and of social media impressions. The latter sounds stupid at first, until you realise that it is an important, even essential part of marketing for many businesses, including small ones.

Now sure, technically someone who likes and comments under the fake can also do the same underneath the real one but in reality, first exposure benefits are important here: the content will have its most impact, and therefore push viewers to engage in some way, including at a business level, the first time they see it. When something incredibly similar pops up, they’re far more likely to go “I’ve already seen this. Next.”.

Human attention is very much a finite thing. If someone is using your content to divert people away from your brand, be it personal or professional, it has a very real cost associated with it. It is theft of opportunity, pure and simple.

It’s still not stealing. It’s plagiarism or fraud or any number of other terms, but stealing necessarily requires the deprivation of a limited, rivalrous thing, like money or property. You can’t steal fame or exposure or credit, except poetically. And by that point, the word becomes so watered down that it’s meaningless. You might as well say I’m stealing your life seconds at a time by writing this extra sentence.

The purpose of using the term stealing here is only to borrow the negative moral connotations of the term, but it doesn’t communicate clearly what exactly is happening.

It’s perfectly valid to say you consider it morally equivalent with theft, but it’s not stealing.

It’s stealing. Training is theft. It is NOT like “a person looking at art in a museum and gaining inspiration”. AI has no inspiration or creativity. It’s an image autocomplete algorithm using millions of other people’s images as bases to combine and smooth out. That’s all it does. If I took a bunch of Monet paintings, created some brushes in Photoshop from them, and used those to create a new work, those brushes would still be theft. At best, it’d be a collage art piece I’d have to credit Monet for.

You should read this article by Kit Walsh, a senior staff attorney at the EFF. The EFF is a digital rights group that recently won a historic case: border guards now need a warrant to search your phone.

jesusfuckingchrist. Regurgitate much?

What if they’re AI? Hence the regurgitation.

Luddites always lose. Just a reminder.

I know you’re wanting to argue your point, so I’m only going to say one thing. Yes, it is sometimes stealing. Not all of the time, but some of the time. If you use a live artist as a prompt for selling and that artist isn’t getting paid (like musicians now do with sampling), then yes it’s stealing. You’re not only stealing their work, but you’re also stealing their business.

You should read this article by Kit Walsh, a senior staff attorney at the EFF if you haven’t already. The EFF is a digital rights group that recently won a historic case: border guards now need a warrant to search your phone.

Thanks, I hate it. Your article goes into the current law and how it doesn’t compare to the new way of stealing. The spirit of copyright law is: if you made it, you have the rights to it because you made it. You can sell it, decide what happens to it, etc., for a certain amount of time. The laws need to change; artists shouldn’t have to just accept that their work and style will be stolen because corporations figured out a way to steal even more from an already underpaid group.

This part especially, is absolute bullshit:

> The theory of the class-action suit is extremely dangerous for artists. If the plaintiffs convince the court that you’ve created a derivative work if you incorporate any aspect of someone else’s art in your own work, even if the end result isn’t substantially similar, then something as common as copying the way your favorite artist draws eyes could put you in legal jeopardy.

They’re trying to say, “Haven’t you been “inspired” by someone else? How can you judge this widdle ole’ computer program then?” Fuckers, please. Someone being inspired by someone else is already a gray area in copyright law. See any musician being sued by the Marvin Gaye family.

Now take the analogy of a single artist who makes a decent living from their own style of art. Now take 10,000 artists trying to copy the “style” of that artist and putting those completed works out in 10 seconds, as opposed to your work, which takes skill building, your imagination, and time. The current copyright laws aren’t meant for AI; they should be ignored as a basis for anything.

The article does a very good job of showing how it isn’t stealing, particularly this part:

> Fair use protects reverse engineering, indexing for search engines, and other forms of analysis that create new knowledge about works or bodies of works. Here, the fact that the model is used to create new works weighs in favor of fair use as does the fact that the model consists of original analysis of the training images in comparison with one another.

This isn’t a new way of “stealing” it’s just a way to analyze and reverse engineer images so you can make your own original works. In the US, the first major case that established reverse engineering as fair use was Sega Enterprises Ltd. v. Accolade, Inc in 1992, and then affirmed in Sony Computer Entertainment, Inc. v. Connectix Corporation in 2000. So this is not new at all.

I understand that you are passionate about this topic, and that you have strong opinions on the legal and ethical issues involved. However, using profanity, insults, and exaggerations isn’t helping this discussion. It only creates hostility and resentment, and undermines your credibility. If you’re interested, we can have a discussion in good faith, but if your next comment is like this one, I won’t be replying.

Not doing any of that, but okay.

Edit: I guess I was cussing, lol. It’s the internet and I think you’ll be fine.

The Marvin Gaye comment sounds like you are defending copyright trolling

Nope, just pointing out that they won one and lost another, it’s a gray area. Nice try though.

Ah ok. Nice try of what? I don’t have a horse in this race

They’re not selling this picture…lol stop lying.

AI art is stealing, though. Artists are afraid to post their art online and have their work used in a machine learning model by some tech guys who have never produced anything artistic in their lives.

Why is it that the people who decided to devote their life to filling the world with art are the most angry about custom art being abundant and free?

Because they want to fill the world with art in exchange for money.

Ideally, the solution that would benefit everyone would focus on dealing with that need for money, not on trying to keep old industries operating exactly as they always operated.

It wasn’t the windmills that destroyed the common areas, it wasn’t the sails that enslaved people the world over, it wasn’t the cotton gin that drug the freemen back to their captors. It was and still is the powerful, those willing to use violence on their behalf and those unwilling to stand against it that do these things.

Drug?

EDIT: Did you mean “dragged”?

Why don’t managers and doctors and tech workers offer their stuff for free now? I mean, aren’t they just filling the world with amazing products and services?

So you’re saying that every piece of original, proprietary code can be used right now, free of charge, in under 10 seconds? I can just say, “app that makes Pac-Men eat ghosts that look like Ghibli ghosts” and claim that as mine. Cool! Where can I get this?

Here, look what I made! It’s totally mine and I’m sure Disney will be okay with it:

Yeah, you may be able to get all the way to a playable game if you use that prompt in a well set up AutoGen app. I would be interested to see if you give it a shot, so please share if you do. It’s such a cool time to be alive for “idea” people!

I’m working on an art project about it right now, you can see it here: https://sh.itjust.works/post/11256654?scrollToComments=true

I’m adding quite a few more companies, so it should be interesting.

Have you seen my new art project? https://sh.itjust.works/post/11256654?scrollToComments=true

I have a few relatives who seem incapable of understanding that miraculously high-definition photos from the 1800s containing never-before-seen imagery of lumberjacks posing with 12-foot-tall sasquatches could possibly be inauthentic.

I’m doing a fun art project now, I’m feeling like from the conversations I’ve been having in this thread about AI, that I’m totally okay with selling these logos as is. I put in inspiration prompts and “in the style of” so I’m sure I’m good.