Taylor Swift is living every woman’s AI porn nightmare — Deepfake nudes of the pop star are appearing all over social media. We all saw this coming.

I feel like I live on the Internet and I never see this shit. Either it doesn’t exist or I exist on a completely different plane of the net.

You ever somehow get invited to a party you’d usually never be at? With a crowd you never ever see? This is that.

You ever somehow get invited to a party you’d usually never be at? With a crowd you never ever see?

No?

You ever somehow get invited to a party

Also no 😥

Understandable, have a nice day

Why not just say “no, thank you” to such an invitation?

Well obviously you’d leave pretty immediately. A friend has never invited you somewhere new/unknown?

Because that’s how you meet new people?

That’s the neat part. As an introvert I hate meeting new people. On the other hand I’m happy to meet different pixel blobs on my screen.

Yeah but sometimes you need stuff from the outside world like food and shit. God forbid you like drugs as an introvert… lol that’s a painful quandary

Shopping is easy. Everything online, food on self checkout. Drugs are luckily not my thing.

come to my taylor swift deep fake nude party

sure I’d love to meet new people

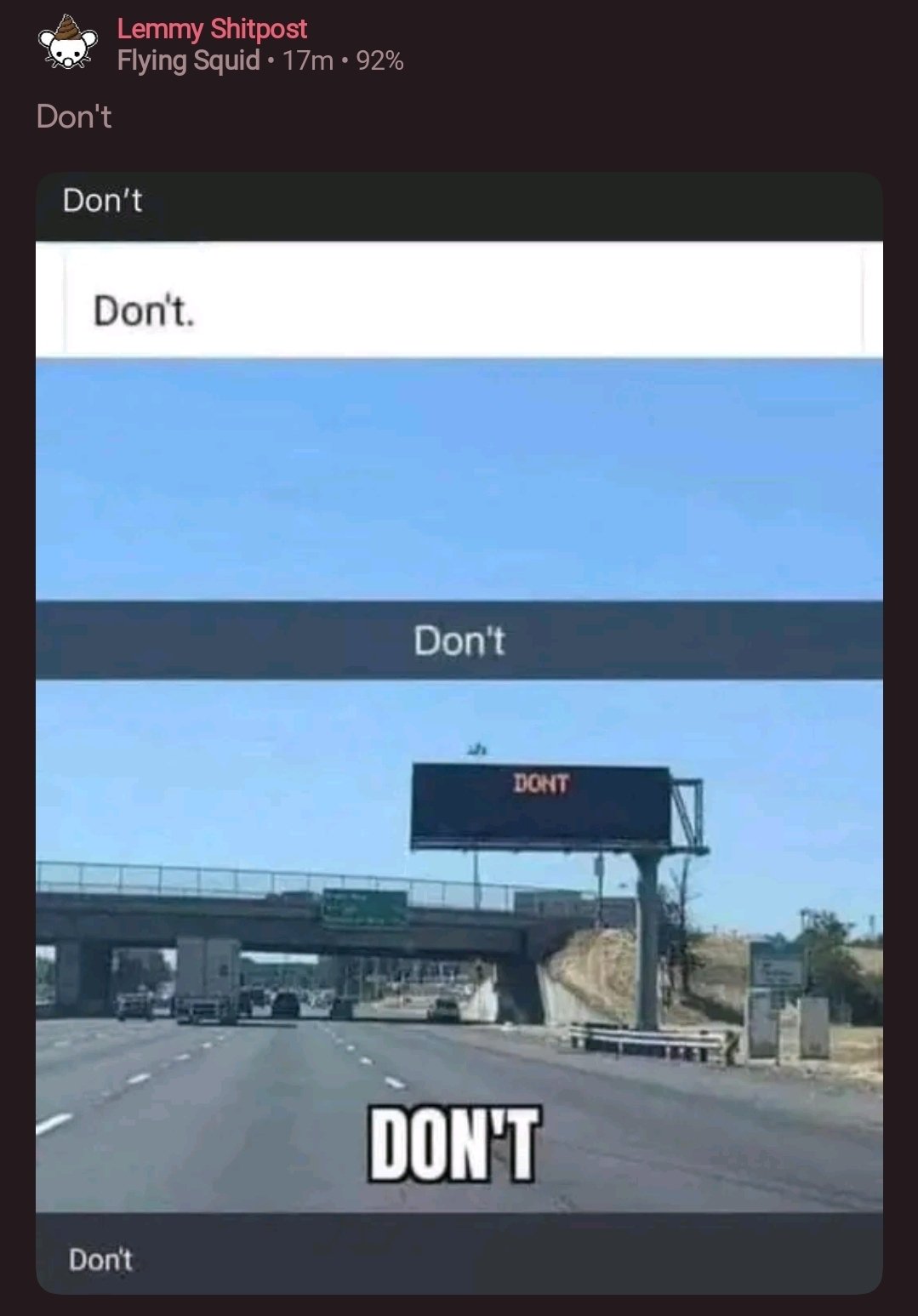

On the Internet, censorship happens not by having too little information, but too much information in which it is difficult to find what you want.

We all have only so much time to spend on the Internet and so necessarily get a filtered experience of everything that happens on the Internet.

Are you saying that not being exposed to things you don’t want is censorship?

No, that is not what I’m saying, mostly because I don’t think it is true. I’m saying that nowadays there are nearly all kinds of information one can think of somewhere out there on the Internet; but if it is only in relatively obscure places and you don’t know where to look for it, then it is still de facto censored by having too much other information out there.

I don’t think that can be called censorship as it is not deliberate suppression of info, it’s just being drowned out or ignored.

I’ll wait for Taylor’s version.

I wasn’t expecting a joke this good. Thank you for that.

I wonder if this winds up with revenge porn no longer being a thing? Like, if someone leaks nudes of me I can just say it’s a deepfake?

Probably a lot of pain for women from mouth breathers before we get there from here.

I mean, not much happened to protect women after The Fappening, and that happened to boatloads of famous women with lots of money, too.

Arguably, not any billionaires, so we’ll see I guess.

This has already been a thing in courts with people saying that audio of them was generated using AI. It’s here to stay, and almost nothing is going to be ‘real’ anymore unless you’ve seen it directly first-hand.

first-hand.

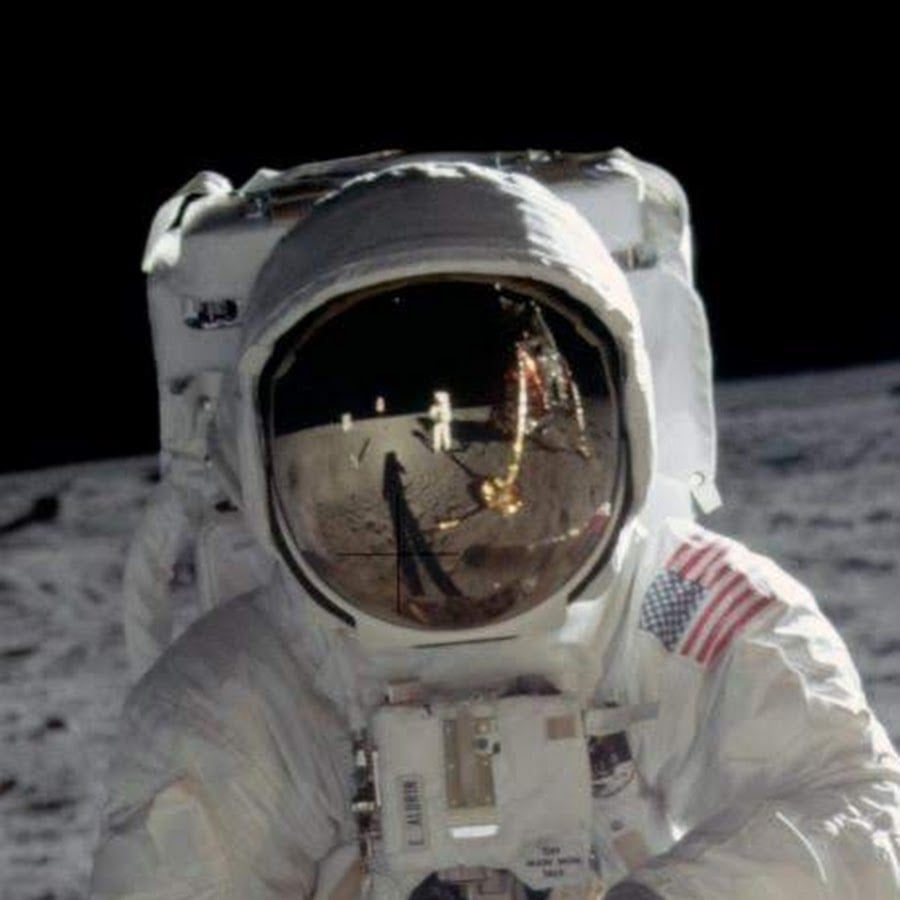

the first-hand in question:

If shitty non-real person AI-generated image deformity porn with body parts like this image isn’t real, I bet it will be. There, you’re all welcome.

It’s already a thing? I mean, you know, rule 34

Thereby furthering erosion of our democracies and continuing the slide into Putin-confusion.

We need trustworthy sources of news more than ever

Why would it make revenge porn less of a thing? Why are so many people here convinced that as long people say it’s “fake” it’s not going to negatively affect them?

The mouth breathers will never go away. They might even use the excuse the other way around, that because someone could say just about everything is fake, then it might be real and the victim might be lying. Remember that blurry pictures of bigfoot were enough to fool a lot of people.

Hell, even if others believe it is fake, wouldn’t it still be humiliating?

I think you’re underestimating the potential effects of an entire society starting to distrust pictures/video. Yeah a blurry Bigfoot fooled an entire generation, but nowadays most people you talk to will say it’s doctored. Scale that up to a point where literally anyone can make completely realistic pics/vids of anything in their imagination, and have it be indistinguishable from real life? I think there’s a pretty good chance that “nope, that’s a fake picture of me” will be a believable, no question response to just about anything. It’s a problem

There are still people who believe in Bigfoot and UFOs, and there are still people falling for hoaxes every day. To the extent that distrust is spreading, it’s not manifested as widespread reasonable skepticism but as the tendency to double down on what people already believe. There are more flat earthers today than there were decades ago.

We are heading to a point that if anyone says deepfake porn is fake, regardless of reasons and arguments, people might just think it’s real just because they feel like it might be. At this point, this isn’t even a new situation. Just like people skip reputable scientific and journalistic sources in favor of random blogs that validate what they already believe, they will treat images, deepfaked or not, much in the same way.

So, at best, some people might believe the victim regardless, but some won’t no matter what is said, and they will treat them as if those images are real.

This strikes me as correct, it’s kind of more complicated than just the blanket statement of “oh, everyone will have too calloused of a mind to believe anything ever again”. People will just try to intuit truth from surrounding context in a vacuum, much like how they do with our current every day reality where I’m really just a brain in a vat or whatever.

I hope someone sends your mom a deepfake of you being dismembered with a rusty saw. I’m sure the horror will fade with time.

What a horrible thing to wish on a random person on the internet. Maybe take a break on being so reactionary, jesus

The default assumption will be that a video is fake. In the very near future you will be able to say “voice assistant thing show me a video of that cute girl from the cafe today getting double teamed by robocop and an ewok wearing a tu-tu”. It will be so trivial to create this stuff that the question will be “why were you watching a naughty video of me” rather than “omg I can’t believe this naughty video of me exists”.

The mouth breathers will never go away. You’re the mouth breather.

Name calling. Real classy.

Australia’s federal legislation making non-consensual sharing of intimate images an offense includes doctored or generated images because that’s still extremely harmful to the victim and their reputation.

Why do you think “there” is meaningfully different from “here”?

A deep fake is still humiliating

Fake celebrity porn has existed since before photography, in the form of drawings and impersonators. At this point, if you’re even somewhat young and good-looking (and sometimes even if you’re not), the fake porn should be expected as part of the price you pay for fame. It isn’t as though the sort of person who gets off on this cares whether the pictures are real or not—they just need them to be close enough that they can fool themselves.

Is it right? No, but it’s the way the world is, because humans suck.

Honestly, the way I look at it is that the real offense is publishing.

While still creepy, it would be hard to condemn someone for making fakes for personal consumption. Making an AI fake is the high-tech equivalent of gluing a cutout of your crush’s face onto a playboy centerfold. It’s hard to want to prohibit people from pretending.

But posting those fakes online is the high-tech, scaled-up version of xeroxing the playboy centerfold with your crush’s face on it, and taping up copies all over town for everyone to see.

Obviously, there’s a clear line people should not cross, but it’s clear that without laws to deter it, AI fakes are just going to circulate freely.

AI fake is the high-tech equivalent of gluing a cutout of your crush’s face onto a playboy centerfold.

At first I read that as “cousin’s face” and I was like “bru, that’s oddly specific.” Lol

Yup, it’s all the more frustrating when you take into account that social media sites do have the capability to know if an image is NSFW, and if it matches the face of a celebrity. Knowing Taylor’s fan base, they are probably quickly reported.

It’s mainly Twitter as well, and it’s clear they are letting this go on to drum up controversy.

Humans are horrible, but a mainstream social media platform should not be a celebration of it. People need to demand change and then leave if ignored. I seem to hear people demanding change. The next step has more impetus.

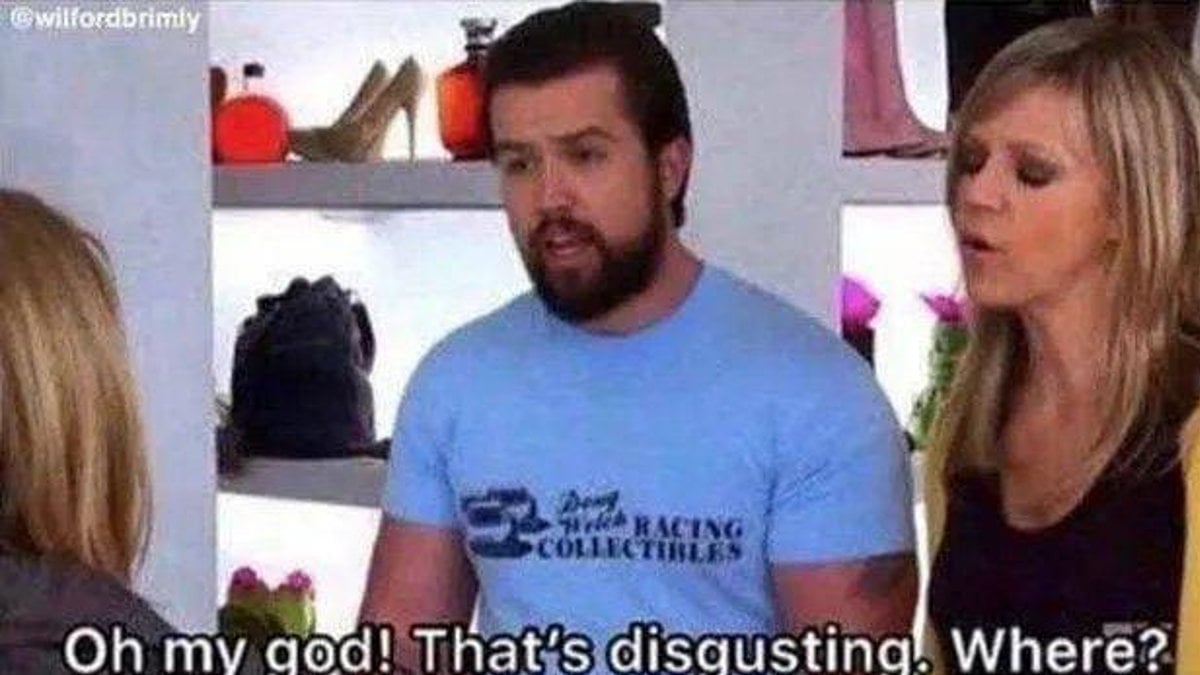

WHAT?? DISGUSTING! WHERE WOULD THESE JERKS PUT THIS? WHAT SPECIFIC WEBSITE DO I NEED TO BOYCOTT?

Google Search didn’t really turn up much, far less than if you were to search up something like ‘Nancy Pelosi nude’ even, it kind of seems overblown and the only reason it’s gotten any news is because of who it happened to. Just being famous nowadays seems like you’re just going to see photoshopped or deepfake porn of yourself spread all over the internet.

Was this joke ever funny?

Time and place. Don’t be a creep.

Yes I get the reference.

Lol, seems like some people didn’t get it.

I didn’t get it.

It’s Always Sunny in Philadelphia.

Went to Bing and found “Taylor swift ai pictures” as a top search. LOTS of images of her being railed by Sesame Street characters

I’m gonna be real…

When I stumbled upon (didn’t go looking to be clear) her and Oscar the Grouch on a pile of trash… It sent me.

I know the whole situation is gross but I couldn’t stop laughing due to the sheer absurdity. Like… What???

I had to add “Muppet” to the search term, and even then I only got one censored image, so I don’t know what your search history is like, but the Bing algorithm definitely has some thoughts about what you like.

I guess you have safe search enabled

Bing has been surprisingly decent over the last few years.

God what a garbage article:

On X—which used to be called Twitter before it was bought by billionaire edgelord Elon Musk

I mean, really? The guy makes my skin crawl, but what a hypocritically edgy comment to put into an article.

And then zero comment from Taylor Swift in it at all. The author is basically just speaking for her. Not only that, but she anoints herself spokesperson for all women… while also pretty conspicuously ignoring that men can be victims of this too.

Don’t get me wrong, I’m not defending non-consensual AI porn in the least, and I assume the author and I are mostly in agreement about the need for something to be done about it.

But it’s trashy, politically charged, and biased articles like this that make people take sides on things like this. Imo, the author is contributing to the problems of society she probably wants to fix.

hypocritically edgy comment to put into an article.

It’s Vice, their whole brand is edgy. Calling Elon an edgelord is very on brand for them.

pretty conspicuously ignoring that men can be victims of this too.

Sure, but women are disproportionately affected by this. You’re making the “all lives matter” argument of AI porn

make people take sides on things like this

People should be taking sides on this.

Just seems like you wanna get mad for no reason? I read the article, and it doesn’t come across nearly as bad as you would lead anyone to believe. This article is about deepfake pornography as a whole, and how it can affect (and more importantly HAS affected) women, including minors. Sure it would have been nice to have a comment from Taylor, but I really don’t think it was necessary.

It’s Vice, their whole brand is edgy. Calling Elon an edgelord is very on brand for them.

I’ve come across this source before and don’t recall being so turned off by the tone. If this is on brand for them, then my criticism is not limited to the author.

Sure, but women are disproportionately affected by this. You’re making the “all lives matter” argument of AI porn

You have a point, but I disagree. Black lives matter is effectively saying that black lives currently don’t matter (mainly when it comes to policing). All lives matter is being dismissive of that claim because no one really believes that white lives don’t matter to police. Pointing to the fact that there are male victims too is not dismissive of the fact that women are the primary victims of this. It’s almost the opposite and ignoring males is being dismissive of victims.

People should be taking sides on this.

Sorry, wasn’t clear on that point. What I was saying here is this will make people take sides based on their politics rather than on the merits of whether it’s wrong in and of itself.

I really don’t think it was necessary.

Neither was her speaking for Swift, nor for all of womankind, nor only making it about women, nor calling Musk an edge lord. You seem to be making the same argument as me.

On the contrary, I find it more ridiculous when news media pretends like nothing is wrong over at Twitter HQ. I wish more journalists would call Musk out like this every time they’re forced to mention Twitter.

Can you really see nothing other than “pretending nothing is wrong” and “calling Musk an edge lord?”

I see the media calling out the faults regularly without needing to act like… well, an edge lord.

Professionalism was thrown out the window the moment orange man became president. The Republicans play dirty, so everyone else has to as well, or else they’ll walk all over us. Taking the high ground is a dead concept.

I strongly disagree, but this is completely unrelated to what I said.

I have ADHD so I forgot what we were talking about even before I started commenting.

It’s Vice.

Removed by mod

Had big “people calling people edgelords are the real edgelords” type vibes to it, which I’ll file in the circular file right next to its cousin “people calling people racists are the real racists”.

Edit: A couple of posts down the dude almost says that quote verbatim.

I disagree. To pretend nothing is wrong is worse. The author was accurate in their description here.

This is the second poster here who can’t seem to understand that there is a whole world of things between “pretending nothing is wrong” and acting like a child by calling people “edge lord.”

Last time I checked, on my front page, there was an article from the NY Times about how X is spreading misinformation and Musk seems to be part of it. Yet they managed to point out this problem without using the term edge lord. Is this shocking to you?

Every thread man. Feels like reddit with tired references. You’re not even the first in this thread to reference it.

Now you do it

Idk if this one will get old for me.

I def appreciate IAS, but it’s just any time porn or leaks are mentioned, you can bet there will be a couple comments just saying “But where are they so I can avoid them?!” The episode is almost 15 years old at this point; I guess I’m just over it.

People have been doing these for years even before AGI.

Now, it’s just faster.

Edit: Sorry, I suppose I should say LLM AI

Agi? Where

There is no point waiting for a response…the threat has been neutralized. Now repeat after me: There is no AGI.

AGI continues to exist purely in the realm of science fiction.

I think they might have used AGI to mean “AI Generated Images”, which I’ve seen used in a few places, not knowing that AGI is already a term in the AI lexicon.

And this is why I don’t want to be famous. Being famous exposes your name to the crazies of the world, and leaves you blissfully unaware until the crazies snap.

“… privacy is something you can sell, but you can’t buy it back.” -Bob Dylan

Nightmare? Doesn’t it simply give them the chance to just say any naked pic of them is fake now?

Oh I’m sure that must be a very nice thing to talk out with your mother or significant other.

“Don’t worry they are plastering naked pictures of me everywhere, it’s all fake”

Is your mother like under the impression that you are a virgin or something? My mother knows where my kids came from.

My mother wouldn’t be thrilled to see it happening. Would yours?

She’d likely have a good laugh, because she is not a prude. If she was, I’d care less about what she thinks.

Well if they think that’s your fault they’re pretty shitty

Yeah, but it’s still humiliating for everyone involved, never mind the additional harassment that might bring.

Frankly, folks saying “everyone will just start assuming all porn is fake and nobody will mind it anymore” are just deluding themselves. I dunno if they want it to turn out like this so they won’t have to worry about the ethics of the matter, but that’s not how people behave.

Have you met my mom? She would 100% love the fact that people are trying to see her naked.

Good for her, but a lot of mothers are less enthusiastic about seeing their children like that.

So don’t look. Just know that it’s more likely to be fake.

Social media is not known for keeping things contained.

It’s also not known for making you have to use it.

Today? I wouldn’t say that so confidently (on Lemmy even). People working in media and marketing do have to use it. Even if they aren’t in it, it doesn’t mean they are immune to such a thing happening, or that people they know won’t stumble on it.

Can I see your mom naked?

Shoot your shot. Why should I give a shit?

Nice, send me her OF

I doubt she has one. Also I haven’t talked to her in years and I’m not about to start now.

An Argentine candidate got filmed while allegedly being stoned, so “someone” released an even worse video that was clearly fabricated by an AI to discredit the first one. It kind of worked.

I think more politicians should get stoned. Like drugs stoned.

How does video indicate someone is stoned?

In my mind I’m imagining it’s a video of him making a cream cheese sandwich between two strawberry Pop-Tarts, because that’s how I always knew my buddy was stoned.

Bro I’m don’t and I want that.

I’m don’t

You sure you’re not?

See also: Ford, Rob

Usually when you see rocks flying towards someone…

Kidding. I don’t know if stoned was the right word, but he looked like* he was under the influence of some substance. Then people started saying it was coke.

Strange movements, the jaw moving periodically and in an awkward position, looking very attentive with the eyes wide open… I don’t have experience with any of this and I’m not saying that he was under the influence of something, but those are the things that made public opinion decide that he was doing coke.

*according to the opinion of the populace

Edit: we call it the Maradona stance

Fair enough. It’d be worse if he was sober and acting that way.

The only sensible course of action is to deep fake nudes of all our old grody ass relatives until everyone feels desensitized towards being naked

I’m not saying she shouldn’t have complained about this. She has every right to, but complaining about it definitely made the problem a lot worse.

Streisand Effect or something.

Except she’s the most famous woman in the country, a well-established sex symbol, and already the subject of innumerable erotic fantasies and fictions.

It’s the same problem as “The Fappening” from forever ago. The fact that this exists is its own fuel and whether she chooses to acknowledge it or not is a moot point. Someone is going to talk about it and the news will spread.

This is something negative that can impact her not something negative she did that she’d like us to forget.

She oughta make a stink and put a spotlight on it if it’s gonna hurt her image.

This is a problem that doesn’t just affect her though. Not discussing the problem means it doesn’t get worse for her, but does continue to happen to other people.

Discussing it means it gets worse for her but there could potentially be solutions found. Solutions that would help her and other people affected.

Worst case scenario is no solution is found, but the people making AI porn make more Taylor Swift AI porn, which results in fewer resources being devoted towards making AI porn of other people. This makes things worse for Swift but better for other people.

TLDR; Taylor Swift is a saint and is operating on a level that us petty sinners can’t comprehend.

she drew so much attention to it though that there are more news stories than actual images at this point. if you look for the images, you’re gonna have to go through pages and pages of news articles about it at this point. not sure if it was intentional, but kinda worked…

This is probably a good thing: even if real nudes leaked, nobody would know if they’re real.

At least now, if pictures are real, you can say it’s AI generated.

Still, to be honest, I’ve never understood how some people can let one night stands film them naked.

If it’s a longtime girlfriend or boyfriend and they betray you, it’s different, but people aren’t acting in a clever way when it comes to sex.

There’s nothing wrong with recording your naked body and it being seen online by willing persons.

The people who would disrespect you for it, they’re the problem.

That’s not what I’m talking about.

I’m talking about not being careful who you’re giving these images to if you don’t want them to spread online. And, of course, the person sharing it on the web is the guilty person, not the naked victim.

Well, this very situation shows one can be as careful as they could and they might still have porn of themselves spread everywhere.

Yeah it’s true.

But at least it’s not your real intimacy this time.

Still I understand how traumatic it can be, especially for young people.

Well, you don’t have that many brain cells in the areas people are doing that thinking.

Well, targeting someone famous and going overboard with it likely results in legal responses. Perhaps this gets deepfakes the attention they need to be regulated or legally punishable. Especially when targeting underage children.

Some of them are really good too, in a realistic sense. You can tell they are AI though.